You checked your Google rankings this morning. Everything looks fine. But while you were doing that, thousands of users were asking ChatGPT, Perplexity, and Gemini which brand they should choose, and your name didn't come up once.

That's the gap most teams still can't see.

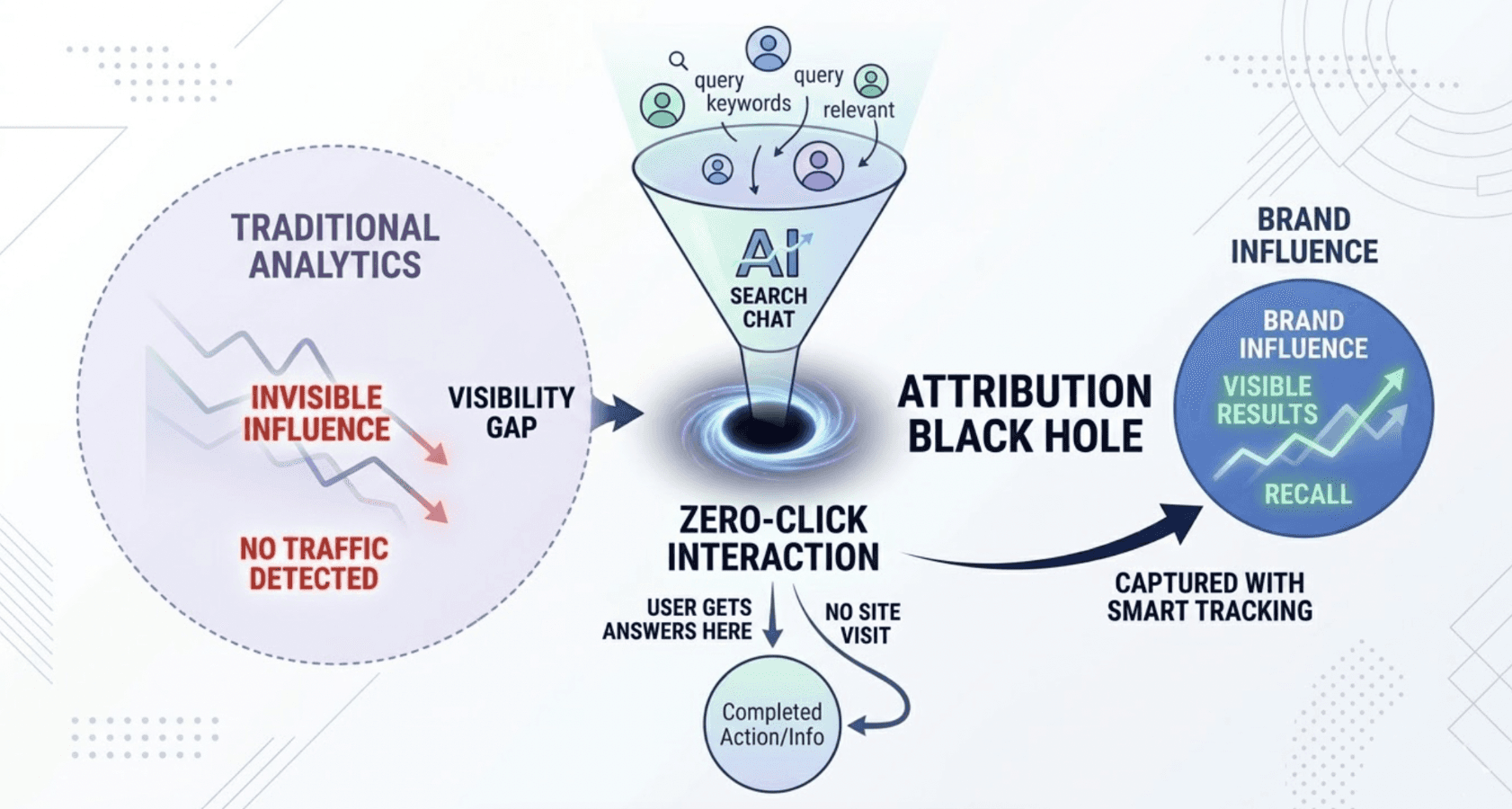

AI brand monitoring tracking is the practice of measuring how large language models represent, reference, and recommend your brand when users prompt them. It's not a replacement for traditional analytics. It's the layer your current stack is completely missing.

Traditional Brand Monitoring Has a Blind Spot You Can't Afford to Ignore

Tools like Google Alerts, Mention, and Brandwatch were built for a world where visibility meant "links on a page." Their logic is rule-based: count mentions, timestamp them, calculate Share of Voice. They tell you what happened after the fact.

AI platforms don't work that way. When someone asks ChatGPT "What's the best project management tool for a remote team?" the model doesn't retrieve a directory. It reasons through its training data and live search results to synthesize a specific recommendation. Your brand could have 50,000 social mentions and still be absent from that answer, because the model couldn't find enough structured evidence to justify including you.

That's the "attribution black hole" created by AI search: zero-click interactions where a user gets everything they need inside the chat interface without ever visiting your site. Traditional analytics never captures this. The traffic never shows up. The influence is invisible.

| Feature | Traditional Brand Monitoring | AI Brand Monitoring Tracking |
|---|---|---|
| Operational Logic | Rule-based, deterministic | Probabilistic, agentic |
| Primary Goal | Count mentions and sentiment | Monitor inclusion and recommendation patterns |
| Visibility Focus | SERPs | LLMs and answer engines |
| Success Metric | Share of Voice | Visibility Rate and Authority Weight |
| Data Stability | High (rankings change slowly) | Low (AI responses change ~70% of the time) |

The bottom line: if you're only running legacy monitoring, you're watching the wrong screen.

How AI Brand Monitoring Tracking Actually Works

The mechanics are different from anything in the traditional SEO toolkit.

Instead of tracking keyword rankings, AI brand monitoring tracks prompts: full sentences that replicate how real users ask questions. "Which accounting software should an SME choose to automate invoices?" is not a keyword; it's an intent. And it's the unit of measurement that actually matters here.

The technical process involves four layers:

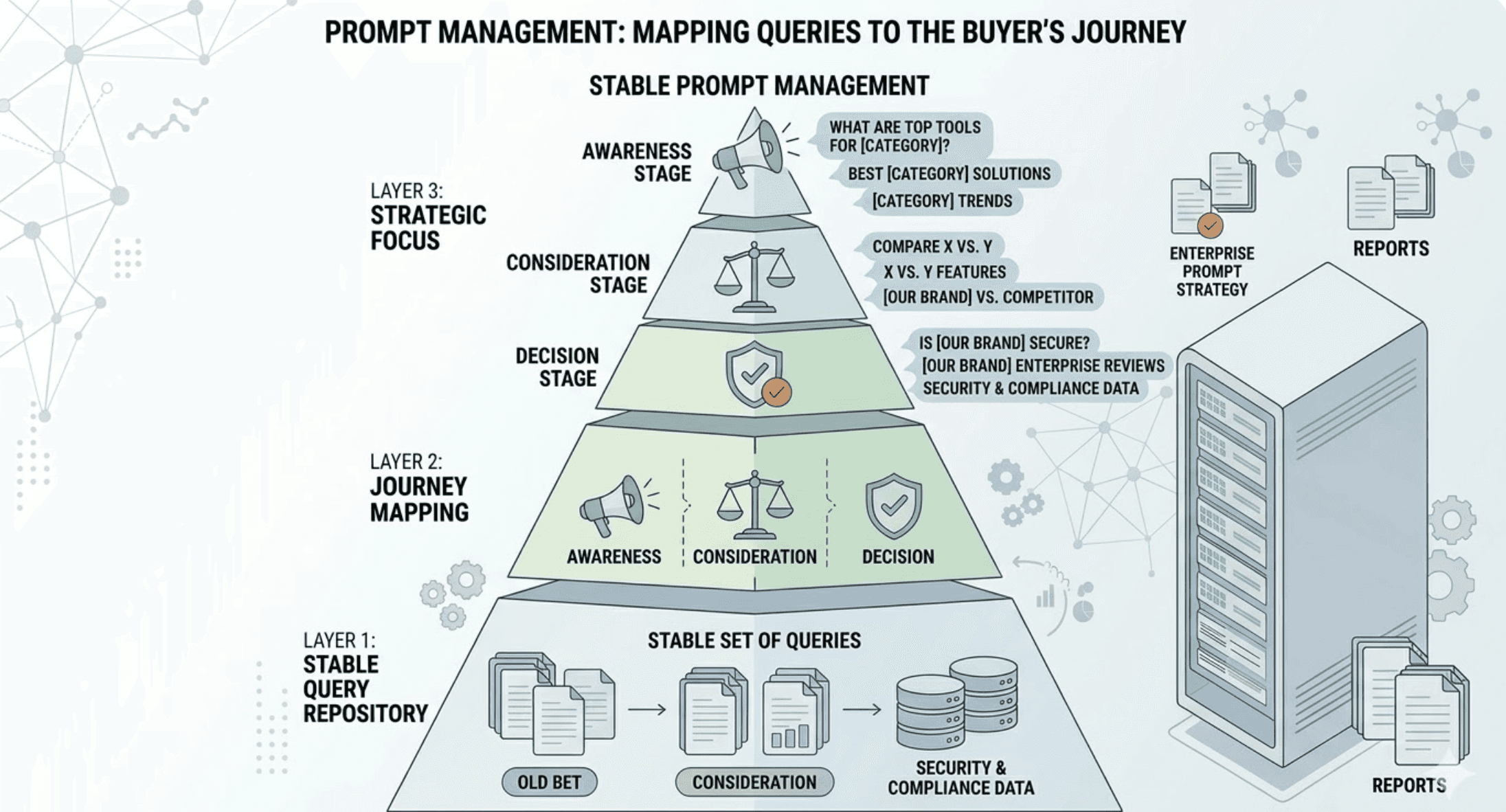

Prompt management means identifying a stable set of queries mapped to your buyer's journey, from awareness ("What are the top tools for...") through consideration ("Compare X vs. Y") to decision ("Is [brand] secure enough for enterprise use?").

Multi-engine execution means running those prompts across ChatGPT, Gemini, Perplexity, Claude, and Google AI Overviews. Each model weighs brand authority differently. A result from one platform doesn't predict your result on another.

Response capturing pulls the generated text for analysis. There's a real technical tradeoff here: UI scraping simulates human behavior but often hits legacy model versions with outdated training data. API-native integrations are more reliable because they support "groundedness" analysis, using metadata to confirm whether the AI triggered a real-time web search to generate its answer, or was working from static training data alone.

Data normalization converts unstructured conversational responses into structured metrics: mention counts, prominence scores, sentiment flags, citation frequencies.

That last step is where most DIY attempts break down. You can't pull meaning from hundreds of long-form AI responses without a systematic framework. This is where an AI GEO platform monitoring setup becomes necessary, not optional.
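To make the normalization step concrete, here is a minimal Python sketch that turns one free-text AI answer into a structured record. It is an illustration under stated assumptions, not how any particular platform does it: the `NormalizedResponse` fields, the `normalize` function, and the brand names are all hypothetical, and simple whole-word matching stands in for the entity recognition and sentiment analysis a production system would need.

```python
import re
from dataclasses import dataclass
from typing import List, Optional
from urllib.parse import urlparse


@dataclass
class NormalizedResponse:
    """Hypothetical structured record for one captured AI answer."""
    prompt: str
    engine: str
    mentioned: bool            # did the brand appear at all?
    mention_count: int         # raw number of brand mentions
    rank: Optional[int]        # 1 = first tracked brand named; None = absent
    cited_domains: List[str]   # domains of URLs quoted in the answer text


def _find_all(name, text):
    """Case-insensitive whole-word matches of a brand name."""
    return list(re.finditer(rf"\b{re.escape(name)}\b", text, flags=re.IGNORECASE))


def normalize(prompt, engine, text, brand, competitors):
    # Rank tracked brands by where each one is first named in the answer.
    first_seen = {}
    for name in [brand, *competitors]:
        matches = _find_all(name, text)
        if matches:
            first_seen[name] = matches[0].start()
    order = sorted(first_seen, key=first_seen.get)

    # Collect the domains of any URLs quoted in the answer text.
    domains = set()
    for url in re.findall(r"https?://[^\s)\]]+", text):
        host = urlparse(url).netloc.lower().rstrip(".,")
        domains.add(host[4:] if host.startswith("www.") else host)

    return NormalizedResponse(
        prompt=prompt,
        engine=engine,
        mentioned=brand in first_seen,
        mention_count=len(_find_all(brand, text)),
        rank=order.index(brand) + 1 if brand in first_seen else None,
        cited_domains=sorted(domains),
    )


# Made-up answer text and brand names, for illustration only.
answer = (
    "For remote teams, Beta Boards is the usual pick, with Acme PM a close "
    "second. Acme PM gets good reviews (https://www.reddit.com/r/projectmanagement) "
    "and appears in https://example.org/roundup."
)
record = normalize(
    prompt="Best Agile tools for remote teams",
    engine="example-engine",
    text=answer,
    brand="Acme PM",
    competitors=["Beta Boards", "Gamma Tasks"],
)
print(record)
```

Records in this shape can be stored per prompt, per engine, and per run, which is what makes the metrics in the next section computable.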

The 5 Metrics That Actually Matter (And Why "Mentions" Is the Least Important One)

Raw mention counts feel reassuring. They're also the weakest signal in this stack.

1. Visibility Rate is the foundational metric. It measures how often your brand appears in AI responses across a defined set of tracked prompts. A visibility rate of 85% means your brand appeared in 85 of every 100 responses to relevant category queries. This is your "Share of Mind" inside AI, and it's the number you optimize toward.

2. Sentiment Score measures how the AI characterizes your brand, not just whether it mentions you. AI models describe brands using adjectives that shape consumer perception: "trusted," "enterprise-grade," "expensive," "difficult to implement." NLP-based sentiment scoring tracks these characterizations over time. A mention that frames you as "a decent option for small teams" is very different from one that positions you as "the industry standard."

3. Position and Prominence reflect the fact that order is not random in synthesized answers. Being mentioned first in a list creates authority bias. A Prominence Score (0–10 scale) quantifies this: 8–10 means you led the response; 1–4 means you were an afterthought.

4. Source Coverage and Citation Share track which external domains AI is citing to validate its mentions of your brand. Reddit threads, industry roundups, Wikipedia, analyst blogs: these are the "trust nodes" AI leans on. If your competitors own those nodes, they'll consistently outrank you regardless of your website quality.

5. AI Search Volume estimates how frequently users are asking AI assistants about your category or brand. This is the hardest metric to calculate, but it's the most forward-looking one. It tells you where conversation demand is growing before it shows up in any traditional analytics tool.

| Metric | What It Measures | Strategic Value |
|---|---|---|
| Visibility Rate | Frequency of brand appearance | Baseline "Share of Mind" in AI |
| Sentiment Score | Tone and characterization | Reputational risk or brand narrative success |
| Position | Rank within multi-brand responses | Authority and recommendation priority |
| Citation Share | Your domain citations vs. competitors | Which content is acting as the "source of truth" |
| AI Search Volume | Estimated prompt frequency | Real-world demand within AI platforms |
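As a rough illustration of how three of these metrics reduce to arithmetic, the Python sketch below computes Visibility Rate, average position, and Citation Share from a handful of captured responses. The record layout (`mentioned`, `rank`, `cited_domains`), the function names, and the sample data are assumptions made for this example. Sentiment Score and AI Search Volume are left out because they need models or external data that a few lines cannot stand in for.

```python
from collections import Counter


def visibility_rate(records):
    """Percentage of tracked responses that mention the brand."""
    if not records:
        return 0.0
    hits = sum(1 for r in records if r["mentioned"])
    return 100.0 * hits / len(records)


def average_rank(records):
    """Mean position among responses where the brand appears (1 = named first)."""
    ranks = [r["rank"] for r in records if r["rank"] is not None]
    return sum(ranks) / len(ranks) if ranks else None


def citation_share(records, domain):
    """Percentage of all cited URLs that point at the given domain."""
    counts = Counter(d for r in records for d in r["cited_domains"])
    total = sum(counts.values())
    return 100.0 * counts[domain] / total if total else 0.0


# Four made-up responses for one prompt cluster.
records = [
    {"mentioned": True, "rank": 1, "cited_domains": ["reddit.com", "acme.example"]},
    {"mentioned": True, "rank": 2, "cited_domains": ["reddit.com"]},
    {"mentioned": True, "rank": 3, "cited_domains": ["wikipedia.org"]},
    {"mentioned": False, "rank": None, "cited_domains": []},
]

print(visibility_rate(records))                 # 75.0
print(average_rank(records))                    # 2.0
print(citation_share(records, "acme.example"))  # 25.0
```

Because AI responses vary between runs, these numbers are only meaningful when each prompt is sampled repeatedly and the results are averaged over a fixed window.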

AI GEO Platform Monitoring Setup: A 6-Step Checklist

Most brands stall here. They understand the concept but don't know where to start.

Step 1: Run a Baseline Audit. Query ChatGPT, Perplexity, and Google AI Overviews with 20–30 high-intent category prompts. Don't optimize yet; just observe. Document which competitors are consistently appearing and what language the AI uses to describe them. This gives you your starting visibility rate and identifies the gaps.

Step 2: Fix Technical Access. AI crawlers need frictionless access to your content. Update your robots.txt to explicitly allow bots like GPTBot and PerplexityBot. Implement an llms.txt file, a programmatic summary of brand facts designed for AI agents. Ensure critical content is server-side rendered, since many AI crawlers don't execute JavaScript reliably.

Step 3: Implement Structured Data. Schema markup is the "technical DNA" that AI systems use to justify recommendations. Beyond basic product schema, implement FAQPage, HowTo, and Organization markup with official name, logo, and sameAs links to social profiles and any Wikipedia entries. This gives the model structured "evidence" it can cite.

Step 4: Restructure Content for AI Extraction. AI models prefer "answer-first" architecture. Every major section should lead with a direct, factual answer of 40–60 words, followed by structured evidence: bullet points, numbered lists, comparison tables. Burying your key claims under SEO preamble is a fast way to get deprioritized.

Step 5: Build Third-Party Consensus. AI models rely on external consensus to validate recommendations. That means active participation in Reddit threads, updated Wikipedia and Wikidata entries, and placements in industry "Best of" roundups. These third-party citations are often more influential than your own website content.

Step 6: Deploy Continuous AI Monitoring. Set up a dedicated platform to track visibility shifts over time. Topify is built specifically for this: it monitors brand performance across ChatGPT, Gemini, Perplexity, DeepSeek, and other major AI platforms, tracking all five metrics above in a single dashboard. Connect LLM referral traffic (from domains like chatgpt.com and perplexity.ai) into GA4 with custom segments so you can correlate AI visibility changes with actual business outcomes.
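For Step 2, a quick way to sanity-check crawler access is to test your robots.txt rules before deploying them. The sketch below uses Python's standard-library `urllib.robotparser`. The robots.txt content, the example URL, and the `allowed_bots` helper are assumptions for illustration; check each vendor's documentation for the user-agent tokens its crawlers currently send.

```python
from urllib.robotparser import RobotFileParser

# Example robots.txt that explicitly allows two AI crawlers.
# Replace with the real contents of your own file.
ROBOTS_TXT = """\
User-agent: GPTBot
Allow: /

User-agent: PerplexityBot
Allow: /

User-agent: *
Disallow: /admin/
"""


def allowed_bots(robots_txt, url, bots):
    """Return {bot: True/False} for whether each bot may fetch the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {bot: parser.can_fetch(bot, url) for bot in bots}


result = allowed_bots(
    ROBOTS_TXT,
    "https://www.example.com/pricing",
    ["GPTBot", "PerplexityBot"],
)
print(result)

# A file that blocks GPTBot outright, to show what a failing check looks like.
blocked = allowed_bots(
    "User-agent: GPTBot\nDisallow: /\n",
    "https://www.example.com/pricing",
    ["GPTBot"],
)
print(blocked)
```

This only validates the rules as written. It does not confirm that a crawler actually visits, that a CDN or firewall lets it through, or that the page renders without JavaScript.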

4 Mistakes That Skew Your AI Brand Monitoring Data

Getting the setup right is half the job. Interpreting the data correctly is the other half.

Mistake 1: Ignoring training cutoffs. Most LLMs don't operate in real time. If your monitoring tool is querying a model with a 2023 knowledge cutoff, the data reflects a version of your brand that predates your latest product launch, PR campaign, or content push. Without real-time grounding (Retrieval-Augmented Generation), your visibility data is historical, not current.

Mistake 2: Publishing low-fact-density content. AI models measure what researchers call "Explanatory Efficiency." Content buried under repetitive SEO filler or anecdotal preamble gets deprioritized. The model is mathematically less likely to cite a page that forces it to parse through unnecessary text to find a single fact.

Mistake 3: Monitoring from a single location. AI responses aren't uniform across geographies. Running all your queries from one server introduces "datacenter bias," missing regional variations in how your brand is recommended to users in different markets. Accurate monitoring requires residential IPs that simulate local user behavior across your target regions.

Mistake 4: Spawning thin AI-generated content at scale. Flooding the web with low-quality pages to increase citation surface area doesn't work anymore. Modern generative search engines penalize low "Information Gain" content. If your page is a rephrased version of existing results, the AI will cite the original source, and your content won't appear at all.

What a Real AI Brand Monitoring Strategy Looks Like

A mature AI brand monitoring strategy isn't a one-time audit. It's a closed loop: measure, identify gaps, execute content and distribution changes, measure again.

The framework maps to your sales funnel. For a B2B SaaS brand, that might look like:

Awareness prompts: "How to improve team productivity across time zones" (no brand intent yet)

Consideration prompts: "Best Agile tools for remote teams" (category-level, high competition)

Decision prompts: "Is [your brand] secure enough for enterprise?" (direct validation queries)

Each layer requires different optimization. Awareness requires high-quality educational content that AI can cite. Consideration requires structured comparison pages with FAQPage schema. Decision requires trust signals: certifications, case studies, third-party reviews.
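For the consideration layer, FAQPage markup is usually embedded as JSON-LD. The Python sketch below generates that structure from question-and-answer pairs using the schema.org `FAQPage`, `Question`, and `Answer` types. The helper name `faq_page_jsonld` and the sample question and answer are hypothetical.

```python
import json


def faq_page_jsonld(qa_pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


# Placeholder content; use the real questions from your comparison page.
data = faq_page_jsonld([
    (
        "Is Acme PM suitable for remote Agile teams?",
        "Acme PM supports sprint boards and asynchronous stand-ups.",
    ),
])

# Embed the output inside <script type="application/ld+json"> on the page.
print(json.dumps(data, indent=2))
```

Generating the markup from the same source as the visible FAQ text helps keep the two in sync, so the structured data never claims something the page itself does not say.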

Here's a concrete example of the loop in action. A project management tool was invisible in ChatGPT for the prompt "Best Agile tools for remote teams." A gap analysis showed that competitors were cited because they had dedicated use-case pages and active Reddit threads. The team built a structured "Agile for Remote Teams" guide with answer-first headings and comparison tables, then added FAQPage schema and seeded relevant Reddit discussions. Using Topify to track visibility rate for that specific prompt cluster, the brand went from 0% to 65% visibility within 60 days.

That's not a marketing number. That's a measurable shift in how AI recommends the brand to buyers.

Tools and Pricing: What You'll Actually Need to Run This

The right tool depends on your scale and how deep you need to go.

| Tool | Core Strength | Starting Price | Best For |
|---|---|---|---|
| Topify | Full-stack GEO analytics + AI agent execution | $99/mo | Marketing teams, agencies, SaaS brands |
| Profound AI | Enterprise compliance (SOC 2) | $499/mo | Fortune 500 brands |
| SE Ranking | Integrated SEO + AI tracking | $103/mo | Agencies and SEO teams |
| Otterly AI | Quick visibility pulse checks | $29/mo | Startups and SMBs |

For most marketing teams and agencies managing multiple brands, Topify is the most complete option available right now.

It tracks across ChatGPT, Gemini, Perplexity, DeepSeek, Doubao, Qwen, and other major platforms. That global coverage matters: AI recommendations vary by platform, and a brand that dominates in ChatGPT may be invisible in Perplexity's sourcing logic.

The platform's seven core metrics (visibility, sentiment, position, volume, mentions, intent, and CVR) cover the full picture, not just the easy-to-measure surface. Its Source Analytics feature identifies exactly which external domains AI is currently citing to validate your brand, giving you a direct "citation wish list" for your distribution strategy. And the Prominence Score (0–10) goes beyond simple mention tracking to quantify how recommendable you actually appear in synthesized responses.

On pricing: the Basic plan at $99/mo covers 100 tracked prompts, 9,000 AI answer analyses per month, 4 projects, and tracking across ChatGPT, Perplexity, and AI Overviews. That's workable for a single brand or a small agency. The Pro plan at $199/mo scales to 250 prompts and 22,500 analyses across 8 projects, which is where multi-brand teams or agencies managing 3–5 clients tend to land. Enterprise starts at $499/mo with a dedicated account manager and custom configurations.
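A quick back-of-the-envelope check on those plan limits, using only the figures quoted above. The `plan_budget` helper is illustrative, and the source does not say how analyses are split across engines or runs.

```python
# Plan figures as quoted in this article.
plans = {
    "Basic": {"price_usd": 99, "prompts": 100, "analyses": 9000},
    "Pro": {"price_usd": 199, "prompts": 250, "analyses": 22500},
}


def plan_budget(plan):
    """Monthly analyses and cost per tracked prompt for one plan."""
    return {
        "analyses_per_prompt": plan["analyses"] / plan["prompts"],
        "usd_per_prompt": round(plan["price_usd"] / plan["prompts"], 2),
    }


budgets = {name: plan_budget(plan) for name, plan in plans.items()}
print(budgets["Basic"])  # {'analyses_per_prompt': 90.0, 'usd_per_prompt': 0.99}
print(budgets["Pro"])    # {'analyses_per_prompt': 90.0, 'usd_per_prompt': 0.8}
```

Both tiers work out to 90 analyses per tracked prompt per month, or about three per prompt per day if spread evenly over a 30-day month. That daily allowance then has to be shared across every engine you track.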

It's worth noting that Topify is built by a team with serious technical depth: founding researchers from OpenAI and practitioners who've scaled organic traffic from zero to 1M. That background shows in the platform's approach to data accuracy, particularly around groundedness tracking and how it handles the volatility of AI responses changing up to 70% of the time across sessions.

Conclusion

AI brand monitoring tracking isn't a future concern. It's already the mechanism through which millions of purchase decisions get made every day, and most brand teams are flying completely blind.

The shift from keyword rankings to prompt visibility is permanent. Users are increasingly relying on AI assistants to shortcut the research process, and brands that aren't actively measuring and optimizing their presence in those answers are ceding ground to competitors who are.

Start with a baseline audit. Fix technical access. Build citation coverage. Then deploy a platform like Topify to make the measurement continuous, not periodic. The brands that win AI visibility in 2026 won't be the ones with the biggest ad budgets. They'll be the ones who started tracking this earlier than everyone else.

FAQ

What is AI brand monitoring tracking? AI brand monitoring tracking is the practice of measuring how large language models (LLMs) such as ChatGPT, Gemini, and Perplexity represent, mention, and recommend your brand in response to user prompts. It differs from traditional brand monitoring in that it tracks synthesized AI responses rather than indexed web mentions.

How does AI brand monitoring tracking work? It involves submitting a set of predefined prompts to multiple AI platforms, capturing the generated responses, and analyzing them for brand presence, sentiment, position, and citation sources. Specialized platforms automate this process and normalize the data into trackable metrics.

How do I measure AI brand monitoring tracking? The five core metrics are: Visibility Rate (how often your brand appears), Sentiment Score (how the AI characterizes your brand), Position (where you appear in ranked responses), Citation Share (which domains AI cites to validate your mention), and AI Search Volume (how frequently users are querying AI about your category).

What are common mistakes in AI brand monitoring tracking? The most frequent errors include ignoring LLM training cutoffs, publishing low-fact-density content that AI deprioritizes, monitoring from a single centralized server location, and over-relying on thin AI-generated content that lacks original information.

How much does AI brand monitoring tracking cost? Pricing ranges from around $29/mo for basic visibility pulse checks (Otterly AI) to $99–$199/mo for full-stack platforms like Topify, up to $499/mo+ for enterprise solutions with compliance features. The right tier depends on the number of brands, prompts, and platforms you need to track.