Here's a scenario that's becoming routine for enterprise marketing teams this year. You hold the number-one organic ranking for your primary industry keyword. Traffic is flat. Leads are down. And a competitor with a fraction of your domain authority keeps showing up in ChatGPT and Perplexity whenever a buyer asks for a recommendation.

This isn't a fluke. It's a structural shift. And it's happening faster than most teams have adjusted for.

The Great Decoupling: AI Search Visibility No Longer Follows Your Rankings

Traditional SEO operated on one core assumption: rank high, get clicks. That assumption is breaking down.

In 2025, approximately 60% of Google searches ended without a single click. On mobile, that figure climbed to 77%. AI Overviews now reach 2 billion monthly users, yet only 8% of those users click through to the cited links beneath them. Your site can hold position one and still be commercially invisible.

That's the gap most brands still haven't measured.

The mechanism behind this shift is what researchers call the "Great Decoupling": a structural divergence between where you rank and where AI sends qualified traffic. Google's September 2025 removal of the "num=100" parameter, which left standard result pages capped at ten links per request, accelerated this further. A reported 77% of monitored sites saw apparent visibility losses, because SEO tools lost the ability to pull rankings beyond the first page in a single request.

The strategic implication is counterintuitive. AI search traffic, while lower in raw session volume, converts at up to 4.4 times the rate of traditional organic traffic. The AI assistant pre-qualifies the user before sending them to you. At that rate, a single AI-referred visitor can be worth roughly four organic clicks from a generic SERP.

| Search Environment | Primary Metric | Traffic Mechanism | Conversion Intent |
|---|---|---|---|
| Traditional SERP | Ranking Position (1-10) | Direct Click-Through | Low to Medium |
| AI Answer Engine | Citation Frequency Rate | High-Intent Referral | High (Qualified Lead) |
| Zero-Click Result | Impression Share | No Direct Referral | Awareness Only |
| Conversational Mode | Recommendation Share | Interface-Bound | Brand Association |

The brands that understand this table are already rebuilding their strategies around it.

What AI Search Optimization Actually Means (It's Not Just GEO)

There's real confusion in the market right now between GEO, AEO, AI SEO, and AI search optimization. These terms are often used interchangeably, but they point to different layers of the same challenge.

Generative Engine Optimization (GEO) focuses on being cited in AI-generated answers. Answer Engine Optimization (AEO) focuses on answering specific questions accurately. AI search optimization, as a broader practice, covers the full pipeline: being discovered, accurately represented, and recommended across ChatGPT, Gemini, Perplexity, DeepSeek, and the growing ecosystem of vertical AI agents.

The core technical framework underneath all of this is RAG, Retrieval-Augmented Generation. When a user asks ChatGPT a question, the model queries a live web index, retrieves candidate documents, re-ranks them for information quality, and synthesizes a response. Your goal is to be selected during that re-ranking phase.

| RAG Pipeline Stage | Optimization Objective | Key Signal |
|---|---|---|
| Intent Extraction | Conceptual Alignment | Semantic context and entity tagging |
| Document Retrieval | Vector Similarity | High-density topical clusters |
| Re-ranking | Information Gain | Unique data points and expert views |
| Synthesis | Structural Confidence | BLUF (Bottom Line Up Front) formatting |

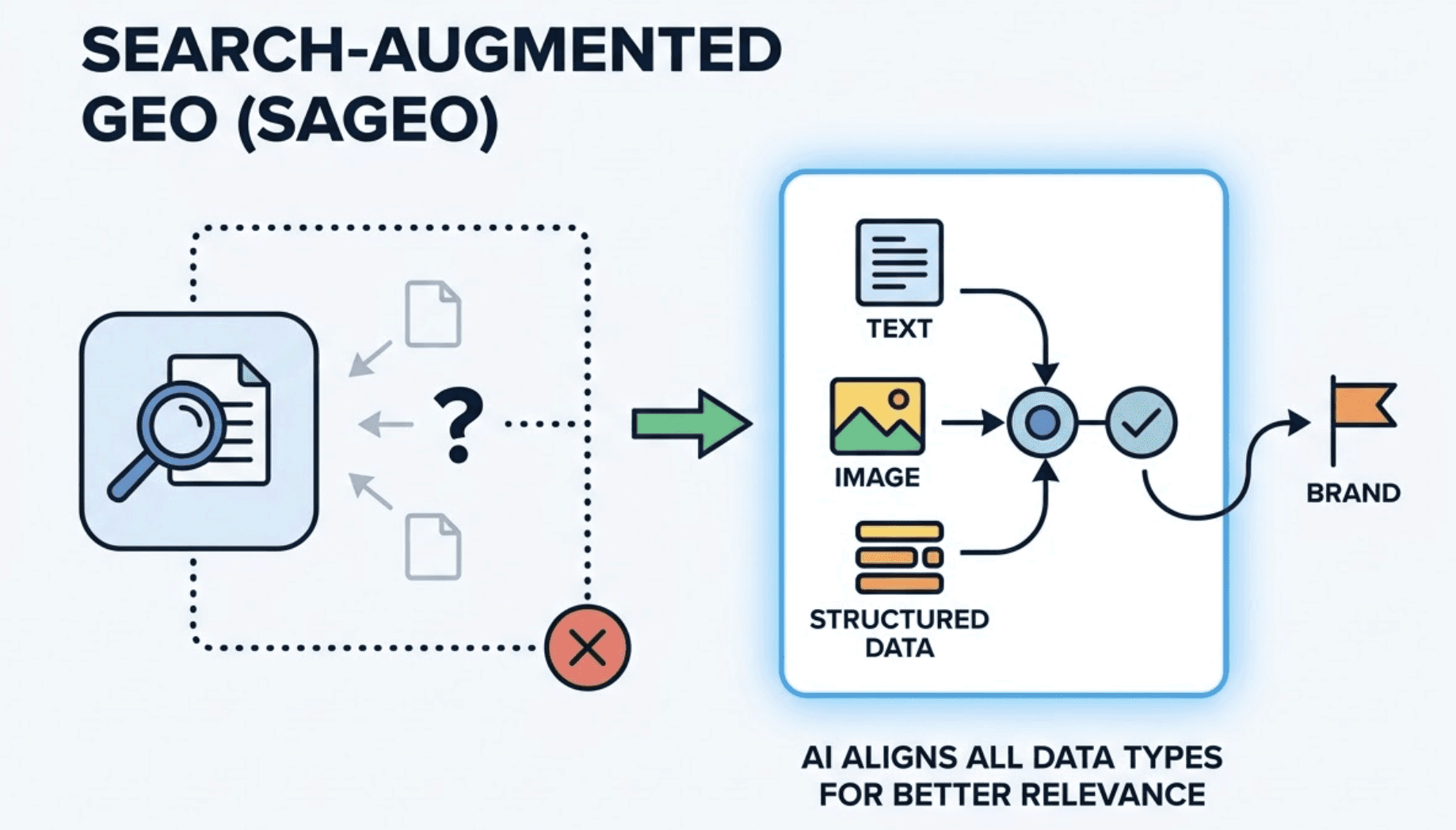
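To make the retrieve-then-re-rank flow concrete, here is a toy sketch. It is not how any production answer engine works: bag-of-words cosine similarity stands in for vector retrieval, and a simple count of numeric tokens stands in for an "information gain" signal. The function names, the sample documents, and the scoring rules are all assumptions made for illustration.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase word and number tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query, docs, k=2):
    """Stage 1: keep the k documents most similar to the query."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(d))), d) for d in docs]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in scored[:k] if score > 0]


def rerank(candidates):
    """Stage 2: prefer candidates with more numeric data points (a crude proxy)."""
    return sorted(
        candidates,
        key=lambda d: len(re.findall(r"\d+(?:\.\d+)?", d)),
        reverse=True,
    )


docs = [
    "Our project management tool is loved by teams everywhere.",
    "This project management tool cut onboarding time by 32% across 140 teams.",
    "A recipe for sourdough bread with a long ferment.",
]
candidates = retrieve("best project management tool", docs)
ranked = rerank(candidates)
print(ranked[0])
```

In this toy setup, both product pages survive retrieval, but the one carrying specific figures moves to the top at the re-ranking step, which is the stage the section above tells you to optimize for.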

A significant evolution in 2026 is the rise of Search-Augmented GEO (SAGEO), which addresses why specific documents fail to be cited even when the brand is well-known. Even visual-heavy platforms like Pinterest are adopting reverse search designs, using Vision-Language Models to align image data with synthesized answers. AI search intelligence now spans text, image, and structured data in ways that traditional SEO tools simply weren't built to track.

The bottom line: if your AI SEO strategy is just "republish your best-ranking blog posts in FAQ format," you're solving for the wrong layer.

Why AI Keeps Getting Your Brand Wrong: The Hallucination Risk Most Teams Ignore

Approximately 35% of brands report that inaccurate AI responses have damaged their public image in 2026. This is not an edge case.

Hallucinations occur when an AI encounters conflicting or insufficient information about a brand and generates a "plausible" answer rather than admitting a knowledge gap. Common errors include misattributed executive leadership, incorrect founding histories, phantom product features, and the promotion of discontinued pricing. In the legal industry, filings have included LLM-generated citations to court cases that do not exist. In Australia, a major consultancy partially refunded its fee to the government after its report was found to contain fabricated academic citations.

For brands, the consequences tend to be subtler but equally damaging.

If an AI quotes a higher price for your service than what you charge, you appear expensive or deceptive. If it attributes a competitor's features to your platform, you create false expectations. These errors compound over time because LLMs rely on community-driven sources like Reddit and Quora to understand real-world sentiment. If the prevailing narrative on those platforms is outdated or inaccurate, the model treats it as established fact.

| Risk Factor | Mechanism of Error | Brand Consequence |
|---|---|---|
| Data Voids | Lack of authoritative facts on core topics | AI fills gaps with plausible fabrications |
| Entity Noise | Inconsistent data across web profiles | Confusion with similarly named entities |
| Outdated Sources | Reliance on cached, historical training data | Promotion of discontinued products or pricing |
| Source Conflict | Contradictory claims in third-party reviews | Erosion of trust and authority signals |

This is where hallucination detection becomes a non-negotiable capability. Topify includes structured hallucination detection as part of its AI search intelligence suite, flagging when AI platforms are generating inaccurate representations of your brand across ChatGPT, Gemini, Perplexity, and others. It also uses vector-based semantic drift detection to identify when an AI's understanding of your brand begins to deviate from your intended positioning before the damage compounds.

Most brands discover hallucination problems reactively, after a sales rep gets a question they can't explain or a support ticket references a product feature that doesn't exist. Detection needs to be proactive.
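A proactive check can start very small. The sketch below compares one AI answer against a verified fact set and reports only the fields where the answer states something different. It is an illustration under stated assumptions, not a description of how Topify or any other platform implements detection: the brand ("Acme Analytics"), the facts, the regular expressions, and the function name are all hypothetical, and real answers would need far more robust extraction.

```python
import re

# Hypothetical verified facts for a made-up brand.
VERIFIED = {"starting_price": "$49/month", "founded": "2019"}

# Hypothetical patterns that pull the claimed value out of an AI answer.
PATTERNS = {
    "starting_price": re.compile(r"\$\d+(?:\.\d{2})?/month"),
    "founded": re.compile(r"founded in (\d{4})"),
}


def find_discrepancies(answer, verified=VERIFIED, patterns=PATTERNS):
    """Return {field: {claimed, verified}} for every stated-but-wrong fact."""
    issues = {}
    for field, pattern in patterns.items():
        match = pattern.search(answer)
        if match is None:
            continue  # the answer is silent on this field; nothing to flag
        claimed = match.group(1) if pattern.groups else match.group(0)
        if claimed != verified[field]:
            issues[field] = {"claimed": claimed, "verified": verified[field]}
    return issues


answer = "Acme Analytics was founded in 2019 and plans start at $79/month."
issues = find_discrepancies(answer)
print(issues)
```

Here the founding year matches and is ignored, while the inflated price is flagged. Running a check like this on a schedule, rather than waiting for a sales rep to report a problem, is the difference between proactive and reactive detection.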

5 Signals That Determine Your AI Brand Visibility Score

AI search visibility isn't random. It's determined by five measurable signals, and each one has a clear intervention path.

1. Entity Strength and Machine-Readable Consistency

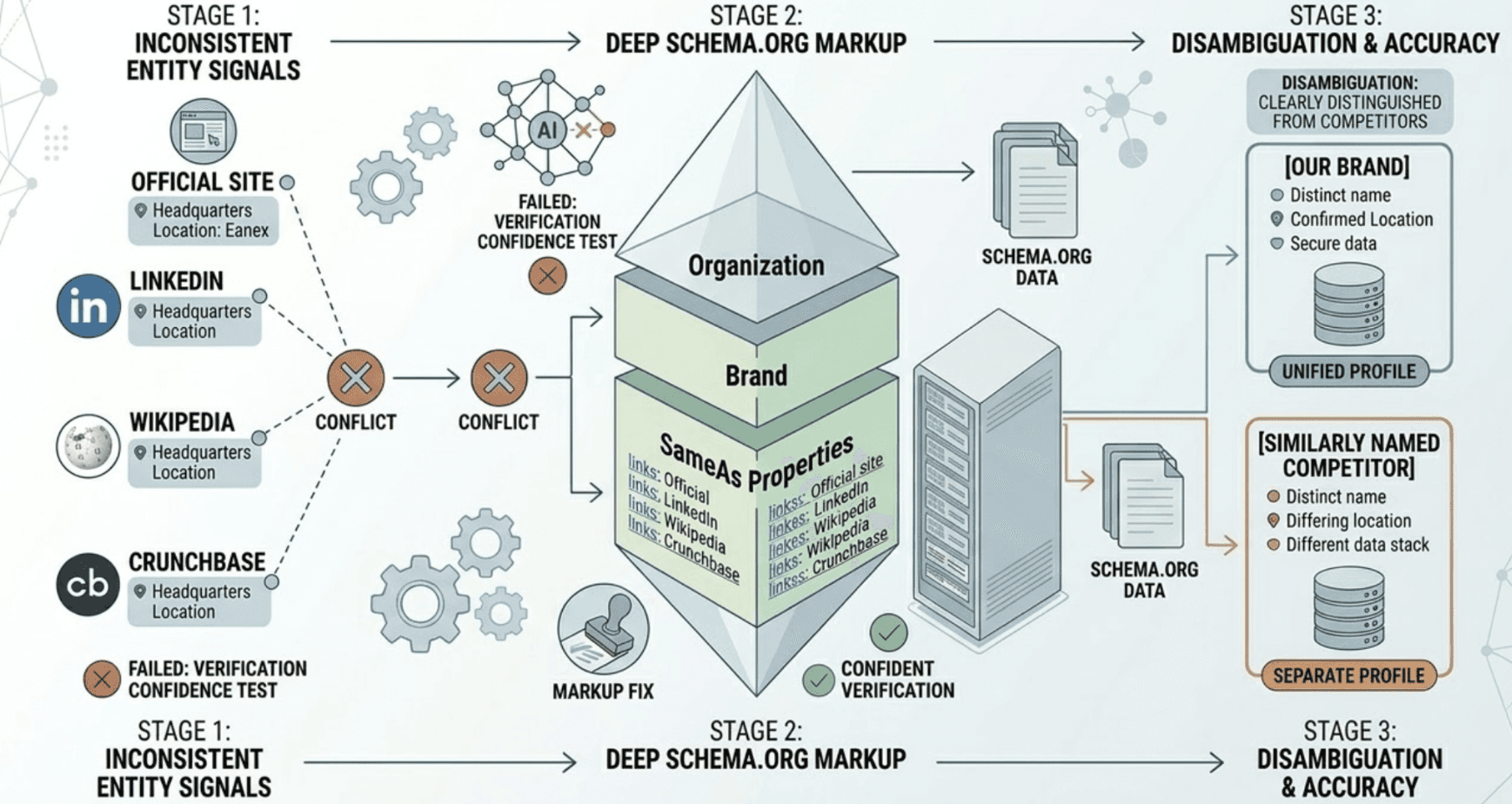

AI models navigate the web as a network of interconnected entities. If your company's legal name, headquarters location, or founding date appears inconsistently across your official site, LinkedIn, Wikipedia, and Crunchbase, you fail what researchers call the "Verification Confidence" test. Deep Schema.org markup using the Organization and Brand types, linked to your external profiles through the sameAs property, is the primary fix. Without this, models can't disambiguate you from similarly named competitors.
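Before touching markup, it helps to know which facts actually disagree. The following is a minimal sketch of a consistency audit, assuming you have already copied the key fields from each profile into a dictionary by hand or by export. The profile data, field names, and function name are hypothetical.

```python
# Hypothetical snapshot of how one brand appears on three profiles.
profiles = {
    "website": {"legal_name": "Acme Analytics, Inc.", "hq": "Austin, TX", "founded": "2019"},
    "linkedin": {"legal_name": "Acme Analytics, Inc.", "hq": "Austin, TX", "founded": "2019"},
    "crunchbase": {"legal_name": "Acme Analytics", "hq": "Austin, TX", "founded": "2018"},
}


def inconsistent_fields(profiles):
    """Return {field: {source: value}} for every field whose values differ."""
    fields = {name for record in profiles.values() for name in record}
    report = {}
    for field in sorted(fields):
        values = {source: record.get(field) for source, record in profiles.items()}
        if len(set(values.values())) > 1:
            report[field] = values
    return report


report = inconsistent_fields(profiles)
for field, values in report.items():
    print(field, values)
```

The headquarters field matches everywhere and is left out of the report, while the legal name and founding year are surfaced with the source that disagrees, which tells you exactly which profile to correct.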

2. Authority Through Third-Party Citations

In AI search, unlinked mentions in authoritative third-party environments function as the new backlink. Mentions in industry media, expert roundups, and high-authority forums carry significant weight. Research shows that sites with over 32,000 referring domains see their AI citation frequency nearly double. Broad, authoritative digital presence is a prerequisite for visibility in models like ChatGPT. It's not enough to have a clean website.

3. Structural Extractability: The BLUF Model

The "Structural Confidence" of a document refers to how easily a RAG pipeline can extract a clean answer from it. Content following the Bottom Line Up Front (BLUF) model, where the most important information appears in the first 30% of the text, is significantly more likely to be cited. Research indicates that 44.2% of AI citations come from that opening section alone. H2/H3 hierarchies, bulleted lists, and tables help the model chunk and verify specific passages without processing the entire document.
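One rough way to audit a page against the BLUF model is to test whether its key answer appears within the opening 30% of the text. The sketch below does this by word count. The 30% cutoff comes from the figure above; the function name, the sample texts, and the choice to measure position in words rather than characters or tokens are assumptions.

```python
def answer_in_opening(text, key_phrase, opening_share=0.30):
    """True if key_phrase occurs within the first opening_share of the words."""
    words = text.split()
    cutoff = max(1, int(len(words) * opening_share))
    opening = " ".join(words[:cutoff]).lower()
    return key_phrase.lower() in opening


# Same content, two orderings: answer first versus answer buried at the end.
bluf_page = "Plans start at $49 per month. " + "Background detail. " * 20
buried_page = "Background detail. " * 20 + "Plans start at $49 per month."

print(answer_in_opening(bluf_page, "$49 per month"))
print(answer_in_opening(buried_page, "$49 per month"))
```

A check like this only tells you where the answer sits, not whether it is well written, but it is a quick way to find top pages whose key fact is buried below the fold.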

4. Semantic Density and Information Gain

Generative engines reward subject depth over volume. To earn a citation, a document must add unique data points, statistics, or expert insights that aren't present in competing sources. Content with three or more verifiable data points per section is 2.5 times more likely to be cited. Thin, persuasive marketing copy doesn't get retrieved. Evidence-backed factual content does.
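A simple way to spot thin sections is to count data points per chunk. The sketch below splits text into 150-word chunks and counts the words that contain a number. Treat it as a crude proxy: a number is not necessarily a verifiable data point, and the chunk size, the regular expression, and the function name are assumptions chosen for illustration.

```python
import re

# Matches words containing figures such as 12%, 2024, 3, or 1,200.5
NUMERIC = re.compile(r"\d+(?:[.,]\d+)*%?")


def data_points_per_chunk(text, chunk_words=150):
    """Count number-bearing words in each consecutive chunk of chunk_words words."""
    words = text.split()
    chunks = [words[i:i + chunk_words] for i in range(0, len(words), chunk_words)]
    return [sum(1 for word in chunk if NUMERIC.search(word)) for chunk in chunks]


sample = "Churn fell 12% in 2024 after 3 releases. " + "filler " * 150
counts = data_points_per_chunk(sample)
print(counts)
```

Chunks that score below three are candidates for added statistics, benchmarks, or sourced figures.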

5. Technical Performance as a Gating Factor

Infrastructure performance directly affects AI citation rates. Analysis of AI-cited sites reveals that 85% pass all three Core Web Vitals. An LCP greater than 4 seconds makes a site 72% less likely to be cited. A CLS greater than 0.25 leads to a 68% decrease in citation probability. AI crawlers prioritize resource-efficient sites with clean HTML over JavaScript-heavy pages.

| Visibility Signal | Critical Benchmark | Citation Impact |
|---|---|---|
| Entity Consistency | 100% match across top 5 profiles | Required for Verification Confidence |
| Citation Frequency | 15-30% share of core queries | Establishes category leadership |
| Information Gain | 3+ data points per 150-word chunk | 2.5x higher citation likelihood |
| LCP (Performance) | < 2.5 seconds | 72% penalty if > 4.0s |
| CLS (Performance) | < 0.1 | 68% penalty if > 0.25 |
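The two performance rows can be turned into a quick gate for any page you have field data for. This sketch rates LCP and CLS against the thresholds in the table, which line up with Google's published "good" and "poor" boundaries for Core Web Vitals. The function names are hypothetical, and the measurements themselves are assumed to come from whatever performance tool you already use.

```python
def rate_metric(value, good, poor):
    """Classify a measurement against a 'good' ceiling and a 'poor' floor."""
    if value <= good:
        return "good"
    if value > poor:
        return "poor"
    return "needs improvement"


def rate_page(lcp_seconds, cls):
    """Rate one page on LCP (seconds) and CLS (unitless layout-shift score)."""
    return {
        "lcp": rate_metric(lcp_seconds, good=2.5, poor=4.0),
        "cls": rate_metric(cls, good=0.1, poor=0.25),
    }


print(rate_page(lcp_seconds=4.6, cls=0.05))
print(rate_page(lcp_seconds=3.0, cls=0.30))
```

Any page rated "poor" on either metric falls into the penalty ranges cited above and is worth fixing before you invest in rewriting its content.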

How to Build an AI Search Optimization Workflow That Actually Runs

Most teams treat AI search optimization as a one-time content audit. That's why they don't see sustained results.

Phase 1: Build Your Prompt Library and Establish Baseline AI Search Analytics

Start with 20-30 prompts that map the core stages of your buyer's journey: category discovery, product comparison, and implementation questions. Run these prompts across ChatGPT, Perplexity, Gemini, and Claude. This gives you a baseline "AI Answer Presence Rate" and "Citation Share" for each platform. You'll immediately see where competitors are receiving stronger endorsements and where your brand is absent from the conversation entirely.
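Once the prompts have been run and the answers logged, the baseline numbers are simple ratios. The sketch below assumes one plausible definition of each metric, since the terms are not formally defined here: presence rate is the share of answers that mention the brand, and citation share is the brand's domain as a fraction of all cited domains. The record layout, brand names, and domains are hypothetical.

```python
# Hypothetical log: one record per (platform, prompt) answer you reviewed.
results = [
    {"platform": "ChatGPT", "mentioned": ["Acme", "Rival"],
     "cited_domains": ["acme.example", "rival.example"]},
    {"platform": "Perplexity", "mentioned": ["Rival"],
     "cited_domains": ["rival.example"]},
    {"platform": "Gemini", "mentioned": ["Acme"],
     "cited_domains": ["review-site.example"]},
    {"platform": "Claude", "mentioned": ["Rival"],
     "cited_domains": []},
]


def presence_rate(results, brand):
    """Share of answers that mention the brand at all."""
    if not results:
        return 0.0
    return sum(1 for r in results if brand in r["mentioned"]) / len(results)


def citation_share(results, domain):
    """Share of all cited domains that point at the given domain."""
    cited = [d for r in results for d in r["cited_domains"]]
    return cited.count(domain) / len(cited) if cited else 0.0


print(presence_rate(results, "Acme"))
print(citation_share(results, "acme.example"))
```

Computing the same two numbers for each competitor, and per platform, gives you the comparison view described above.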

Phase 2: Entity Grounding

Publish a machine-readable source of truth. This means creating a /brand-facts.json dataset on your official domain using JSON-LD format, with verified details on headquarters, founders, pricing, and core product features. Audit your Google Knowledge Graph entry. Ensure your brand profile is consistent across G2, Crunchbase, LinkedIn, and major industry directories. This is the single highest-leverage step for reducing hallucination frequency.
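As a starting point, the source-of-truth file can be generated from a plain dictionary. The sketch below builds a JSON-LD document using the Schema.org Organization type and properties that exist in that vocabulary (legalName, foundingDate, founder, address, makesOffer, sameAs). Every value is a placeholder for a made-up company, and the /brand-facts.json path is this article's suggested location, not a formal standard.

```python
import json

# Placeholder facts for a hypothetical company; replace with verified values.
brand_facts = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Acme Analytics",
    "legalName": "Acme Analytics, Inc.",
    "url": "https://www.acme.example",
    "foundingDate": "2019-03-01",
    "founder": [{"@type": "Person", "name": "Jane Doe"}],
    "address": {
        "@type": "PostalAddress",
        "addressLocality": "Austin",
        "addressRegion": "TX",
        "addressCountry": "US",
    },
    "makesOffer": [
        {"@type": "Offer", "name": "Basic plan", "price": "49.00", "priceCurrency": "USD"}
    ],
    "sameAs": [
        "https://www.linkedin.com/company/acme-example",
        "https://www.crunchbase.com/organization/acme-example",
    ],
}

document = json.dumps(brand_facts, indent=2)
print(document)
```

Writing `document` to a file served at /brand-facts.json, and embedding the same object in a `<script type="application/ld+json">` tag on your homepage, keeps the two sources from drifting apart.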

Phase 3: Dark Query Research and Content Gap Analysis

A "Dark Query" is an internal sub-query generated by an AI platform to answer a user's prompt. A question like "best project management tools" may trigger dark queries for "asynchronous collaboration features" or "security compliance in task managers." The Multi-Platform Fan-Out methodology simulates these sub-queries to surface content gaps. You then create specific extractable content chunks, typically in the form of pillar pages or detailed FAQs, that satisfy these underlying intents.
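Once you have a list of likely sub-queries, gap analysis reduces to asking which of them none of your pages cover. The sketch below uses plain keyword overlap as the coverage test. It is deliberately simplistic: real fan-out queries would have to be observed or simulated, and a production system would likely use embeddings rather than shared words. The page contents, the 0.5 threshold, and the function names are assumptions.

```python
import re


def tokens(text):
    """Set of lowercase word and number tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def content_gaps(sub_queries, pages, min_overlap=0.5):
    """Return sub-queries whose best page covers less than min_overlap of their words."""
    gaps = []
    for query in sub_queries:
        q = tokens(query)
        if not q:
            continue  # nothing to match against
        best = max(
            (len(q & tokens(body)) / len(q) for body in pages.values()),
            default=0.0,
        )
        if best < min_overlap:
            gaps.append(query)
    return gaps


sub_queries = [
    "asynchronous collaboration features",
    "security compliance in task managers",
]
pages = {
    "/features": "Our asynchronous collaboration features include threaded comments and shared boards.",
    "/pricing": "Plans and pricing for teams.",
}
gaps = content_gaps(sub_queries, pages)
print(gaps)
```

Each query returned in `gaps` is a candidate for a new extractable content chunk.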

Phase 4: Ongoing Monitoring and Semantic Drift Detection

AI responses are non-deterministic. They shift as models update and new data enters the index. At minimum, a monthly monitoring cadence is required to track sentiment polarity, mention quality, and citation share across platforms. This is where Topify's AI search analytics provides a structural advantage: it runs continuous prompt monitoring across seven key metrics (visibility rate, sentiment, position, AI search volume, mentions, intent alignment, and conversion visibility rate, or CVR) and automatically surfaces performance shifts that require action.
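Drift detection can be pictured as measuring the distance between your intended positioning and what an AI currently says about you. The sketch below uses cosine distance over word counts as a stand-in for the embedding vectors a real system would use. It does not describe Topify's implementation. The positioning statements and function names are hypothetical, and any alert threshold would need to be tuned on your own data.

```python
import math
import re
from collections import Counter


def vectorize(text):
    """Word-count vector; a real system would use an embedding model instead."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def drift(baseline, current):
    """0.0 means identical wording; values near 1.0 mean almost nothing in common."""
    return 1.0 - cosine(vectorize(baseline), vectorize(current))


baseline = "Acme is an analytics platform for enterprise marketing teams"
on_message = "Acme is an analytics platform built for enterprise marketing teams"
off_message = "Acme is a cheap spreadsheet plugin for freelancers"

print(round(drift(baseline, on_message), 3))
print(round(drift(baseline, off_message), 3))
```

Logging this score for the same prompts each month gives you a trend line, so a sudden jump flags a change in how a model describes you before it shows up in sales conversations.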

| Workflow Stage | Primary Action | Documentation Needed |
|---|---|---|
| Audit | Run 30 core prompts across 4 platforms | AI Visibility Baseline Report |
| Grounding | Implement /brand-facts.json and deep schema | Entity Verification Log |
| Strategy | Fan-out sub-queries to find Dark Queries | Implementation Roadmap |
| Optimization | Rewrite opening 30% of top pages for BLUF | Content Architecture Guidelines |
| Monitoring | Track sentiment and citation monthly | Performance Dashboard |

What a Proper AI Visibility Platform Should Give You

Not all tools in this category deliver the same depth. Here's what separates a professional-grade AI visibility platform from a basic prompt scraper.

A robust platform should provide Agent Analytics: the ability to identify which AI crawlers are visiting your site, what content they're prioritizing, and how that maps to your citation performance. It should offer Model Consensus Scoring, which tells you whether different LLMs agree on the core facts about your brand. If ChatGPT represents you positively while Gemini flags a concern, the platform must surface that discrepancy before it becomes a brand issue.
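One way to think about consensus scoring is as a majority vote per fact. The sketch below assumes you have already extracted what each model claims about a handful of fields, then reports the most common value and the share of models that agree with it. This is an illustrative definition only, since no formula is given here. The model outputs and function name are hypothetical.

```python
from collections import Counter


def consensus_score(claims_by_model):
    """Return {field: (majority_value, agreement_share)} across models."""
    fields = {name for claims in claims_by_model.values() for name in claims}
    scores = {}
    for field in sorted(fields):
        values = [claims[field] for claims in claims_by_model.values() if field in claims]
        value, count = Counter(values).most_common(1)[0]
        scores[field] = (value, count / len(values))
    return scores


# Hypothetical facts extracted from three models' answers about one brand.
claims_by_model = {
    "ChatGPT": {"founded": "2019", "hq": "Austin"},
    "Gemini": {"founded": "2019", "hq": "Austin"},
    "Perplexity": {"founded": "2018", "hq": "Austin"},
}
scores = consensus_score(claims_by_model)
for field, (value, share) in scores.items():
    print(field, value, round(share, 2))
```

Any field with an agreement share below 1.0 points to a model that has picked up a conflicting source, which is the discrepancy a platform should surface early.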

It should bridge the gap between data and action.

Most tools stop at reporting. Topify takes a different approach with its One-Click Agent Execution model: you define your goals in plain language, review the proposed GEO strategy, and deploy with a single click. The platform handles the execution, covering content recommendations, source gap analysis, and competitive positioning, without requiring manual workflows at every step. It's designed for marketing teams that need to see results, not just dashboards.

Topify also covers the widest platform footprint in its category: ChatGPT, Gemini, Perplexity, DeepSeek, Doubao, Qwen, and others. It is built on a GEO algorithm developed by founding researchers from OpenAI and champion Google SEO practitioners. The Basic plan starts at $99/month with a 30-day trial and covers 100 tracked prompts and 9,000 AI answer analyses.

For teams managing multiple clients or brands, the Pro plan at $199/month scales to 250 prompts and 10 seats, making it a practical option for agencies building GEO services into their offering.

Conclusion

AI search optimization in 2026 isn't a future-proofing exercise. It's already determining which brands get found during high-intent research moments and which ones don't. SEO rankings still matter for navigational queries. They've become a secondary signal for everything else.

The brands that will hold market position in the next few years are those treating AI agents as their most important "users": optimizing for machine-readable structure, factual accuracy, and citation authority rather than keyword density and link volume.

The workflows exist. The data benchmarks are clear. The platforms are built for this. The only variable left is whether your team starts the audit this month or six months from now.

If you're ready to see where your brand stands in AI search today, Topify offers a 30-day trial on its Basic plan with no commitment required.

FAQ

What's the difference between GEO and AI search optimization? GEO (Generative Engine Optimization) specifically targets being cited in AI-generated answers. AI search optimization is a broader practice that also includes entity grounding, hallucination detection, cross-platform AI search analytics, and AI search visibility scoring. GEO is one component of a larger AI search optimization strategy.

What are the most important signals for AI brand visibility? The five core signals are entity consistency, third-party citation authority, structural extractability (BLUF formatting), semantic information density, and technical performance (Core Web Vitals). Poor performance on any one of these can significantly reduce your AI citation rate, even if your content quality is strong.

How does hallucination detection for AI search optimization (GEO) work in practice? Hallucination detection involves running a structured set of prompts about your brand across major AI platforms and comparing the AI's outputs against a verified brand-facts dataset. Discrepancies, including wrong pricing, outdated product features, or incorrect company details, are flagged for correction. The correction process typically involves publishing authoritative source-of-truth content and reinforcing entity signals through Schema.org markup. Platforms like Topify automate this monitoring cycle on an ongoing basis.

How often should I monitor my AI search visibility? Monthly monitoring is the baseline for most brands. For brands in fast-moving competitive categories, or those who have recently made major changes to pricing, products, or leadership, a weekly cadence is more appropriate. AI responses are non-deterministic and can shift as models update, making continuous monitoring more valuable than point-in-time audits.

Does technical performance really affect AI citations? Yes, directly. Research shows that 85% of AI-cited sites pass all three Core Web Vitals. Sites with an LCP greater than 4 seconds are 72% less likely to be cited. A CLS greater than 0.25 reduces citation probability by 68%. AI crawlers prioritize fast, clean, resource-efficient sites.