Your domain authority is solid. Your keyword rankings are holding. Then someone on your team runs a category-level query through ChatGPT — "best [your product type]" — and your brand isn't in the answer. A competitor you've outranked on Google for two years gets the recommendation instead.

That gap isn't a content quality problem. Traditional SEO metrics can't explain it because they weren't designed to measure AI search visibility. And the longer that blind spot goes unaddressed, the more decision-stage buyers you're losing to brands that aren't necessarily better — just more visible to the models doing the recommending.

Your SEO Score Doesn't Tell You If ChatGPT Knows You Exist

In mid-2025, roughly 76% of pages ranked in Google's top 10 also appeared in AI Overviews. That overlap has since dropped to between 17% and 38% following major model updates in early 2026. Being #1 on Google no longer has much to do with whether an AI recommends you.

The underlying reason is what researchers call "query fan-out." When a user asks an AI a question, the model silently expands it into a cluster of related sub-queries. It then retrieves content that addresses the full conceptual picture, not just the surface keyword. Today, 36.7% of URLs cited in AI Overviews rank outside the top 100 in traditional organic search. Your on-page keyword optimization has almost no bearing on this process.

This is the structural disconnect that defines AI SEO in 2026.

Traditional metrics measure your authority in Google's retrieval system. AI search runs a different system entirely — one built on semantic relevance, training data saturation, and citation reliability. And right now, 62% of brands are technically invisible to generative AI models, even while actively investing in traditional SEO.

What AI Search Optimization Actually Measures

AI search optimization — also called Generative Engine Optimization (GEO) — isn't a variation of SEO. It's a different measurement discipline that tracks how AI models perceive, describe, and recommend a brand.

The core framework covers seven metrics. The table below maps five traditional SEO metrics to their AI search counterparts:

| Traditional SEO Metric | AI Search Counterpart | Key Difference |
|---|---|---|
| Keyword Ranking | Position (Linguistic Order) | AI ranks by recommendation sequence, not keyword match |
| Organic Traffic | Volume (Mention Frequency) | AI answers often produce zero clicks; mentions still drive brand decisions |
| Domain Authority | Citation Velocity | AI rewards frequency of mentions across trust hubs (news, wikis, industry sources) |
| Brand Search Volume | Mentions | Measures how often AI proactively names your brand |
| Keyword Density | Semantic Context Depth | Models favor content answering the full question tree, not repeated terms |

Two metrics stand out as especially high-leverage for AI search analytics. Sentiment Score tracks whether AI describes your brand favorably, neutrally, or with caveats. This matters more than most teams expect: 68% of Fortune 500 companies now use AI sentiment tools specifically to catch cases where models quote wrong prices or fabricate feature claims. CVR (Conversion Rate) quantifies the business case directly: referral traffic from ChatGPT converts at 14.2%, compared to 2.8% for traditional organic search.
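To make the conversion gap concrete, here is a small worked calculation using the two rates quoted above. The traffic figure of 1,000 visits per channel is a hypothetical chosen only for illustration, and the helper name is not taken from any tool.

```python
# Conversion rates quoted in the article (percent).
CHATGPT_CVR = 14.2
ORGANIC_CVR = 2.8


def expected_conversions(visits: int, cvr_percent: float) -> int:
    """Expected conversions for a visit count at a given conversion rate."""
    return round(visits * cvr_percent / 100)


visits = 1000  # hypothetical: same traffic volume on both channels
chatgpt = expected_conversions(visits, CHATGPT_CVR)
organic = expected_conversions(visits, ORGANIC_CVR)
ratio = round(CHATGPT_CVR / ORGANIC_CVR, 2)

print(chatgpt, organic, ratio)  # 142 28 5.07
```

At equal traffic, the quoted rates imply roughly five conversions from ChatGPT referrals for every one from organic search.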

Only 30% of brands maintain consistent AI search visibility from one model query to the next. That volatility is what makes AI search intelligence a continuous monitoring task, not a one-time audit.

The 4 Pillars of a Working AI Search Optimization Strategy

Getting cited by AI models isn't luck. It follows from a repeatable set of structural decisions. Here's the framework that defines AI brand visibility work in 2026.

Pillar 1: Prompt Intelligence

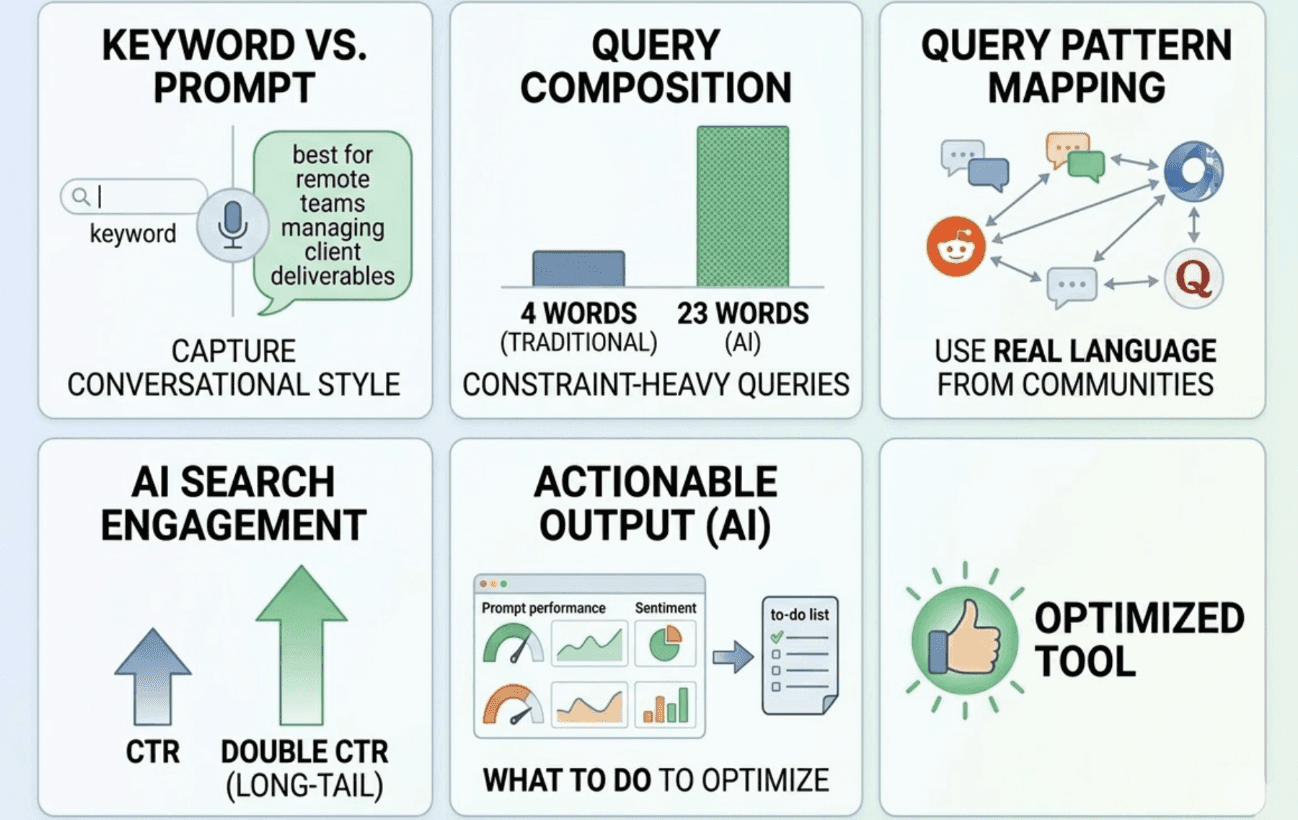

Traditional keyword tools show you what people type into Google. They don't capture how users speak to AI — constraint-heavy, conversational, typically 5+ words long. A user in 2026 doesn't search "project management software." They ask, "What project management tool is best for a remote team of 15 managing client deliverables?"

Prompt intelligence means mapping those actual query patterns using real language from communities like Reddit and Quora. You can't optimize for prompts you haven't identified — and these long-tail, constraint-heavy queries drive double the CTR of single-word terms.

Pillar 2: Source Optimization

AI models are synthesis stacks. To earn citations, content must be structured for extraction.

Three things matter most. First, lead with the answer: 44.2% of citations are pulled from the first 30% of a text, which means your opening paragraph needs to contain the core claim. Second, chunk content into standalone "fact blocks" of 60-120 words that can be pulled out of context without losing meaning. Third, add structured data: pages with three or more schema types (Organization, Product, FAQPage) see a 13% higher citation rate than those without.
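The first two checks can be roughed out in code. The sketch below is one possible way to audit a draft, not an established tool: it assumes blocks are separated by blank lines, counts words by whitespace, and measures "the first 30%" by character position, which is an assumption because the figure above does not say how the 30% is measured. All function names are hypothetical.

```python
import re


def split_blocks(text: str) -> list[str]:
    """Split a draft into blocks on blank lines (assumed block boundary)."""
    return [b.strip() for b in re.split(r"\n\s*\n", text) if b.strip()]


def audit_blocks(text: str, min_words: int = 60, max_words: int = 120) -> list[dict]:
    """Report each block's word count and whether it fits the 60-120 word range."""
    report = []
    for index, block in enumerate(split_blocks(text)):
        words = len(block.split())
        report.append({
            "index": index,
            "words": words,
            "in_range": min_words <= words <= max_words,
        })
    return report


def claim_in_opening(text: str, claim: str, opening_fraction: float = 0.30) -> bool:
    """True if the claim phrase starts within the opening fraction of the text.

    Position is measured in characters, which is an assumption of this sketch.
    """
    position = text.lower().find(claim.lower())
    return position != -1 and position < len(text) * opening_fraction
```

Running `audit_blocks` over a draft flags blocks that are too short or too long to stand alone, and `claim_in_opening` confirms the core claim appears early enough to fall inside the zone where most citations are drawn from.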

Pillar 3: Sentiment Management

Being mentioned by AI isn't always good. If a model frames your brand as "a budget option" when you're positioning premium, that framing reaches every user who asks a related question. Sentiment management means monitoring what AI actually says about your brand, catching inaccurate framing early, and publishing enough corroborating content to shift the model's representation over time.

Pillar 4: Competitive Positioning

AI models favor a small set of sources. To break into that set, track "Citation Drift" — the rate at which an AI swaps your brand out for a competitor across consecutive queries. Publishing proprietary data and unique statistics counters this directly, with research showing it improves AI visibility by 30-40%.
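Citation Drift can be estimated from a log of repeated prompt runs. The sketch below is one plausible formalization, not a standard definition: it assumes each run has already been reduced to the set of brands the AI named, and it counts a "swap" when your brand was named in one run and, in the next run, is missing while at least one competitor is present. The function name and inputs are hypothetical.

```python
from typing import Optional


def citation_drift(runs: list[set[str]], brand: str,
                   competitors: set[str]) -> Optional[float]:
    """Share of consecutive run pairs where the brand was swapped out.

    Denominator: pairs where the brand was cited in the earlier run.
    Numerator: of those, pairs where the later run drops the brand
    and cites at least one competitor instead.
    Returns None if the brand was never cited in an earlier run.
    """
    cited_before = 0
    swapped_out = 0
    for previous, current in zip(runs, runs[1:]):
        if brand in previous:
            cited_before += 1
            if brand not in current and current & competitors:
                swapped_out += 1
    if cited_before == 0:
        return None
    return swapped_out / cited_before


# Hypothetical log: five consecutive runs of the same category prompt.
runs = [{"Acme", "Beta"}, {"Beta"}, {"Acme"}, {"Acme", "Beta"}, set()]
drift = citation_drift(runs, brand="Acme", competitors={"Beta"})
```

In this example the brand is cited before three transitions and swapped for a competitor in one of them, so the drift is one third. The final run names nobody, so it does not count as a swap.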

How to Track Voice Assistant Responses as Part of Your GEO Strategy

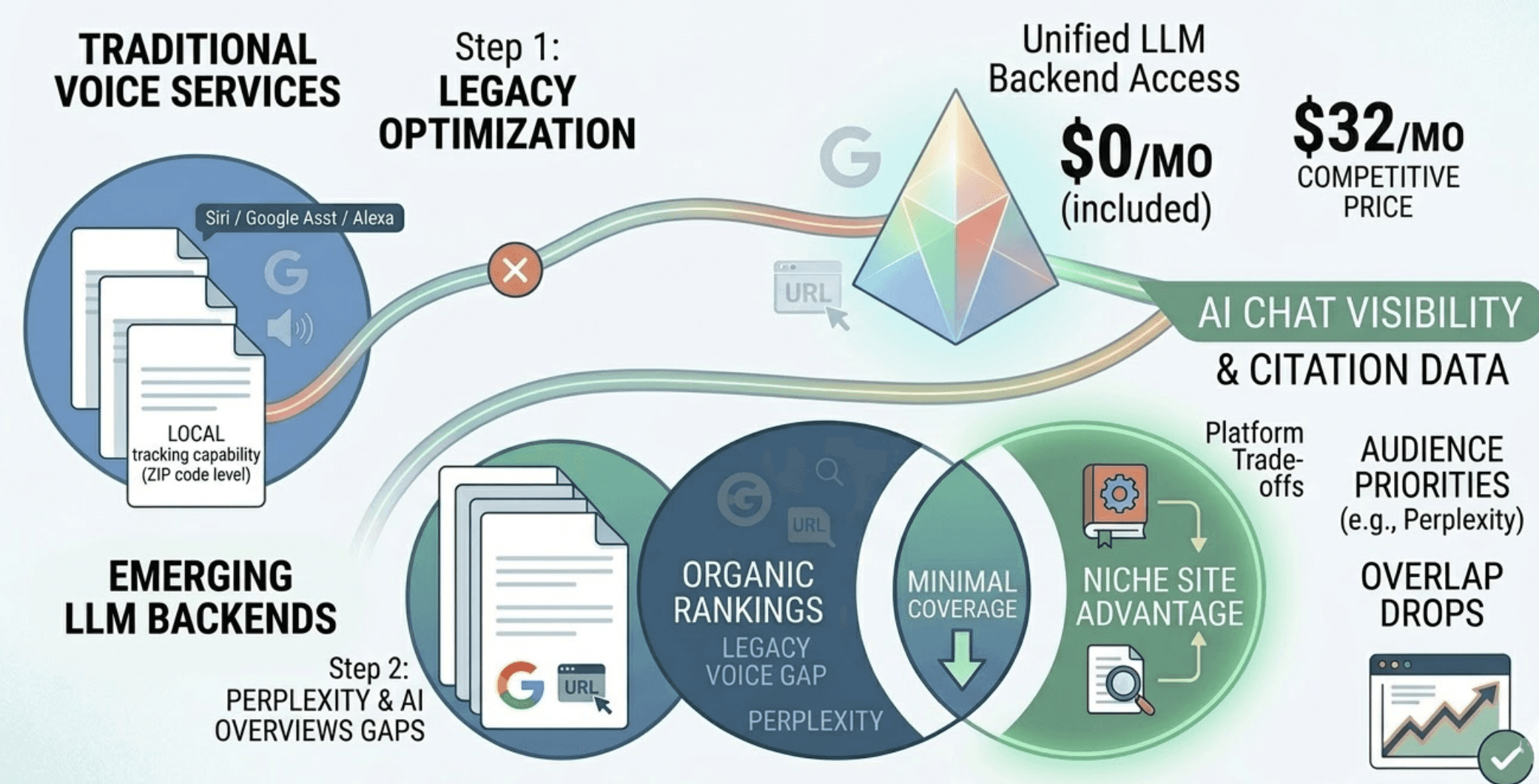

Voice assistants represent the original zero-click interface, and they're now too significant to treat as a separate workstream. There are 8.4 billion voice assistants in use globally in 2026, and the architectural shift that matters is this: Siri, Alexa, and Google Assistant increasingly pull from the same LLM backends as text-based AI search.

That convergence means your GEO strategy and your voice search strategy are now largely the same strategy.

A few platform-specific details still apply. Google Assistant sources roughly 80% of its answers from the top three organic results and featured snippets. Alexa draws from Bing and Yext. Siri relies on Apple Maps, Apple's Knowledge Graph, and Yelp. Each has different data inputs, which is why tracking voice assistant responses across platforms gives you a cleaner picture of your full AI brand visibility footprint than any single platform view.

Voice SEO/GEO has three technical requirements: Speakable Schema (marking content for audio delivery), LocalBusiness Schema with NAP (name, address, phone) data (76% of voice searches are "near me" queries, making structured local data non-negotiable), and page speed (voice search results load 52% faster than standard results, so Core Web Vitals are a floor requirement).
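The two schema requirements translate into JSON-LD markup. The sketch below builds both objects in Python using schema.org's `LocalBusiness`, `PostalAddress`, and `SpeakableSpecification` types. The business details, URL, and CSS selectors are placeholders, and the helper function names are hypothetical; adapt the fields to your own pages and validate the output before publishing.

```python
import json


def local_business_jsonld(name: str, street: str, city: str, region: str,
                          postal_code: str, country: str, phone: str) -> dict:
    """LocalBusiness markup carrying NAP (name, address, phone) data."""
    return {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
            "addressCountry": country,
        },
        "telephone": phone,
    }


def speakable_webpage_jsonld(page_name: str, url: str,
                             css_selectors: list[str]) -> dict:
    """WebPage markup pointing at the sections suited to audio delivery."""
    return {
        "@context": "https://schema.org",
        "@type": "WebPage",
        "name": page_name,
        "url": url,
        "speakable": {
            "@type": "SpeakableSpecification",
            "cssSelector": css_selectors,
        },
    }


def to_script_tag(data: dict) -> str:
    """Wrap a JSON-LD object in the script tag that goes in the page head."""
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Placeholder values for illustration only.
business = local_business_jsonld(
    "Example Coffee", "123 Main St", "Springfield", "IL", "62701", "US",
    "+1-555-0100",
)
page = speakable_webpage_jsonld(
    "Example Coffee: Hours and Location", "https://example.com/visit",
    [".summary", ".opening-hours"],
)
markup = to_script_tag(business)
```

Keeping the NAP values in one place and generating the markup from them makes it easier to keep the name, address, and phone number consistent across every page and listing.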

GEO platforms that cover voice assistant response tracking give you a materially more complete view of AI-influenced discovery. It's not a separate track. It's the same discipline extended to a different interface.

Topify's AI Search Analytics: From Data to Action in One Dashboard

Measuring AI search visibility across ChatGPT, Gemini, Perplexity, DeepSeek, and voice assistant backends simultaneously isn't a manual workflow. The data volume and prompt variability make it impractical without dedicated tooling.

Topify is an AI visibility platform built specifically for this problem. The platform tracks 100-250 prompts per brand across major AI engines and measures all seven GEO metrics — visibility, sentiment, position, volume, mentions, intent, and CVR — in a single dashboard. That combination matters because the metrics interact. A brand can have high visibility but negative sentiment, or strong citation volume but poor position, and treating those as separate problems leads to the wrong fixes.

What separates Topify's AI search analytics from simpler monitoring tools is the execution layer.

Once the platform identifies a gap — a missing FAQ, a competitor consistently outranking you in a specific prompt cluster, a schema error suppressing your citation rate — the AI agent can generate and deploy a recommended fix with a single click. No manual workflow required.

Topify also reverse-engineers competitor citations: it shows exactly which third-party domains AI platforms are using as sources when recommending your competitors. That "citation gap" analysis is often the most actionable output for content teams, because it makes the competitive disadvantage specific rather than abstract.

Pricing starts at $99/month on the Basic plan, which includes a 30-day trial, tracking across ChatGPT, Perplexity, and AI Overviews, and up to 100 prompts. Enterprise plans from $499/month add dedicated account management, custom prompt sets, and API access for teams that want to pipe visibility data into internal BI dashboards.

5 Mistakes That Silently Kill Your AI Search Visibility

Most brands struggling with AI search visibility aren't making obvious errors. They're making structural ones that compound quietly.

Monitoring Google exclusively. Google Search Console shows traffic and clicks. It doesn't show how often an AI model cites your brand without generating a click, or how you rank in Perplexity's recommendation list. You need direct AI platform monitoring to see those signals.

Over-optimizing for keyword density. Modern LLMs treat content that forces keywords into sentences as low-quality. What AI prioritizes is "Information Gain" — original data, unique perspectives, and content that answers the full question. Term repetition is not the signal.

Ignoring competitor citation frequency. Many teams track their own rankings but don't notice when a competitor starts consistently getting recommended as the category leader across AI engines. Citation Drift compounds: once an AI model associates a competitor with authority in your space, displacement becomes progressively harder.

Conflating AI Overviews with total AI search. Google's AI Overviews are one slice of the ecosystem. Millions of research-intent sessions happen daily on ChatGPT and Perplexity, where citation logic and content sourcing work differently. Optimizing only for AI Overviews misses the majority of AI-influenced decisions.

Skipping sentiment monitoring. 81% of leading market providers aren't cited by AI in response to unbranded category prompts. Of those that are cited, many appear with framing they didn't intend. Without sentiment monitoring, your AI brand visibility could be technically positive by frequency while actively costing you conversions.

Conclusion

The gap between traditional SEO performance and AI search visibility is widening. Organic click-through rates for informational queries have dropped 61% since AI Overviews rolled out at scale, while ChatGPT referral traffic converts at five times the organic average. That's not a trend worth waiting on.

The path forward is methodical: audit your current Share of Model across 20-50 category-relevant prompts, restructure your content for extraction with answer-first architecture and structured data, then monitor continuously — because AI citation patterns shift with every model update.
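The audit step can be prototyped before committing to a platform. The sketch below assumes Share of Model means the fraction of prompt responses that mention your brand at least once, which is a working definition rather than one given above. It also assumes you have already collected the AI responses as plain text, keyed by prompt. The function names and sample data are hypothetical.

```python
import re


def mentions_brand(response: str, brand: str) -> bool:
    """Case-insensitive whole-word match for the brand name.

    Word boundaries suit brand names made of letters and digits;
    names ending in punctuation would need a different pattern.
    """
    pattern = r"\b" + re.escape(brand) + r"\b"
    return re.search(pattern, response, flags=re.IGNORECASE) is not None


def share_of_model(responses: dict[str, str], brand: str) -> float:
    """Fraction of prompt responses that mention the brand at least once."""
    if not responses:
        raise ValueError("at least one prompt response is required")
    hits = sum(mentions_brand(text, brand) for text in responses.values())
    return hits / len(responses)


# Hypothetical responses to four category prompts.
responses = {
    "best tool for remote teams": "Try Acme or Beta for remote work.",
    "best tool for agencies": "Beta is the usual pick.",
    "cheapest option": "acme works well on a budget.",
    "newest option": "Acmeify launched this year.",
}
score = share_of_model(responses, "Acme")
```

Here the brand appears in two of four responses, so the score is 0.5. The whole-word match keeps "Acmeify" from counting as a mention. Rerunning the same prompt set after each model update gives a simple baseline for the continuous monitoring step.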

AI search optimization isn't replacing traditional SEO. It's the layer your current framework is missing.

FAQ

Q: What is AI search optimization and how is it different from SEO?
A: Traditional SEO focuses on ranking pages to earn clicks from Google's index. AI search optimization (GEO) focuses on ensuring a brand is cited and recommended in AI-generated answers across platforms like ChatGPT, Gemini, and Perplexity. It prioritizes semantic relevance, structured data, and citation velocity over backlink volume and keyword density.

Q: How do I track whether my brand appears in ChatGPT or Perplexity results?
A: Standard analytics tools only capture clicks. To track mentions and citations that don't result in clicks, you need an AI search analytics platform that runs prompt simulations across multiple LLMs. Topify covers ChatGPT, Gemini, Perplexity, and DeepSeek from a single dashboard, tracking both branded and unbranded category prompts.

Q: Can I use GEO platforms to track voice assistant responses?
A: Yes. GEO platforms are increasingly expanding to cover voice assistant responses from Siri, Alexa, and Google Assistant, since these assistants now draw from the same LLM and search index backends as text-based AI. Tracking voice assistant responses within your SEO/GEO workflow gives you a more complete view of AI-influenced discovery across the interfaces your audience uses.

Q: What metrics should I prioritize when starting AI search optimization?
A: Start with Share of Model (Visibility) to establish whether your brand exists in AI's candidate set for your category, and Sentiment Score to see how the model describes you. As your program matures, add Citation Attribution Rate to confirm AI is linking back to your domain as a primary source.