What “Rank Tracking” Means in Perplexity, Claude, and AI Overviews

Perplexity (RAG + citations)

Perplexity typically cites sources. Tracking here is about:

citation share (which domains/pages are cited)

presence rate (how often your brand appears)

volatility (changes driven by news and fresh content)
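To make these three metrics concrete, here is a minimal sketch of how they could be computed from sampled answers. The record layout (`text`, `cited_domains`), the function names, and the sample data are all assumptions for illustration; volatility is approximated here as one minus the Jaccard similarity between two snapshots of cited domains, which is only one possible definition.

```python
from collections import Counter

def citation_share(answers):
    """Fraction of all citations that go to each domain."""
    counts = Counter(d for a in answers for d in a["cited_domains"])
    total = sum(counts.values())
    return {d: n / total for d, n in counts.items()} if total else {}

def presence_rate(answers, brand):
    """Fraction of answers whose text mentions the brand (case-insensitive)."""
    if not answers:
        return 0.0
    hits = sum(brand.lower() in a["text"].lower() for a in answers)
    return hits / len(answers)

def volatility(domains_before, domains_after):
    """1 - Jaccard similarity between two snapshots of cited domains."""
    before, after = set(domains_before), set(domains_after)
    union = before | after
    return 1 - len(before & after) / len(union) if union else 0.0

# Hypothetical sample: three runs of the same prompt.
answers = [
    {"text": "Acme and Globex both offer this.",
     "cited_domains": ["acme.com", "reviews.example"]},
    {"text": "Globex is a common choice.",
     "cited_domains": ["globex.com", "reviews.example"]},
    {"text": "Many teams use ACME.",
     "cited_domains": ["acme.com"]},
]

shares = citation_share(answers)
rate = presence_rate(answers, "acme")
```

Plain substring matching is the simplest possible brand detector; a real tool would likely need alias handling and disambiguation.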

Claude (model-first, less citation-driven)

Claude may rely more on its training data and less on explicit citations. Tracking is about:

entity presence and context accuracy

variance (answers can shift across runs)

Google AI Overviews (trigger-based)

An AI Overview (AIO) appears only for certain query intents. Tracking is about:

trigger rate (how often an AIO shows for your tracked queries)

whether your brand is mentioned/cited
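Because an AIO does not always appear, brand visibility is best measured only over the queries where it did. The sketch below assumes each observation is a dict with two hypothetical boolean fields, `aio_shown` and `brand_cited`; how those fields get collected is outside its scope.

```python
def aio_metrics(observations):
    """Return (trigger_rate, cited_rate_when_shown) for a list of observations."""
    total = len(observations)
    shown = [o for o in observations if o["aio_shown"]]
    trigger_rate = len(shown) / total if total else 0.0
    # Condition on the AIO appearing, so a low trigger rate
    # does not masquerade as low brand visibility.
    cited_rate = (
        sum(o["brand_cited"] for o in shown) / len(shown) if shown else 0.0
    )
    return trigger_rate, cited_rate

# Hypothetical observations for four tracked queries.
observations = [
    {"aio_shown": True, "brand_cited": True},
    {"aio_shown": True, "brand_cited": False},
    {"aio_shown": False, "brand_cited": False},
    {"aio_shown": False, "brand_cited": False},
]

trigger_rate, cited_rate = aio_metrics(observations)
```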

Buying Checklist: What to Look for in Perplexity Rank Tracking Tools

1) Prompt library + query expansion

You need long-tail prompts, comparisons, and persona-specific queries, not just a few head terms.
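One simple way to build such a library is to expand a handful of templates across slot values. This is a sketch under stated assumptions: the templates, slot names, and brand names are invented for illustration and do not reflect how any particular tool works.

```python
from itertools import product

# Hypothetical templates; each {slot} is filled from the slots dict below.
TEMPLATES = [
    "best {category} for {persona}",
    "{brand} vs {competitor} for {persona}",
    "is {brand} a good {category}?",
]

def expand_prompts(templates, slots):
    """Fill every template with every combination of the slots it uses."""
    prompts = set()
    for template in templates:
        names = [n for n in slots if "{" + n + "}" in template]
        for combo in product(*(slots[n] for n in names)):
            prompts.add(template.format(**dict(zip(names, combo))))
    return sorted(prompts)

slots = {
    "category": ["rank tracker"],
    "persona": ["agencies", "startups"],
    "brand": ["Acme"],
    "competitor": ["Globex", "Initech"],
}

prompts = expand_prompts(TEMPLATES, slots)
```

With these inputs the three templates expand to seven prompts; adding one more persona or competitor grows the set multiplicatively, which is why a manual list rarely keeps up.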

2) Repeat sampling + variance smoothing

AI answers vary from run to run. A tool should sample each prompt multiple times and report aggregated metrics rather than single-run results.
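As a rough illustration of why repeat sampling matters, the sketch below runs a prompt several times and reports the presence rate with a Wilson score interval, so a result from 10 runs is not read as more precise than it is. The `ask` callable is a hypothetical stand-in for whatever client returns an answer string; no real API is implied, and the Wilson interval is my choice of smoothing, not something the tools above are known to use.

```python
import math

def sample_presence(ask, prompt, brand, runs=10):
    """Call ask(prompt) `runs` times and count answers mentioning the brand."""
    hits = sum(brand.lower() in ask(prompt).lower() for _ in range(runs))
    return hits, runs

def wilson_interval(hits, runs, z=1.96):
    """Wilson score interval for a proportion (z=1.96 is roughly 95%)."""
    if runs == 0:
        return (0.0, 0.0)
    p = hits / runs
    denom = 1 + z * z / runs
    center = (p + z * z / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z * z / (4 * runs * runs)) / denom
    return (max(0.0, center - half), min(1.0, center + half))

# 7 mentions in 10 runs: the point estimate is 0.7,
# but the interval is wide at this sample size.
low, high = wilson_interval(7, 10)
```

The practical takeaway is that week-over-week changes smaller than the interval width are probably noise.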

3) Citation source attribution (Perplexity core)

A good tool extracts:

cited URLs

domains that dominate citations

competitor overlap (who steals your citations)
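The extraction steps above can be sketched with the standard library alone. The URLs, domain names, and the `shared`/`lost` split are assumptions made for this example; the input is assumed to be a mapping from prompt to the list of cited URLs already captured from answers.

```python
from collections import Counter
from urllib.parse import urlparse

def domain_of(url):
    """Hostname without a leading 'www.' (empty string if the URL has no scheme)."""
    host = urlparse(url).hostname or ""
    return host[4:] if host.startswith("www.") else host

def top_domains(cited_urls, n=5):
    """Most frequently cited domains, as (domain, count) pairs."""
    return Counter(domain_of(u) for u in cited_urls).most_common(n)

def competitor_overlap(citations_by_prompt, own_domain, competitor_domain):
    """Prompts citing the competitor, split by whether you are cited too."""
    shared, lost = [], []
    for prompt, urls in citations_by_prompt.items():
        domains = {domain_of(u) for u in urls}
        if competitor_domain in domains:
            (shared if own_domain in domains else lost).append(prompt)
    return shared, lost

# Hypothetical captured citations.
citations = {
    "best crm": ["https://www.acme.com/a", "https://globex.com/b"],
    "crm pricing": ["https://globex.com/p", "https://reviews.example/x"],
}

all_urls = [u for urls in citations.values() for u in urls]
shared, lost = competitor_overlap(citations, "acme.com", "globex.com")
```

The `lost` list is usually the more useful output: those are the prompts where a competitor is cited and you are not.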

4) Normalized metrics

Look for share-of-voice (SoV) style metrics such as presence rate and weighted mention share, plus checks for sentiment and hallucinated claims.
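"Weighted mention share" has no single standard definition. As one possible reading, the sketch below weights each brand by 1/rank according to the order in which brands first appear in an answer, then normalizes across brands. The weighting scheme, function name, and sample texts are assumptions, not a description of any vendor's metric.

```python
def weighted_mention_share(answers, brands):
    """Share of position-weighted mentions per brand across answer texts."""
    weights = {b: 0.0 for b in brands}
    for text in answers:
        lower = text.lower()
        # Order the brands present in this answer by first occurrence.
        found = sorted(
            (lower.find(b.lower()), b) for b in brands if b.lower() in lower
        )
        for rank, (_, brand) in enumerate(found, start=1):
            weights[brand] += 1 / rank  # earlier mention earns more weight
    total = sum(weights.values())
    return {b: (w / total if total else 0.0) for b, w in weights.items()}

# Hypothetical answers from two runs.
answers = [
    "Acme leads, with Globex close behind.",
    "Globex is popular.",
]

share = weighted_mention_share(answers, ["Acme", "Globex"])
```

Here Acme earns 1.0 and Globex earns 0.5 + 1.0, so the shares come out to 0.4 and 0.6.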

5) Workflow + reporting

Alerts, dashboards, exports, and agency-ready reporting are what make monitoring actionable.

Best Perplexity Search Rank Tracking Tools (2026)

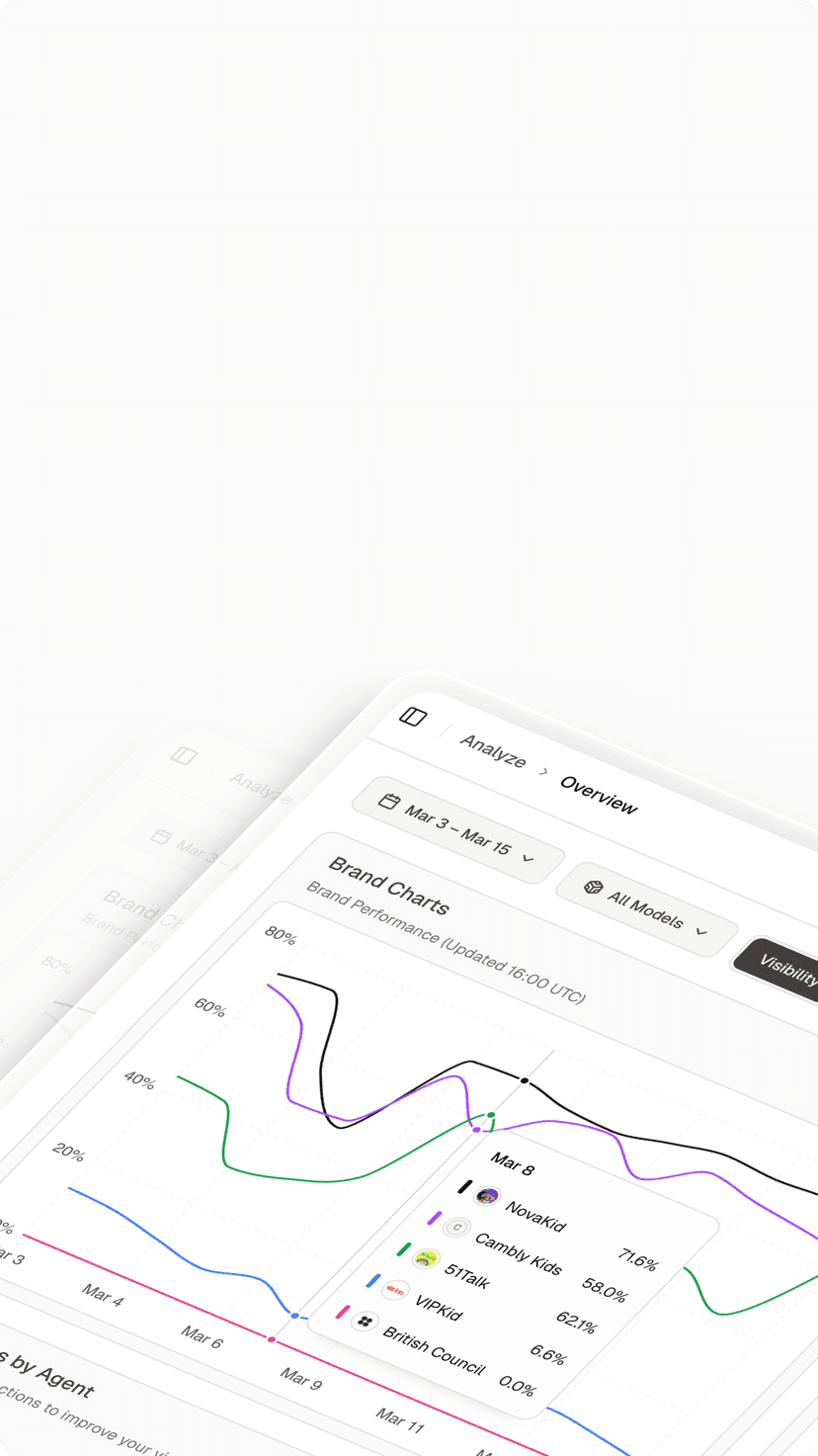

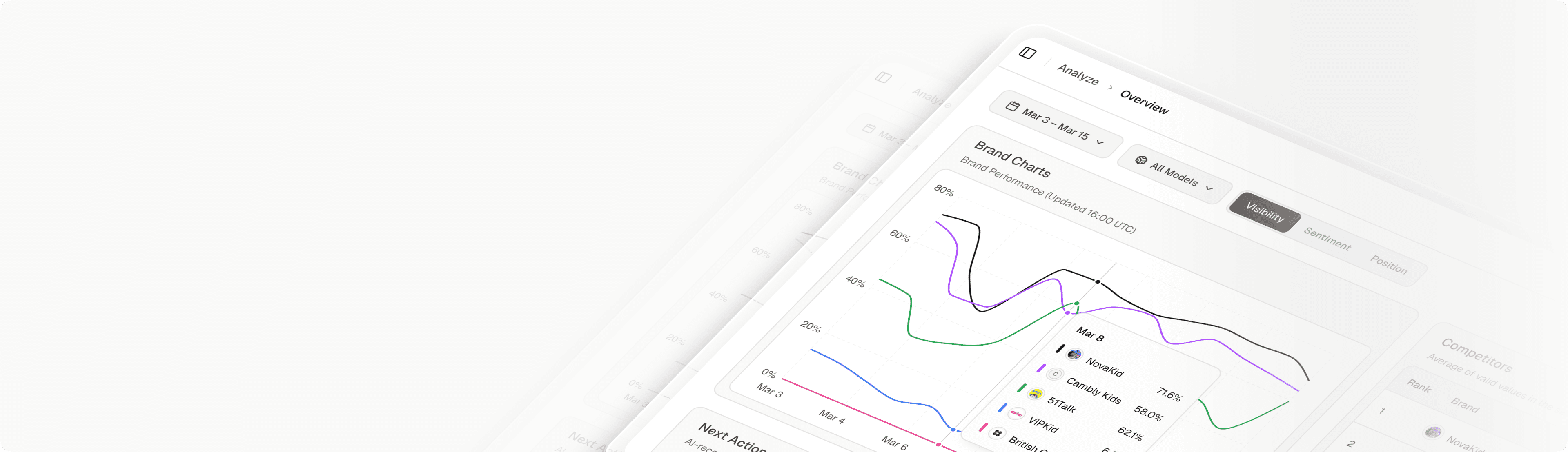

1) Topify (cross-platform AI visibility + monitoring workflows)

Best for: teams that want to track Perplexity, Claude, Gemini, and Google AIO in one system, then turn results into an optimization plan.

2) Profound (historical archive + reporting)

Best for: analytics and reporting-heavy orgs that need long-term trend lines.

3) Specialist tools (narrow scope)

Best for: teams monitoring only one ecosystem and accepting fewer workflow features.

4) DIY baseline (spreadsheets + manual checks)

Best for: small experiments. It breaks down at scale because manual checks cannot cover long-tail prompts or smooth out answer variance.

Comparison Table (Quick View)

Capability

Topify

Profound

Specialist tools

Perplexity citation extraction

Varies

Manual

Claude tracking

Varies

Varies

Google AIO trigger monitoring

Limited

Repeat sampling

Varies

Varies

SoV-style metrics

Limited

Workflow + alerts + reporting

Strong

Basic

Manual

How to Choose (Scenarios)

You need cross-platform visibility + optimization loop → choose a unified AI visibility platform.

You only care about Perplexity citations → pick the strongest citation extraction + reporting.

You’re an agency → prioritize multi-client prompt libraries and fast reporting exports.

Can I use Google Search Console for Perplexity rank tracking?

No. Search Console reports on Google Search performance, not on what Perplexity answers or cites. You need tooling that captures answer outputs and citations directly.

What is the fastest win for Perplexity visibility?

Close the citation gap: identify which domains Perplexity cites for your prompts, then earn mentions/citations there and strengthen your own pages for extraction.
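The citation gap can be expressed as a simple ranked set difference: domains that get cited for your prompts, minus your own domain and the domains that already mention you. The sketch below assumes you already have both lists; the domain names and the `domains_mentioning_you` input are hypothetical.

```python
from collections import Counter

def citation_gap(cited_domains, domains_mentioning_you, own_domain):
    """Cited domains that do not yet mention you, most-cited first."""
    counts = Counter(cited_domains)
    return [
        (domain, n)
        for domain, n in counts.most_common()
        if domain != own_domain and domain not in domains_mentioning_you
    ]

# Hypothetical: every domain cited across your tracked prompts.
cited = [
    "reviews.example", "reviews.example", "forum.example",
    "acme.com", "news.example",
]

gap = citation_gap(cited, {"news.example"}, "acme.com")
```

Sorting by citation count gives a rough priority order for outreach, since the most-cited domains are the ones shaping the most answers.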

Conclusion

Perplexity search rank tracking is less about “positions” and more about presence + citations + context accuracy across AI answers. Choose tooling that can sample consistently, attribute sources, and turn gaps into a weekly workflow.