Google AI Overviews Monitoring in 2026: Rank Tracking Tools That Support AIO and the Topify Workflow

Best Google AI Overviews Tracking Tools: What “Support” Should Mean

When you evaluate the best Google AI Overviews tracking tools, look for stability and actionability:

Can you lock a canonical prompt/query set and version it?

Can you monitor presence, share of voice (SoV), and citation share on a schedule?

Can you export raw answers and diffs for stakeholders?
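The checks above can be sketched in a few lines of Python, under stated assumptions: the `PromptSet` and `Run` structures, their field names, and the metric definitions are hypothetical and not taken from any particular tool. The sketch versions a prompt set by hashing its contents, then computes presence rate (share of runs that mention your brand), share of voice (your brand's share of all brand mentions), and citation share (share of citations pointing to domains you own) across repeated runs. "Acme" and "Globex" are placeholder brands.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass(frozen=True)
class PromptSet:
    """Canonical, locked list of queries (hypothetical structure)."""
    name: str
    queries: tuple[str, ...]

    @property
    def version(self) -> str:
        # Content hash: any edit to the query list yields a new version id,
        # so metrics are only compared across runs of the same set.
        payload = json.dumps([self.name, list(self.queries)]).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()[:12]


@dataclass
class Run:
    """One sampled AI Overview for one query (hypothetical schema)."""
    query: str
    brands: list[str] = field(default_factory=list)         # empty if no AIO was shown
    cited_domains: list[str] = field(default_factory=list)  # domains cited as sources


def visibility_metrics(runs, brand, owned_domains):
    """Presence rate, share of voice, and citation share across repeated runs."""
    total = len(runs)
    present = sum(1 for r in runs if brand in r.brands)
    all_mentions = sum(len(r.brands) for r in runs)
    our_mentions = sum(r.brands.count(brand) for r in runs)
    all_cites = sum(len(r.cited_domains) for r in runs)
    our_cites = sum(1 for r in runs for d in r.cited_domains if d in owned_domains)
    return {
        "presence_rate": present / total if total else 0.0,
        "share_of_voice": our_mentions / all_mentions if all_mentions else 0.0,
        "citation_share": our_cites / all_cites if all_cites else 0.0,
    }


core = PromptSet("core", ("best crm for startups",))
runs = [
    Run("best crm for startups", ["Acme", "Globex"], ["acme.com", "g2.com"]),
    Run("best crm for startups", ["Globex"], ["globex.com"]),
    Run("best crm for startups", [], []),  # no AI Overview on this run
    Run("best crm for startups", ["Acme"], ["acme.com", "docs.acme.com"]),
]
metrics = visibility_metrics(runs, "Acme", {"acme.com", "docs.acme.com"})
print(core.version, metrics)
```

In this sample the brand appears in two of four runs and three of five citations point to owned domains, so presence rate and SoV come out at 0.5 and citation share at 0.6.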

Google AIO Monitoring: What to Alert On

Operational alerts usually map to four event types:

Presence drop (you disappear from key AIO prompts)

Replacement (competitor becomes primary recommendation)

Citation shift (sources move away from your owned pages)

Negative framing spikes (security, pricing, compliance, reliability)
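The four events above can be expressed as threshold rules that compare a baseline window with the current window. This is a minimal sketch assuming each window already aggregates several runs per query (never a single snapshot); the `WindowSummary` fields, the `detect_events` name, and the threshold values are hypothetical and should be calibrated against your own run-to-run variance.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class WindowSummary:
    """Metrics for one query, aggregated over a multi-run window (hypothetical fields)."""
    presence_rate: float          # share of runs that mention the brand
    primary_brand: Optional[str]  # brand most often recommended first, None if no AIO
    citation_share: float         # share of citations pointing to owned pages
    negative_rate: float          # share of runs with negative framing (e.g. security, pricing)


# Illustrative thresholds only; calibrate them against observed variance.
PRESENCE_DROP = 0.20
CITATION_DROP = 0.15
NEGATIVE_SPIKE = 0.10


def detect_events(brand: str, baseline: WindowSummary, current: WindowSummary) -> List[str]:
    """Map a baseline-vs-current comparison onto the four alert event types."""
    events = []
    if baseline.presence_rate - current.presence_rate >= PRESENCE_DROP:
        events.append("presence_drop")
    # Replacement: we were the primary recommendation and a competitor now is.
    if baseline.primary_brand == brand and current.primary_brand not in (brand, None):
        events.append("replacement")
    if baseline.citation_share - current.citation_share >= CITATION_DROP:
        events.append("citation_shift")
    if current.negative_rate - baseline.negative_rate >= NEGATIVE_SPIKE:
        events.append("negative_framing_spike")
    return events


baseline = WindowSummary(0.80, "Acme", 0.50, 0.05)
current = WindowSummary(0.50, "Globex", 0.45, 0.20)
print(detect_events("Acme", baseline, current))
# ['presence_drop', 'replacement', 'negative_framing_spike']
```

Keeping each rule independent means one window can raise several events at once, which makes it easier to route each event type to a different escalation owner.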

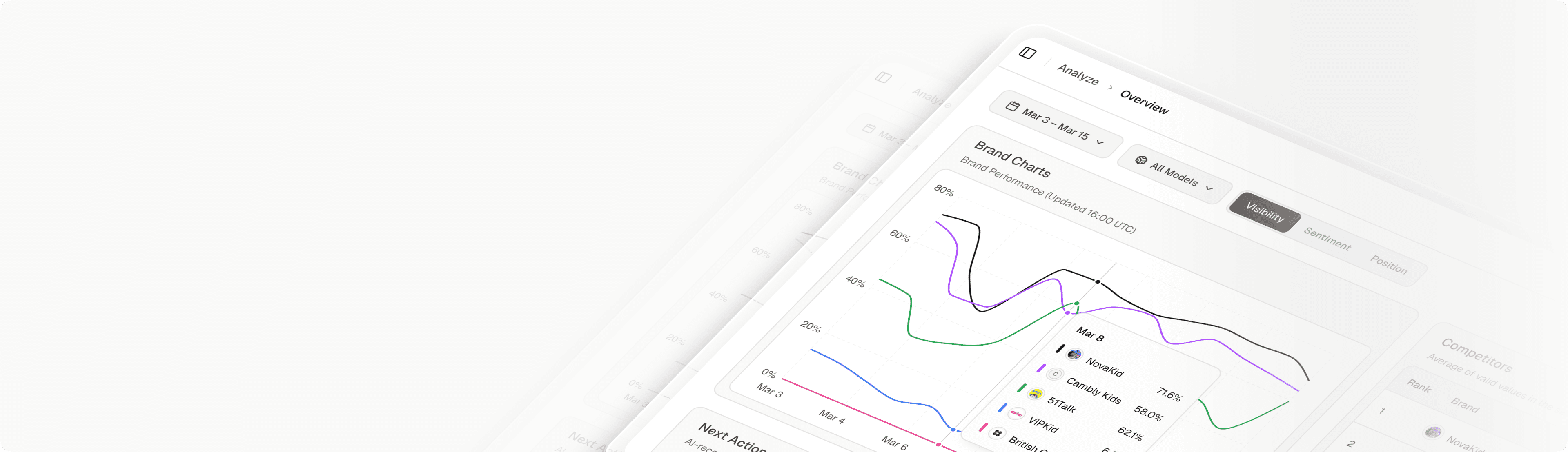

Google AIO Rank Tracking: How Topify Fits

Topify is strong when teams need cross-platform coverage and an execution loop:

Cross-engine monitoring (AIO + other answer engines)

Repeat sampling to control variance

Explainable diffs + exports

Workflow: assign fixes, ship changes, re-check for lift

Rankscale Alternatives with Google AI Overviews Tracking: How to Compare Fairly

Compare tools on:

Sampling methodology (multi-run, variance reporting)

Citation extraction quality

History + exports

Governance/workflow (who owns fixes and validation)

First 30 Days: Implementation Plan

Week 1: define critical prompts + markets; set baselines

Week 2: configure alerts and escalation owners

Week 3: run citation gap analysis; ship 3–5 fixes (pages, proof, comparisons)

Week 4: re-sample, validate lift, expand long-tail coverage
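The Week 4 "validate lift" step can be made concrete with a standard one-sided two-proportion z-test on presence rate before and after the fixes. This is one reasonable choice, not a method the plan prescribes; the `presence_lift` name and the run counts are hypothetical, and the normal approximation assumes a few dozen runs on each side.

```python
import math
from statistics import NormalDist


def presence_lift(before_hits: int, before_runs: int, after_hits: int, after_runs: int):
    """One-sided two-proportion z-test: did presence rate rise after the fixes?

    Returns (lift, p_value), where lift is the change in presence rate.
    """
    p_before = before_hits / before_runs
    p_after = after_hits / after_runs
    pooled = (before_hits + after_hits) / (before_runs + after_runs)
    se = math.sqrt(pooled * (1 - pooled) * (1 / before_runs + 1 / after_runs))
    if se == 0:
        # Brand was absent (or present) in every run on both sides: no measurable change.
        return p_after - p_before, 1.0
    z = (p_after - p_before) / se
    p_value = 1 - NormalDist().cdf(z)  # probability of a lift this large by chance alone
    return p_after - p_before, p_value


# Hypothetical baseline vs. re-sample: present in 12/40 runs before, 24/40 after.
lift, p = presence_lift(12, 40, 24, 40)
print(f"lift={lift:.2f}, one-sided p={p:.4f}")
```

A one-sided test fits because the question is whether the fixes raised presence; switch to a two-sided test if the same check should also flag declines.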

Track Google AIO: How Often Should We Sample?

Sampling frequency should match business risk.

For critical, revenue-driving prompts, sample multiple times per day to capture volatility and narrative shifts.

For broader prompt libraries, weekly sampling is usually sufficient as long as variance checks are in place.

Single-point measurements are unreliable for Google AI Overviews because the same query can return different answers and citations from one run to the next.
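To see why a single snapshot says so little, one common variance check is a Wilson score interval around the observed presence rate. This sketch assumes each run is an independent yes/no observation of presence, which is a simplification; the `wilson_interval` name is illustrative. With one hit in one run, the 95% interval spans roughly 0.2 to 1.0, while 30 hits in 50 runs narrows it to roughly 0.46 to 0.72.

```python
import math


def wilson_interval(hits: int, runs: int, z: float = 1.96):
    """Wilson score interval for a presence rate seen in `hits` of `runs` samples.

    z=1.96 gives an approximate 95% interval.
    """
    if runs == 0:
        return 0.0, 1.0  # no data: the rate could be anything
    p = hits / runs
    denom = 1 + z**2 / runs
    center = (p + z**2 / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return max(0.0, center - half), min(1.0, center + half)


for hits, runs in [(1, 1), (30, 50)]:
    lo, hi = wilson_interval(hits, runs)
    print(f"{hits}/{runs}: presence between {lo:.2f} and {hi:.2f}")
```

The Wilson interval is used here instead of the simpler p ± z·SE formula because it stays within [0, 1] and remains sensible at small run counts, which is exactly where single-snapshot tracking fails.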

Google AI Overviews Tracking Tool: Do Citations Matter?

It depends on how AI visibility drives acquisition.

If trust, authority, or lead generation depends on being cited as a source, citation tracking is essential.

If influence comes from recommendation position or comparative framing, those signals may outweigh raw citations.

The best tools allow you to monitor both and prioritize based on impact.

Best Google AI Overviews Tracking Tool: What’s the Biggest Red Flag?

Any tool that:

Only captures single snapshots

Lacks repeat sampling

Cannot export citations or historical runs

Without repeat sampling and exportable history, you can’t diagnose gaps, control variance, or prove improvement over time.