Google still dominates distribution—but the answer layer is expanding

For most marketers, the daily reality is still that Google drives the bulk of discovery. The shift is not “Google is gone.” The shift is “Google’s surface area has changed.”

AI Overviews and AI Mode expand what counts as a “search result.” Instead of 10 blue links, the top of the page increasingly becomes:

a summary

a set of citations

a short list of recommended entities

sometimes a next-step action

At the same time, conversational engines (ChatGPT-style search, Perplexity-style answers, and others) are growing as parallel discovery surfaces. Even when those tools drive relatively small referral volumes today, they can still shape perception because they sit at the decision-making layer: summarizing, comparing, and recommending.

In other words: the traffic share might look small, but the influence share can be big.

AI Overviews are a click deterrent, and the effect is measurable

It’s tempting to assume that if you still rank #1, you’re safe. But AI Overviews change the click economy.

Large-scale SERP analysis has shown that when AI Overviews appear, the clickthrough rate to the top-ranking organic result drops materially. This is intuitive: the searcher’s need is partially satisfied before they ever consider clicking.

Two consequences follow:

Ranking doesn’t equal traffic the way it used to.

Even when citations exist, they’re distributed—an answer can cite many sources, which dilutes clicks to any single publisher.

So the goal shifts from “win the click” to “win the mention (and the positioning).”

What gets you mentioned in AI answers is not the same as what got you ranked in SEO

When teams first hear about GEO, the default reaction is to scale traditional SEO inputs:

publish more content

build more links

optimize more pages

But the strongest signals associated with AI visibility are often brand and reputation signals, not pure link metrics.

Across multiple datasets analyzing AI-generated summaries, brand presence correlates strongly with:

how often the brand is mentioned across the web (linked or unlinked)

whether the brand is referenced as anchor text (an intentional, name-level endorsement)

how much branded search demand exists (people explicitly looking for you)

This matches how answer engines behave in practice. When an AI system tries to recommend a product category, it has to decide which entities are “real,” “relevant,” and “safe to suggest.” Broad mention frequency and consistent co-occurrence with category terms become powerful, machine-readable proxies for legitimacy.

A useful way to frame it:

SEO helped you rank for a query. GEO helps you become part of the category’s consensus narrative.

The strongest cross-platform AI visibility signal might be… YouTube

One of the more surprising findings across recent AI visibility research is how consistently YouTube shows up as a strong predictor of AI visibility, a frequently cited source, or both:

YouTube is heavily indexed.

YouTube content is language-rich (titles, transcripts, descriptions).

YouTube videos often contain comparative, experiential information that models treat as “evidence.”

This has a practical implication for content strategy: video is not just a distribution channel. In AI search, video becomes part of the knowledge substrate.

For brands that struggle to break into entrenched SERPs, YouTube can also serve as a “side door” into visibility: it creates more textual surfaces where your brand name appears alongside your category, problems, and differentiators.

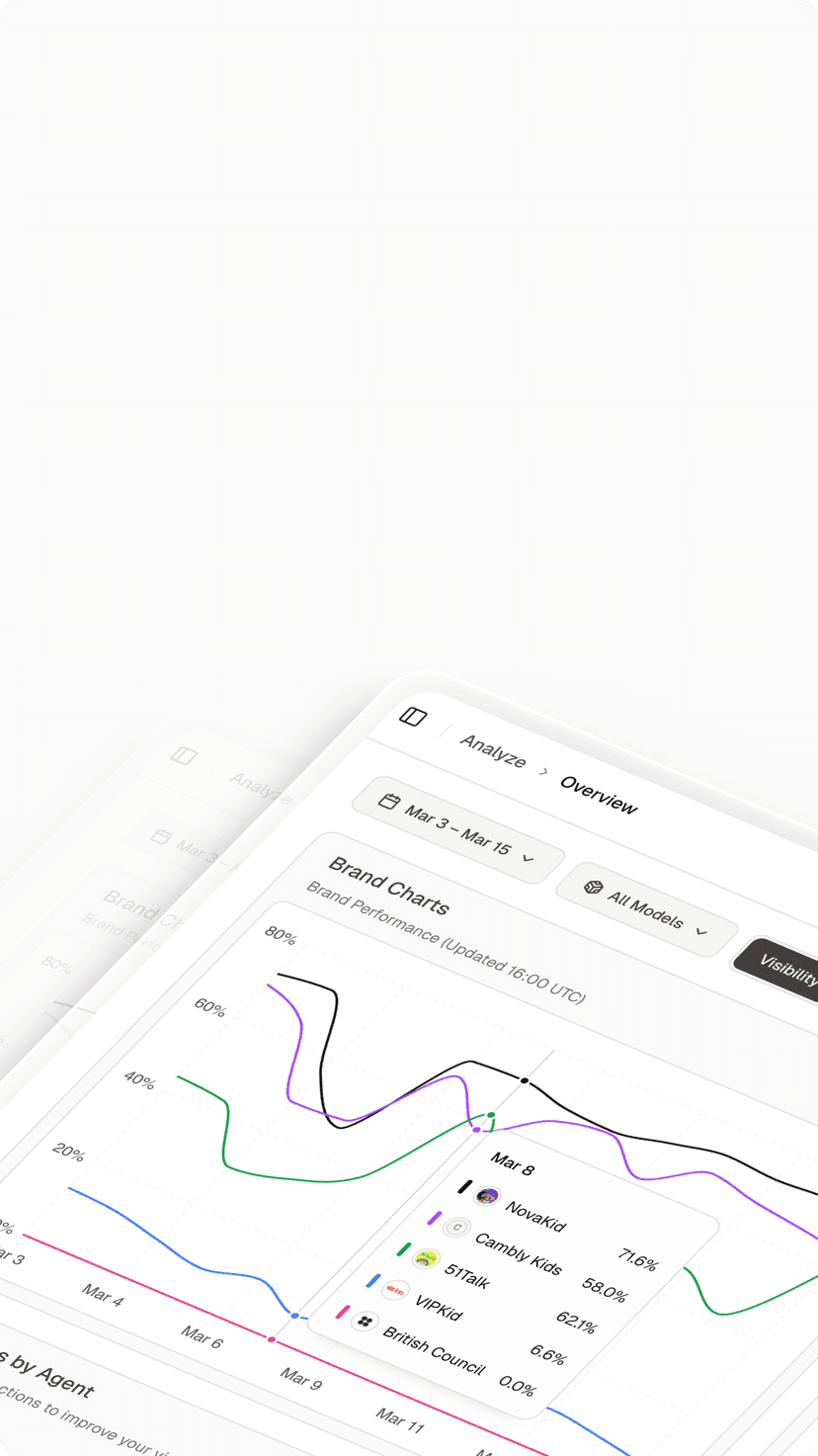

Citation behavior differs by platform

“AI visibility” is not one system. Different platforms cite different sources at different rates.

A few patterns repeatedly show up:

Some assistants lean heavily on encyclopedic or highly consolidated sources for definitions.

Some lean heavily on community sources for real-world experience and sentiment.

Some blend professional networks, Q&A sites, and social platforms.

This explains why two marketers can run the same prompt on different tools and get different “winners.” The underlying retrieval and citation preferences vary.

Practical takeaway: You should not bet everything on one publishing surface. A robust GEO strategy usually includes a mix of:

canonical pages on your own site (definitions, comparisons, guides)

third-party editorial mentions

community participation

video presence

review and analyst ecosystems

For web-connected answers, retrieval engines still matter

When an assistant decides it needs fresh information from the web, it typically retrieves results through a search index, then selects citations from the retrieved set.

That means there’s a “hidden dependency” behind many AI answers: the assistant can only cite what it can find.

In practice, this creates a layered optimization problem:

Get into the retrieval set (classic SEO / index visibility)

Be selected as a source (trust + relevance)

Be extractable (structure)

Be positioned favorably (how the answer frames you)

So GEO doesn’t replace SEO; it wraps around it.
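You can audit these four layers as a funnel, one prompt at a time. The sketch below is a minimal illustration. It assumes you log each test prompt by hand or with your own tooling and record whether your brand cleared each layer. The `PromptObservation` schema and its field names are hypothetical. The retrieval set is usually hidden, so the sketch also assumes "your page ranks in the engine's underlying search results" as a stand-in for it. The code reports a pass rate for each stage so you can see where visibility leaks.

```python
from dataclasses import dataclass

# The four layers above, in order; a prompt must clear each one to reach the next.
STAGES = ("retrieved", "cited", "extracted", "favorable")


@dataclass
class PromptObservation:
    """One logged prompt run (hypothetical schema, filled in by hand or by your own tooling)."""
    prompt: str
    retrieved: bool   # proxy: your page ranks in the engine's underlying search results
    cited: bool       # your domain appears in the answer's citations
    extracted: bool   # the answer reuses a definition, list, or table from your page
    favorable: bool   # the answer frames your brand as a recommended option


def funnel(observations: list[PromptObservation]) -> dict[str, float]:
    """Pass rate at each stage, computed only over prompts that cleared the previous stage."""
    rates: dict[str, float] = {}
    survivors = observations
    for stage in STAGES:
        passed = [o for o in survivors if getattr(o, stage)]
        rates[stage] = len(passed) / len(survivors) if survivors else 0.0
        survivors = passed
    return rates


def weakest_layer(observations: list[PromptObservation]) -> str:
    """The stage with the lowest pass rate, i.e. where visibility leaks most."""
    rates = funnel(observations)
    return min(rates, key=rates.get)


# Illustrative observations (invented, not real measurements).
sample = [
    PromptObservation("best crm for startups", True, True, False, False),
    PromptObservation("crm vs spreadsheet", True, False, False, False),
    PromptObservation("what is a crm", False, False, False, False),
    PromptObservation("crm pricing comparison", True, True, True, True),
]

print(funnel(sample))
print(weakest_layer(sample))  # prints: extracted
```

In this made-up sample, the brand is usually retrieved and often cited but rarely quoted. That pattern points at content structure rather than indexing. The strict funnel is a deliberate choice: each stage only counts prompts that cleared the previous one, so retrieval failures cannot hide a low extraction rate.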

GEO is increasingly a PR + community + product-content systems problem

If brand mentions and off-site references matter, then GEO can’t live solely inside an SEO team.

The inputs that answer engines draw on are produced across multiple functions:

PR and earned media (editorial mentions, interviews, announcements)

community and social (Q&A, discussions, clarifications, real usage narratives)

product marketing (clear positioning, comparisons, differentiated language)

customer support and education (FAQs, troubleshooting, “how it works” explanations)

creators and video pipelines (demonstrations, walkthroughs, reviews)

In other words, a strong GEO program looks less like “keyword optimization” and more like distributed reputation engineering.

Content structure matters: write for extraction, not just reading

Even if your brand is mentioned widely, answer engines still need to extract usable chunks.

A practical rule is:

Make your pages skimmable for humans and extractable for machines.

That usually means:

a short TL;DR that can be lifted directly

a crisp definition block (“X is…”) with no marketing fog

short paragraphs that each express one idea

comparison tables (real tables, not images)

checklists and step-by-step sections

FAQs written as real Q&A pairs

explicit boundaries (“works when… doesn’t work when…”)—this increases trust

This is the part many teams miss: the best GEO content often feels more like documentation than “thought leadership.” It’s opinionated where it should be, but it is also structured, literal, and quotable.

Case signal: LLMs behave like “consensus engines”

A useful mental model is that LLMs often behave like consensus engines. When asked for “best tools” or “top alternatives,” they don’t just pick the brand with the prettiest website—they try to synthesize what the internet broadly agrees on.

That naturally rewards:

clear, factual claims

stable terminology

third-party corroboration

consistent positioning across many sources

It also explains why “one perfect blog post” rarely flips the switch. Visibility tends to improve when your brand narrative is repeated across:

your own canonical pages

reputable editorial coverage

communities and Q&A

video surfaces

reviews and comparisons

A Practical GEO Playbook

In generative engine optimization (GEO), visibility is no longer earned by publishing more content. It’s earned by becoming the default reference when AI systems generate answers.

This playbook outlines how teams can move from “having content” to owning the answer layer—with concrete steps and measurable outcomes.

1) Choose your answer surfaces

Different engines cite different places. Pick a short list and optimize toward their preferences:

| Engine | Primary Use Case | What It Prefers |
|---|---|---|
| Google AI Overviews / AI Mode | High-intent search, commercial queries | Structured pages, definitions, statistics |
| ChatGPT (web vs non-web) | Research, synthesis, explanations | Repeated consensus phrasing across sources |
| | Source-first answers | Verifiable citations, original data |
| | Long-form reasoning | Neutral, analytical, non-marketing language |

Operational guidance

Start with 2–3 engines your buyers actually use

Document for each:

Whether links are cited

How often comparisons appear

Whether answers rely on statistics, definitions, or narratives
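Per-engine patterns are easier to compare if you keep these notes in a structured log rather than a free-form doc. The sketch below is a minimal illustration and assumes a hypothetical `AnswerLog` record that you fill in manually for each answer you review. The engine labels and rows are invented for illustration and do not describe how any real engine behaves.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass
class AnswerLog:
    """One reviewed answer (hypothetical schema)."""
    engine: str
    cites_links: bool      # did the answer show source links?
    has_comparison: bool   # did it compare options side by side?
    basis: str             # "statistics", "definitions", or "narratives"


def engine_profiles(logs: list[AnswerLog]) -> dict[str, dict]:
    """Summarize, per engine, how often links and comparisons appear and what answers lean on."""
    grouped: dict[str, list[AnswerLog]] = defaultdict(list)
    for log in logs:
        grouped[log.engine].append(log)

    profiles = {}
    for engine, rows in grouped.items():
        n = len(rows)
        profiles[engine] = {
            "answers": n,
            "link_rate": sum(r.cites_links for r in rows) / n,
            "comparison_rate": sum(r.has_comparison for r in rows) / n,
            # most_common(1) returns [(value, count)]; keep just the value
            "dominant_basis": Counter(r.basis for r in rows).most_common(1)[0][0],
        }
    return profiles


# Invented example rows for two unnamed engines.
logs = [
    AnswerLog("Engine A", True, False, "definitions"),
    AnswerLog("Engine A", True, True, "statistics"),
    AnswerLog("Engine A", True, False, "definitions"),
    AnswerLog("Engine B", False, True, "narratives"),
    AnswerLog("Engine B", True, True, "narratives"),
]

for engine, profile in engine_profiles(logs).items():
    print(engine, profile)
```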

2) Publish 2–3 canonical pages you want AI to quote

These pages should be written to become “default references,” not fluffy blogs:

What is GEO? (GEO vs SEO)

GEO Guidelines (how to get cited)

AI Search Statistics (only if you can maintain accuracy and update cadence)

| Page Type | Purpose | What Makes It Work |
|---|---|---|
| What Is GEO? (GEO vs SEO) | Own the definition | Clear definition + comparison table |
| GEO Guidelines | Become the “how-to” reference | Checklists, frameworks, neutral tone |
| AI Search Statistics (optional) | Become a data source | Accuracy, citations, update cadence |

Key principles

Write for answer extraction, not dwell time

Remove promotional language

Optimize for clarity, repeatability, and neutrality

If an AI needs to explain the topic in 5 sentences, your page should already contain those 5 sentences.

3) Build off-site consensus (not just backlinks)

Prioritize sources where your brand appears in context, not in isolation:

Reputable earned media: industry publications, research reports, white papers

Relevant communities: Reddit threads, professional forums, Slack or Discord groups

Partnerships & integrations: “X integrates with Y” is highly citable language

Reviews & analyst ecosystems: G2, Capterra, analyst notes, even smaller niche reports

The goal is simple:

Make multiple independent sources describe you the same way.
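One rough way to check whether independent sources "describe you the same way" is to compare each third-party description against your canonical positioning sentence. The sketch below uses plain word overlap (Jaccard similarity) as a crude proxy, which is an assumption on our part. It ignores synonyms and word order, so treat low scores as prompts for manual review. If you need more nuance, consider swapping in a semantic-similarity method. The brand, source labels, and 0.3 threshold are all hypothetical.

```python
import re

# A few filler words to ignore; extend for your own category language.
STOPWORDS = {"a", "an", "the", "and", "or", "for", "of", "to", "is", "it", "in", "that", "with"}


def terms(text: str) -> set[str]:
    """Lowercased word set with stopwords removed."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of terms two descriptions have in common (1.0 = identical sets)."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def consistency_scores(canonical: str, sources: dict[str, str]) -> dict[str, float]:
    """Similarity of each third-party description to your canonical positioning."""
    base = terms(canonical)
    return {name: jaccard(base, terms(text)) for name, text in sources.items()}


def off_message(scores: dict[str, float], threshold: float = 0.3) -> list[str]:
    """Sources whose wording drifts furthest from the canonical description."""
    return sorted(name for name, score in scores.items() if score < threshold)


# Hypothetical brand and sources.
canonical = "Acme is a CRM for early-stage startups that integrates with Slack"
sources = {
    "review_site": "Acme is a lightweight CRM for early-stage startups",
    "forum_thread": "Acme? It's basically a project tracker for agencies",
}

scores = consistency_scores(canonical, sources)
print(scores)
print(off_message(scores))  # prints: ['forum_thread']
```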

4) Make content extractable

If a page can’t be summarized into:

a 5–7 bullet TL;DR

a definition block

a table

a checklist

a FAQ

…it’s probably not optimized for the answer layer.

| Extractable Element | Present? |
|---|---|
| 5–7 bullet TL;DR | Yes / No |
| One-sentence definition block | Yes / No |
| Comparison or data table | Yes / No |
| Step-by-step checklist | Yes / No |
| Direct, quotable FAQ | Yes / No |
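Part of this checklist can be automated for HTML pages. The sketch below uses Python's standard-library `html.parser` together with a few simple heuristics, all of which are our own assumptions:

- Any list with 5–7 items counts as a TL;DR.
- A paragraph shaped like "X is a/an/the ..." counts as a definition block.
- Any `<table>` counts as a table.
- An `<ol>` stands in for a step-by-step checklist.
- Two or more question-style `<h2>`/`<h3>` headings count as an FAQ.

These rules are illustrative rather than any standard, and they will miss pages that use different markup.

```python
import re
from html.parser import HTMLParser

# Heuristic: a paragraph opening with "<subject> is/are a/an/the ..." reads as a definition.
DEFINITION = re.compile(r"^[^.?!]{1,80}? (?:is|are) (?:a|an|the) ")


class ExtractabilityScanner(HTMLParser):
    """Collects the raw signals the audit needs (heuristic, not a standard)."""

    def __init__(self):
        super().__init__()
        self.list_sizes = []      # item count of each closed <ul>/<ol>
        self._open_lists = []     # stack of item counters, so nested lists work
        self.has_table = False
        self.has_ordered_list = False
        self.paragraphs = []
        self.question_headings = 0
        self._capturing = None    # tag ("p", "h2", "h3") whose text is being collected
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag in ("ul", "ol"):
            self._open_lists.append(0)
            if tag == "ol":
                self.has_ordered_list = True
        elif tag == "li" and self._open_lists:
            self._open_lists[-1] += 1
        elif tag == "table":
            self.has_table = True
        elif tag in ("p", "h2", "h3"):
            self._capturing, self._buffer = tag, []

    def handle_endtag(self, tag):
        if tag in ("ul", "ol") and self._open_lists:
            self.list_sizes.append(self._open_lists.pop())
        elif tag == self._capturing:
            text = " ".join("".join(self._buffer).split())  # collapse whitespace
            if tag == "p":
                self.paragraphs.append(text)
            elif text.endswith("?"):
                self.question_headings += 1
            self._capturing = None

    def handle_data(self, data):
        if self._capturing:
            self._buffer.append(data)


def audit(html: str) -> dict[str, bool]:
    """Map each checklist row to Yes (True) / No (False) for one page."""
    scanner = ExtractabilityScanner()
    scanner.feed(html)
    scanner.close()
    return {
        "5-7 bullet TL;DR": any(5 <= n <= 7 for n in scanner.list_sizes),
        "One-sentence definition block": any(DEFINITION.match(p) for p in scanner.paragraphs),
        "Comparison or data table": scanner.has_table,
        "Step-by-step checklist": scanner.has_ordered_list,
        "Direct, quotable FAQ": scanner.question_headings >= 2,
    }


page = """
<h2>TL;DR</h2>
<ul><li>One</li><li>Two</li><li>Three</li><li>Four</li><li>Five</li></ul>
<p>GEO (generative engine optimization) is the practice of earning mentions in AI answers.</p>
<h2>How do I start?</h2>
<p>Pick two or three engines your buyers use.</p>
<h2>Does GEO replace SEO?</h2>
<p>No. It wraps around it.</p>
"""

for element, present in audit(page).items():
    print(f"{element}: {'Yes' if present else 'No'}")
```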

5) Measure the right thing

In a zero-click world, the KPI shift is:

from traffic → to mentions, citations, and positioning

Then connect those leading indicators to lagging outcomes:

branded search lift

direct traffic

pipeline quality

sales-cycle efficiency
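Once answers are logged consistently, the leading indicators are simple ratios. The sketch below is a minimal illustration. It assumes you re-run a fixed prompt set on a schedule and record which brands each answer mentions and which domains it cites. `AnswerRun`, the brands (Acme, Globex, Initech), and the domains are all hypothetical. "Share of voice" here means your brand's mentions divided by all brand mentions across the logged answers.

```python
from dataclasses import dataclass, field


@dataclass
class AnswerRun:
    """One answer from a scheduled re-run of a fixed prompt set (hypothetical schema)."""
    engine: str
    prompt: str
    brands_mentioned: list[str] = field(default_factory=list)
    domains_cited: list[str] = field(default_factory=list)


def visibility_metrics(runs: list[AnswerRun], brand: str, domain: str) -> dict[str, float]:
    """Mention rate, citation rate, and share of voice across the logged answers."""
    total = len(runs)
    mentioned = sum(brand in r.brands_mentioned for r in runs)   # answers naming the brand
    cited = sum(domain in r.domains_cited for r in runs)         # answers citing your domain
    all_mentions = sum(len(r.brands_mentioned) for r in runs)    # every brand mention, any brand
    return {
        "mention_rate": mentioned / total if total else 0.0,
        "citation_rate": cited / total if total else 0.0,
        "share_of_voice": mentioned / all_mentions if all_mentions else 0.0,
    }


# Invented runs for illustration only.
runs = [
    AnswerRun("Engine A", "best crm for startups", ["Acme", "Globex", "Initech"], ["acme.com", "g2.com"]),
    AnswerRun("Engine A", "acme alternatives", ["Globex", "Initech"], ["reddit.com"]),
    AnswerRun("Engine B", "what is a startup crm", ["Acme", "Globex"], ["globex.com"]),
    AnswerRun("Engine B", "crm pricing", ["Globex"], []),
]

print(visibility_metrics(runs, "Acme", "acme.com"))
# Per-engine view: filter the runs before computing.
print(visibility_metrics([r for r in runs if r.engine == "Engine B"], "Acme", "acme.com"))
```

If you track these numbers per engine and per month, you can line them up against branded search, direct traffic, and pipeline data from your existing analytics.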

Quick Summary

Across engines, formats, and use cases, the pattern is consistent: AI systems favor sources that are clear, repeatable, corroborated, and safe to cite. The practical shift for teams is from publishing content to designing references.

That means choosing where your buyers ask questions, deciding which explanations you want AI to reuse, reinforcing those explanations across independent sources, and structuring everything for easy extraction. When done well, GEO changes how impact is created:

Visibility happens before the click

Influence accumulates across answers, not sessions

Authority is measured by repetition, not reach

In an answers-first ecosystem, the brands that win are not the loudest or the most prolific. They are the ones AI systems return to—again and again—when explaining the category. Owning the answer layer is no longer optional. It’s how modern visibility compounds.

Closing thought

GEO is often described as “optimizing for AI.” The deeper reality is simpler—and more durable.

You are optimizing for how information becomes trusted in an ecosystem where machines read, synthesize, and summarize the web on behalf of users.

AI systems do not reward volume. They reward signals. They look for patterns they can rely on, sources they can return to, and narratives that remain stable across contexts. The brands that win in this environment won’t be the ones publishing the most content. They will be the ones that build a presence that is:

Consistent across sources — the same message reinforced wherever AI looks

Easy to extract — clear structure, explicit claims, minimal ambiguity

Safe to cite — factual, verifiable, and low-risk to reference

Repeatedly corroborated — echoed by documentation, comparisons, and third-party validation

In an answers-first world, visibility is no longer about being seen. It’s about being trusted enough to be repeated.