Your brand ranks #1 on Google. You've invested in content, backlinks, and technical SEO for years. But when someone asks ChatGPT "what's the best tool for [your category]," your brand doesn't come up. Or worse, it does — and the description is wrong.

That's the new blind spot most marketing teams don't have a system for yet.

AI answer monitoring is the practice of tracking how AI platforms generate, describe, and position your brand in their responses. It's not a variation of what you already do. It's a different discipline entirely.

AI Answer Monitoring Is Not Your Existing Brand Monitoring Tool

Traditional brand monitoring was built for a different internet. Google Alerts tracks keyword mentions. Social listening tools capture reposts and direct references. Both are designed around a world where content travels as-is, from one page to another.

AI doesn't work that way.

Large Language Models synthesize new descriptions based on their training data and retrieval processes. They don't reprint what your website says. They generate a version of what they "think" about your brand, informed by years of fragmented sources — some current, some outdated, some from forums you haven't read in years.

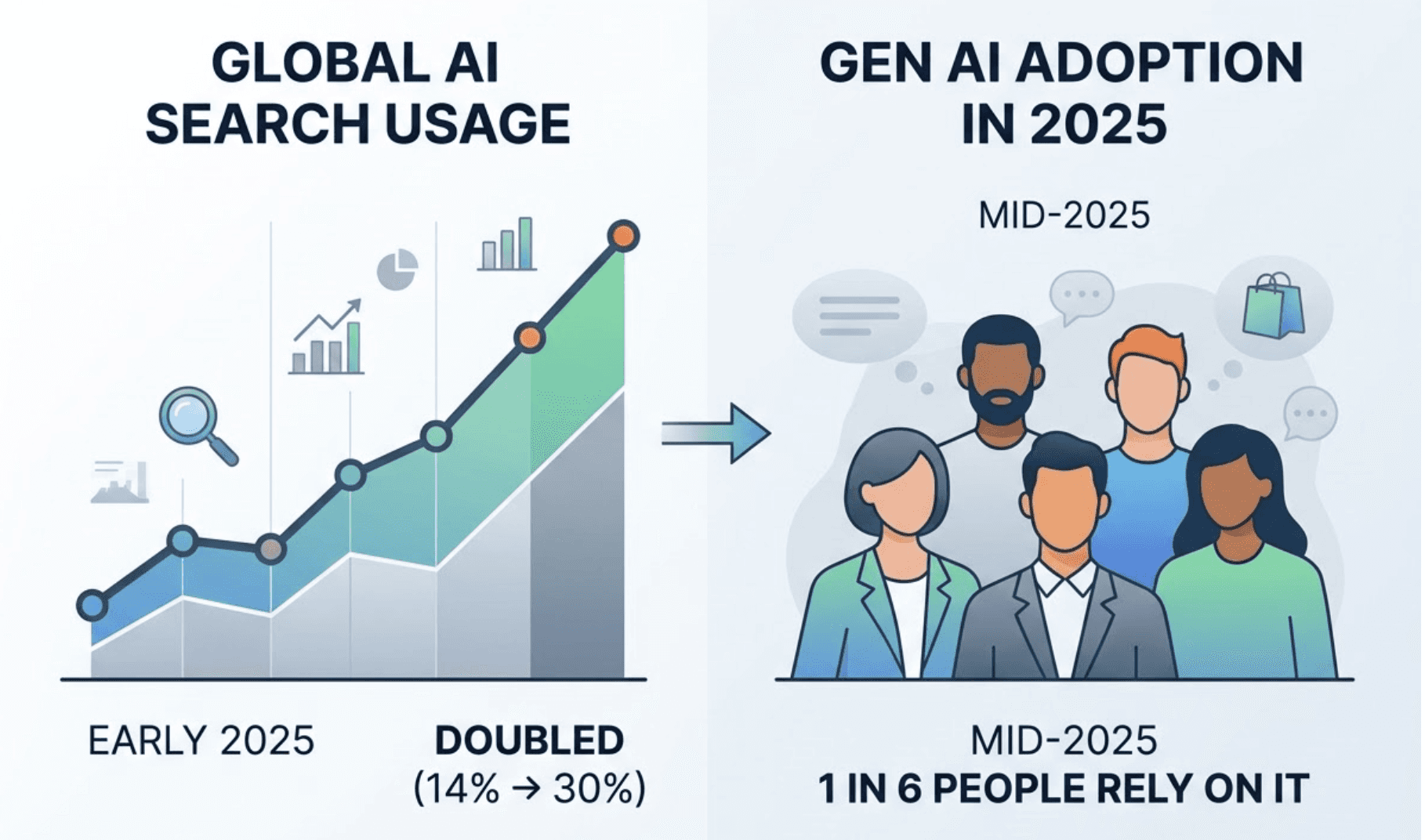

Daily use of AI as a search tool doubled from 14% to nearly 30% in early 2025. Over the same period, global adoption of generative AI tools reached 16.3% by mid-2025, meaning roughly one in six people worldwide now use these systems, including to learn about products and make decisions.

The gap between traditional and AI monitoring isn't about features. It's about what gets measured:

| Feature | Traditional Brand Monitoring | AI Answer Monitoring |
|---|---|---|
| Primary mechanism | Keyword crawling of static pages | Generative synthesis and RAG-based retrieval |
| Data nature | Direct mentions and literal reprints | Synthesized descriptions and derived sentiment |
| Visibility metric | SERP ranking | AI Visibility Score and Mention Rate |
| User interaction | Click-through to third-party sites | Zero-click synthesized answers |
| Brand authority | Backlinks and domain authority | Citation frequency and narrative alignment |

The practical consequence: traditional tools monitor what is said on the internet. AI answer monitoring tracks what is generated by the internet's most trusted synthesis engines.

What a Real AI Answer Monitoring Dashboard Actually Measures

Most teams start by asking: "Is our brand showing up in AI answers?" That's the wrong first question.

The right question is: "When our brand shows up, what is the AI saying, to whom, and in what context?"

A proper AI answer monitoring analytics layer tracks seven core metrics across every response:

| Metric | What It Measures | Why It Matters |
|---|---|---|
| Visibility | % of tested prompts containing your brand | Overall presence in generative search |
| Sentiment | Positive / Neutral / Negative polarity score | Qualitative reputation in AI-generated answers |
| Position | Rank order within a list or paragraph | Likelihood of being the top recommendation |
| Volume | Estimated search frequency of the prompt | Market reach of each AI interaction |
| Mentions | Absolute count of brand occurrences across sessions | Raw frequency of brand recall |
| Intent | Match between your brand and user query goals | Relevance to high-conversion queries |
| CVR | Probability a mention drives a website visit or lead | Referral effectiveness of the AI's citation |
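To make the first few metrics concrete, here is a minimal Python sketch of how Visibility, Mentions, and Position could be computed from a batch of collected answers. It is an illustration under assumptions, not any vendor's scoring method: the function name `brand_metrics`, the brand names, and the sample answers are all hypothetical, and Position is only read from numbered lists.

```python
import re


def brand_metrics(responses, brand):
    """Compute visibility, mentions, and average list position for one brand.

    `responses` maps each tested prompt to the AI answer text collected for it.
    All names and data here are hypothetical, for illustration only.
    """
    pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    hits = 0        # prompts whose answer mentions the brand at least once
    mentions = 0    # total brand occurrences across all answers
    positions = []  # rank in a numbered list, where the answer has one

    for answer in responses.values():
        count = len(pattern.findall(answer))
        if count == 0:
            continue
        hits += 1
        mentions += count
        # Position: the first numbered item ("1. ..." or "2) ...") naming the brand.
        for line in answer.splitlines():
            item = re.match(r"\s*(\d+)[.)]\s+(.*)", line)
            if item and pattern.search(item.group(2)):
                positions.append(int(item.group(1)))
                break

    visibility = 100 * hits / len(responses) if responses else 0.0
    avg_position = sum(positions) / len(positions) if positions else None
    return {
        "visibility_pct": visibility,
        "mentions": mentions,
        "avg_position": avg_position,
    }


# Hypothetical answers collected for four tested prompts.
sample = {
    "best crm for startups": "1. Acme CRM\n2. ExampleCo\n3. Other",
    "crm with email sync": "ExampleCo and Acme CRM both support it. ExampleCo is cheaper.",
    "top sales tools": "1. Acme CRM\n2. Other",
    "crm pricing comparison": "No clear winner.",
}
result = brand_metrics(sample, "ExampleCo")
print(result)
```

In this sample the brand appears in two of four answers, three times in total, and second in the one numbered list that names it. Sentiment, Intent, and CVR need a classifier or analytics data and are deliberately left out of the sketch.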

Visibility is the baseline. But sentiment is where brands get hurt.

An AI might correctly identify your brand name and then describe your pricing incorrectly, attribute a competitor's features to you, or frame your product as suited for a market segment you don't serve. These aren't edge cases. Research estimates that AI systems fabricate or misframe information up to 27% of the time — and without active monitoring, you won't know it's happening.

The Air Canada case is instructive: the airline's chatbot gave a customer incorrect information about its bereavement fare policy, and a Canadian tribunal held the airline responsible and ordered it to compensate him. Google's parent company lost roughly $100 billion in market value after its Bard chatbot made a factual error in a promotional demo. These aren't just big-brand problems. For any brand being described by AI, the stakes are real.

How AI Answer Monitoring Works Across Different Platforms

There's no such thing as a single "AI answer." Each platform generates its own version.

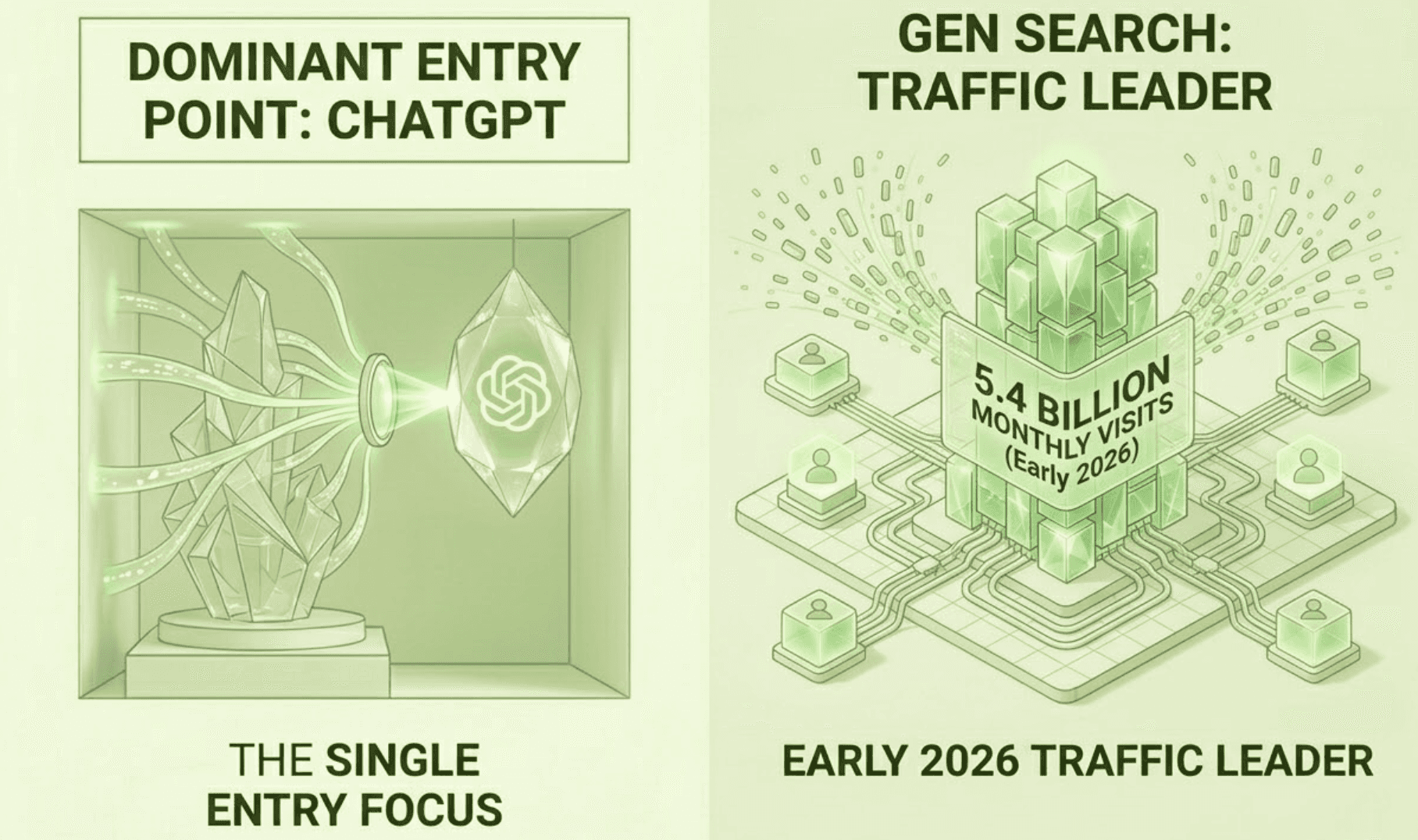

ChatGPT recorded 5.4 billion monthly visits in early 2026, making it the dominant entry point for generative search. Its responses lean heavily on pre-trained knowledge and selective web browsing, which means brands with strong historical presence on Wikipedia, major news outlets, and high-authority industry sites tend to perform better.

Perplexity operates on a different logic entirely. It prioritizes real-time retrieval with sentence-level citations, making it the most measurable platform for tracking citation rates. In the U.S., Perplexity captures close to 20% of AI-driven referral traffic — significantly above its global average.

Gemini integrates directly with Google's index and often favors properties within Google's ecosystem. For brands already investing in Google's structured data formats, this creates a natural advantage.

DeepSeek, Qwen, and Doubao represent a growing class of reasoning-heavy models with lower citation transparency. For B2B and technical brands, these platforms matter more than most teams realize — and they require monitoring at the prompt level rather than through link-tracking.

The bottom line: your AI answer monitoring system needs to cover all of these platforms, not just the one your team uses personally. What ChatGPT says about your brand and what Perplexity says can be meaningfully different. Both are reaching your potential customers.

5 Common Mistakes in AI Answer Monitoring Strategy

Most teams that invest in AI answer monitoring still leave significant gaps. Here's where the strategy breaks down:

Mistake 1: Only monitoring your own brand. AI answers are comparative by nature. When a user asks for "top alternatives to [your competitor]," and your brand doesn't appear, you've lost that customer at the moment of intent. Real AI answer monitoring tracks your position relative to competitors across shared prompt categories.

Mistake 2: Treating visibility as the final metric. High visibility with negative or inaccurate sentiment is worse than low visibility. Queries of eight words or longer have a 57% higher chance of triggering a detailed AI response — and in those longer, more specific answers, sentiment and narrative accuracy carry disproportionate weight.

Mistake 3: Monitoring at the brand level, not the prompt level. Tracking whether "your brand name" appears in AI answers misses the higher-value question: are you appearing when users ask about the specific problems you solve? An AI answer monitoring solution that doesn't map prompt-level performance to your product categories is giving you incomplete data.

Mistake 4: Running monthly reports. AI answers shift continuously as models are updated and retrieval layers pull in fresh sources. A viral Reddit thread or a competitor's new press coverage can shift an AI's description of your brand within days. Weekly monitoring is the minimum viable cadence; real-time alerts for sentiment changes are worth the overhead.

Mistake 5: Not closing the loop into content strategy. The purpose of an AI answer monitoring platform isn't to generate reports. It's to tell you which content to create or update so that AI engines start citing your brand as the authoritative source for specific topics. If your dashboard doesn't connect visibility gaps to content actions, you're collecting data without using it.
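Mistakes 1 and 3 both come down to measuring at the prompt level and relative to competitors. The sketch below shows one way that comparison might look in Python. Every name in it (`share_by_category`, `category_gaps`, the brands, the prompts) is a hypothetical placeholder, and brand detection is a naive case-insensitive substring match that a real system would likely replace with something more robust.

```python
from collections import defaultdict


def share_by_category(records, brands):
    """Return {category: {brand: share of prompts whose answer mentions it}}.

    `records` is an iterable of (category, prompt, answer_text) tuples.
    Matching is a simple substring check, which is an assumption of this sketch.
    """
    totals = defaultdict(int)
    hits = defaultdict(lambda: defaultdict(int))
    for category, _prompt, answer in records:
        totals[category] += 1
        text = answer.lower()
        for brand in brands:
            if brand.lower() in text:
                hits[category][brand] += 1
    return {
        category: {brand: hits[category][brand] / n for brand in brands}
        for category, n in totals.items()
    }


def category_gaps(shares, own_brand, rivals):
    """List the categories where at least one rival is mentioned more often."""
    gaps = []
    for category, share in shares.items():
        leaders = [r for r in rivals if share[r] > share[own_brand]]
        if leaders:
            gaps.append((category, leaders))
    return gaps


# Hypothetical prompt set grouped by product category.
records = [
    ("alternatives", "top alternatives to RivalSoft", "Consider OtherTool or RivalSoft Lite."),
    ("alternatives", "RivalSoft competitors", "ExampleCo and OtherTool are common picks."),
    ("invoicing", "best invoicing tool for freelancers", "ExampleCo is a popular choice."),
]
shares = share_by_category(records, ["ExampleCo", "OtherTool"])
gaps = category_gaps(shares, "ExampleCo", ["OtherTool"])
print(shares)
print(gaps)
```

Here the hypothetical brand leads in "invoicing" but trails a rival in "alternatives", which is the kind of gap a brand-level mention count would hide.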

How to Build a Monitoring Workflow That Actually Drives Action

Knowing the metrics is one thing. Building a repeatable system is another.

Step 1: Define your prompt set. Start with 25 to 50 prompts that map to your customer's information journey — branded questions, categorical research, and comparative queries. These become your baseline.

Step 2: Establish baseline scores. Run your initial AI answer monitoring analytics across all target platforms. Document your Visibility Score and Sentiment Score for each prompt. This is your "before" state.

Step 3: Run source analysis. Which domains is the AI citing when it talks about your category? If a third-party aggregator or a competitor's blog is being cited for facts about your product, "source substitution" has occurred: the AI's retrieval layer is surfacing someone else's page instead of your own content.

Step 4: Fix the content gaps. Move high-value product information out of gated PDFs and heavy JavaScript pages. Create clean, bot-readable fact sheets that answer specific questions directly. Structured data and schema markup significantly improve AI crawlability.

Step 5: Set your reporting cadence and escalation triggers. Weekly summaries work for most teams. Add automated alerts for sudden sentiment drops or when a competitor appears in prompts where you previously held the top position.
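Steps 3 and 5 lend themselves to a small amount of automation. The following Python sketch counts which domains an answer cited and compares two weekly snapshots to raise alerts. It is a rough outline under stated assumptions: the snapshot layout, the sentiment scale of -1 to 1, the 0.2 drop threshold, and all function, brand, and domain names are hypothetical choices made for this example.

```python
from collections import Counter
from urllib.parse import urlparse


def cited_domains(citation_urls):
    """Count how often each domain appears among the URLs an AI answer cited.

    A leading "www." is dropped; other subdomains are counted separately.
    """
    domains = []
    for url in citation_urls:
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[4:]
        if host:
            domains.append(host)
    return Counter(domains)


def escalation_alerts(previous, current, own_brand, max_sentiment_drop=0.2):
    """Compare two snapshots shaped {prompt: {"sentiment": float, "top_brand": str}}.

    The threshold and snapshot shape are assumptions of this sketch.
    """
    alerts = []
    for prompt, now in current.items():
        before = previous.get(prompt)
        if before is None:
            continue  # new prompt, no baseline to compare against yet
        if before["sentiment"] - now["sentiment"] >= max_sentiment_drop:
            alerts.append((prompt, "sentiment_drop"))
        if before["top_brand"] == own_brand and now["top_brand"] != own_brand:
            alerts.append((prompt, "lost_top_position"))
    return alerts


# Hypothetical citations returned for a product question.
urls = [
    "https://www.aggregator.example/reviews/exampleco",
    "https://blog.rival.example/vs-exampleco",
    "https://aggregator.example/pricing",
]
counts = cited_domains(urls)
substituted = counts["exampleco.example"] == 0  # own domain never cited

last_week = {
    "best invoicing tool": {"sentiment": 0.6, "top_brand": "ExampleCo"},
    "invoicing for freelancers": {"sentiment": 0.4, "top_brand": "ExampleCo"},
}
this_week = {
    "best invoicing tool": {"sentiment": 0.1, "top_brand": "ExampleCo"},
    "invoicing for freelancers": {"sentiment": 0.4, "top_brand": "OtherTool"},
}
alerts = escalation_alerts(last_week, this_week, "ExampleCo")
print(counts, substituted, alerts)
```

Keeping the baseline from Step 2 as the `previous` snapshot means the same comparison can run on whatever cadence the team settles on.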

Topify simplifies this entire workflow. Rather than manually building prompt taxonomies and cross-referencing platform outputs, Topify's prompt discovery engine surfaces high-volume AI queries relevant to your brand automatically. Its one-click execution layer lets teams deploy content strategies directly from dashboard insights — no separate workflow required.

Topify: An AI Answer Monitoring Platform Built for This Problem

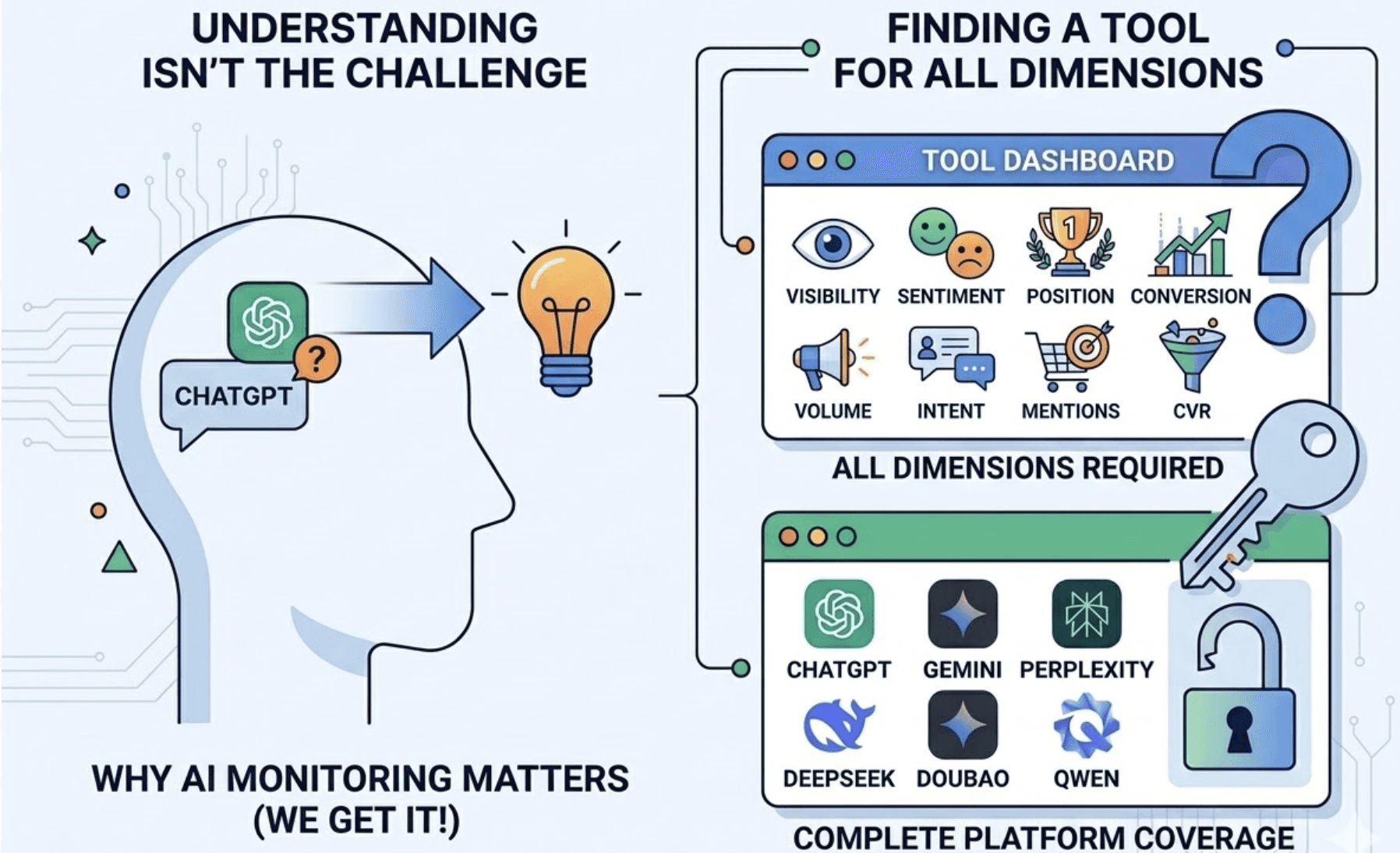

The challenge most teams face isn't understanding why AI answer monitoring matters. It's finding an AI answer monitoring tool that actually covers all the dimensions the problem requires.

Topify tracks all seven core metrics — Visibility, Sentiment, Position, Volume, Mentions, Intent, and CVR — across ChatGPT, Gemini, Perplexity, DeepSeek, Doubao, Qwen, and other major AI platforms from a single dashboard. Built by founding researchers from OpenAI and Google SEO practitioners with Fortune 500 track records, the platform was designed specifically for the non-deterministic nature of generative search.

A few features worth knowing:

Competitive benchmarking in real time. Topify automatically detects which competitors the AI is recommending alongside or instead of your brand, and shows you exactly which prompts they're winning. No manual setup required.

Near-Top 3 gap analysis. The platform identifies prompts where your brand is close to breaking into the AI's top recommendations — the highest-ROI content opportunities on your list.

Source analysis at scale. Topify shows which external domains AI platforms are citing when describing your category. If your brand's own content isn't in that citation set, you know exactly where to focus.

One-click strategy execution. State your goal in plain English. Review the proposed strategy. Deploy. The system handles the execution without manual workflows.

Pricing starts at $99/month for the Basic plan (100 prompts, 9,000 AI answer analyses, 4 projects), with the Pro plan at $199/month (250 prompts, 22,500 analyses, 10 seats) and Enterprise from $499/month for custom coverage and dedicated support.

For teams evaluating AI answer monitoring software, the practical benchmark is simple: can the tool tell you not just if your brand is visible, but how it's being described, where that description comes from, and what to do about it? That's what separates a monitoring dashboard from a monitoring system.

Conclusion

The shift from traditional search to generative synthesis isn't coming. It's here. And the gap between teams with a structured AI answer monitoring strategy and those without is widening every month.

Visibility is table stakes. The real question is whether the AI is describing your brand with accuracy, positive intent, and relevance to the prompts that drive your business.

You can't manage what you're not tracking. And right now, most brands still aren't tracking the right things.

FAQ

What is an AI answer monitoring dashboard? An AI answer monitoring dashboard is a tool that tracks how AI platforms like ChatGPT, Gemini, and Perplexity generate, describe, and position your brand in their responses. Unlike traditional analytics, it measures not just whether your brand appears, but the sentiment, accuracy, position, and citation quality of every AI-generated mention.

How do I measure the effectiveness of my AI answer monitoring strategy? Start with a baseline Visibility Score and Sentiment Score across your core prompts. Track week-over-week changes in both metrics, and correlate improvements with specific content updates. An effective AI answer monitoring system will show you which content changes caused which visibility shifts.

What's the difference between AI answer monitoring tools and traditional SEO tools? Traditional SEO tools track rankings, backlinks, and keyword positions on search engine results pages. AI answer monitoring tools track how AI systems synthesize and describe your brand in generated responses — a fundamentally different measurement problem that existing SEO tools were not built to solve.

How much does AI answer monitoring software cost? Pricing varies by platform and coverage depth. Topify's AI answer monitoring platform starts at $99/month for the Basic plan, $199/month for Pro, and from $499/month for Enterprise. Entry-level plans cover core visibility and sentiment tracking; higher tiers add competitive benchmarking, source analysis, and custom prompt coverage.

What's the best AI answer monitoring platform for small teams? For small teams, prioritize platforms that offer cross-platform coverage (at least ChatGPT, Gemini, and Perplexity), built-in sentiment analysis, and a clear path from monitoring data to content action. Topify's Basic plan at $99/month is designed for individual brands and SMBs that need structured AI visibility data without enterprise overhead.