Search for "best [your category] tool" on ChatGPT right now. Your competitor appears. You don't. You check your GEO dashboard and it shows solid mention counts across the past 30 days.

Both things are true at the same time. That's not a data glitch. That's a tracking gap.

Most AI search optimization platforms were built to answer one question: "Is our brand getting mentioned?" That's the wrong question. The right question is: "Are we being mentioned on the right AI platforms, in the right model versions, in the right languages, and at the right stage of the buyer's journey?" Most tools can't answer any of those.

Here's what's actually missing.

Most AI Search Optimization Tools Only Track What's Easy to Measure

The default dashboard for most GEO tools looks reassuring: mention counts, sentiment scores, a graph trending upward. The problem is those numbers often represent a single platform, a single language, and a single point in time.

ChatGPT holds roughly 80% of AI search visits, but Perplexity's user base skews heavily toward high-income professionals, with 65% of its users classified as senior white-collar workers. Google Gemini pulls from its own knowledge graph and YouTube ecosystem, producing citation patterns that look nothing like ChatGPT. DeepSeek and Microsoft Copilot operate on separate architectures entirely.

A brand can rank first in ChatGPT responses for a category query and be completely absent from Perplexity's answer to the identical prompt. That happens because the two platforms weight sources differently: Perplexity favors real-time crawling and research papers, while ChatGPT leans on Bing index data and its training corpus.

That's not a gap you can spot if your platform only tracks one engine.
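To make the gap concrete, here is a minimal sketch of a per-engine comparison. It assumes you already store one record per prompt and engine; the field names, brand names, and sample data are hypothetical and not taken from any specific tool.

```python
from collections import defaultdict

# Hypothetical sample records: one row per (prompt, engine) response.
responses = [
    {"prompt": "best crm tool", "engine": "chatgpt", "brands": ["Acme", "Rival"]},
    {"prompt": "best crm tool", "engine": "perplexity", "brands": ["Rival"]},
    {"prompt": "best crm tool", "engine": "gemini", "brands": ["Rival", "Acme"]},
]


def engine_gaps(records, brand, competitor):
    """Return {prompt: [engines]} where the competitor is mentioned but the brand is not."""
    gaps = defaultdict(list)
    for record in records:
        if competitor in record["brands"] and brand not in record["brands"]:
            gaps[record["prompt"]].append(record["engine"])
    return dict(gaps)


print(engine_gaps(responses, "Acme", "Rival"))
```

With this sample data the only gap is on Perplexity, which a ChatGPT-only dashboard would never surface.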

Why AI Model Versions Make Your Visibility Data Expire

This is the tracking dimension that almost no one monitors, and it's quietly responsible for some of the sharpest brand visibility drops in the past 18 months.

AI models aren't updated continuously. They update in jumps. GPT-4o (May 2024, knowledge cut-off October 2023), OpenAI o1 (September 2024), and GPT-5.4 (March 2026, cut-off August 2025) each incorporate a new training data snapshot. The internal weighting of authority signals shifts with each version. A brand that built strong third-party coverage in 2023 might find that weight fading in GPT-5.4 as the model prioritizes fresher signals from 2024 and 2025.

The retrieval behavior also changes. GPT-4o-Search consults an average of 4.1 web pages to answer a query. Google AI Overview consults 8.6. The threshold for being included in a summary is materially different across engines and model versions, and it can change every time a major update ships.

Without version-specific tracking, you can't tell whether a visibility drop happened because your content strategy changed or because a model update shifted the citation ecosystem. You're diagnosing a patient without knowing which doctor they saw.

Platforms built for serious AI search optimization need to log which model version generated each response, so teams can detect visibility decay before it compounds.
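A rough sketch of what version-aware logging enables, assuming each stored response carries the model version that produced it. The version labels and log rows below are placeholders, not real measurements.

```python
from collections import defaultdict

# Hypothetical log rows: each response is stored with the model version that generated it.
log = [
    {"model_version": "model-v1", "mentioned": True},
    {"model_version": "model-v1", "mentioned": True},
    {"model_version": "model-v1", "mentioned": False},
    {"model_version": "model-v2", "mentioned": True},
    {"model_version": "model-v2", "mentioned": False},
    {"model_version": "model-v2", "mentioned": False},
    {"model_version": "model-v2", "mentioned": False},
]


def mention_rate_by_version(rows):
    """Share of responses that mention the brand, grouped by model version."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for row in rows:
        totals[row["model_version"]] += 1
        hits[row["model_version"]] += int(row["mentioned"])
    return {version: hits[version] / totals[version] for version in totals}


rates = mention_rate_by_version(log)
for version, rate in rates.items():
    print(f"{version}: {rate:.0%}")
```

If the rate falls between versions while your content stayed the same, the model update is the likelier cause.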

One Brand, Many Realities: Regions and Languages Aren't Optional

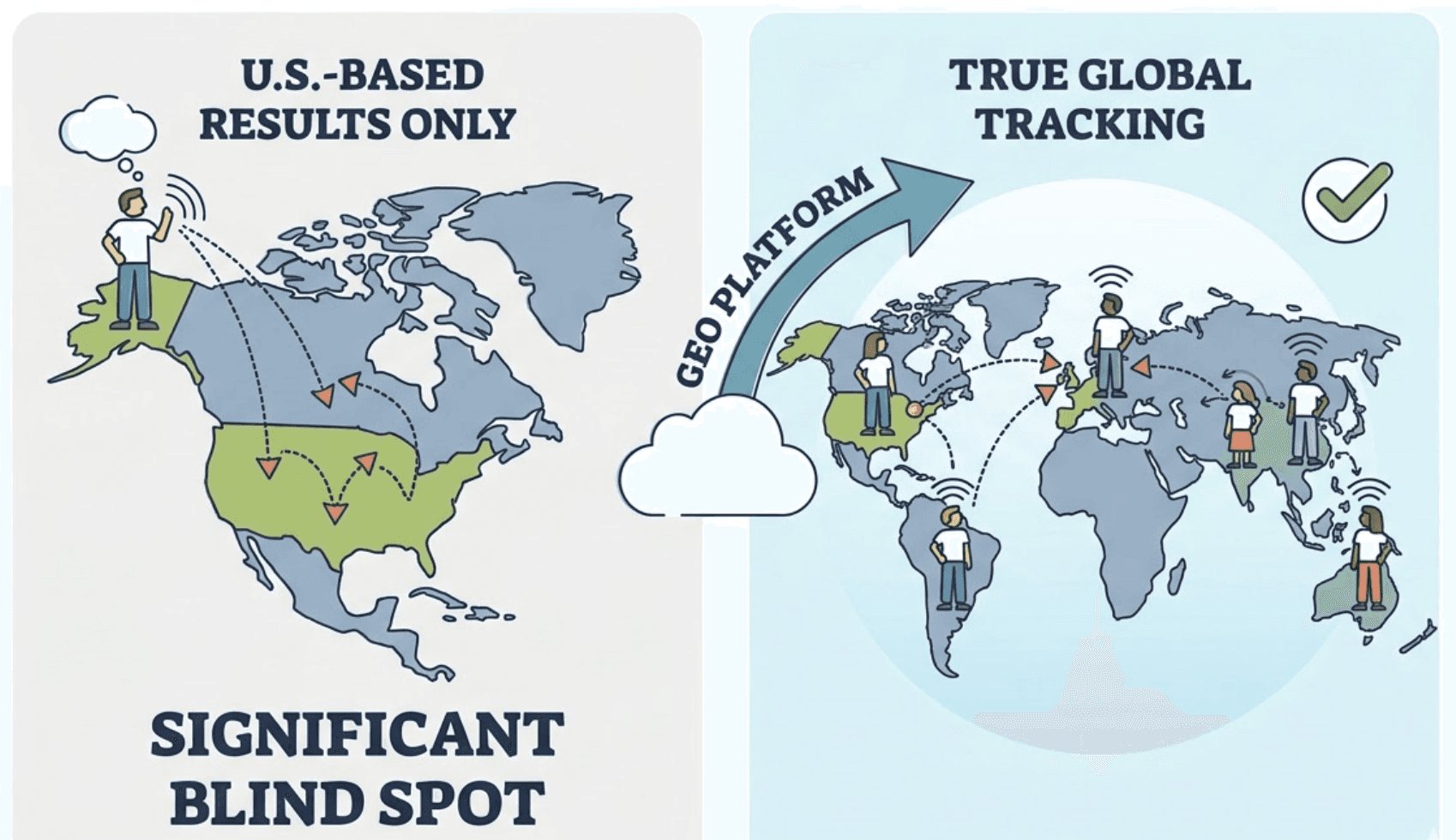

Most GEO platforms run English-language prompts against US-based results and call it global tracking. For any brand with international ambitions, that's a significant blind spot.

The citation patterns across regions vary dramatically. The Netherlands sees a 54.5% localized citation rate using .nl domains. Germany sits at 44.6% (.de). France at 35.3% (.fr). The UK, surprisingly, gets pulled into global .com citations 94% of the time. Each market has a dominant platform, too: Copilot leads in Germany, Grok in France, Perplexity in the Netherlands.
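One way a localized citation rate like the ones above could be computed is to take the share of cited URLs whose hostname ends in the country's top-level domain. This is a sketch under that assumption; the studies behind the figures may define the metric differently, and the URLs below are made up.

```python
from urllib.parse import urlparse


def localized_citation_rate(cited_urls, country_tld):
    """Fraction of cited URLs whose hostname ends with the given country TLD, e.g. ".nl"."""
    if not cited_urls:
        return 0.0
    hosts = [(urlparse(url).hostname or "") for url in cited_urls]
    local = sum(1 for host in hosts if host.endswith(country_tld))
    return local / len(hosts)


# Hypothetical citations collected for Dutch-language prompts.
citations_nl = [
    "https://voorbeeld.nl/gids",
    "https://example.com/guide",
    "https://winkel.example.nl/review",
    "https://example.org/report",
]

print(localized_citation_rate(citations_nl, ".nl"))
```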

The translation data is even more striking. Analysis of over 1.3 million citations found that translated websites achieved a 327% increase in visibility within Google AI Overviews compared to their untranslated counterparts. A site that ranks well in English can become nearly invisible when an international user queries the same topic in their native language.

In China, the landscape is structurally separate. ByteDance's Doubao reached 515 million users by mid-2025 with a 36.5% population penetration rate. Alibaba's Qwen and DeepSeek operate with training data and citation priorities that differ entirely from Western platforms. A brand that isn't tracking visibility across these regional ecosystems doesn't have a global picture. It has a US picture.

Topify covers DeepSeek, Doubao, and Qwen alongside ChatGPT, Gemini, and Perplexity precisely because the "AI search" market isn't one market. It's several, each with its own citation logic.

Google AI Overview Needs Its Own Tracking Layer

Google AI Overview sits at the intersection of traditional SEO and GEO, and it's the most commercially consequential placement in AI search right now. It's also the one most GEO platforms under-track.

The click economics around AIO are unambiguous. Organic CTR for queries that trigger an AI Overview dropped 61% between 2024 and late 2025, falling from 1.76% to 0.61%. If your brand isn't cited in the AIO box, you absorb most of that decline. If you are cited, the dynamic reverses: 35% higher organic CTR and 91% higher paid CTR compared to brands present on the page but not in the AIO citation set.

What makes AIO tracking technically distinct is that citation in the box doesn't correlate with traditional ranking. 80% of the sources cited in e-commerce AI Overviews don't rank in the top three organic positions. AIO visibility is driven by machine extractability, structured data, and content format, not domain authority. A brand can have a DA of 80 and still get cut out of the AIO if its content isn't parsed cleanly.
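If you record both the organic ranking and the AIO citation set for a query, you can check this disconnect on your own data. A minimal sketch, with hypothetical URLs and a hypothetical function name:

```python
def share_outside_top_n(aio_cited_urls, organic_ranking, n=3):
    """Fraction of AIO-cited URLs that do not appear in the top-n organic results."""
    if not aio_cited_urls:
        return 0.0
    top_n = set(organic_ranking[:n])
    outside = [url for url in aio_cited_urls if url not in top_n]
    return len(outside) / len(aio_cited_urls)


# Hypothetical data for a single query: organic results in rank order, plus the AIO citations.
organic = ["a.example/p", "b.example/p", "c.example/p", "d.example/p", "e.example/p"]
cited = ["b.example/p", "d.example/p", "e.example/p", "f.example/p"]

print(share_outside_top_n(cited, organic))
```

Averaging this number across your tracked queries gives a quick read on how loosely AIO citations follow rankings in your category.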

Topify's Source Analysis feature tracks the exact domains and URLs that AI platforms are citing, including within the AIO layer. That's the signal that tells you whether your content is actually reaching the summary box, not just ranking near it.

Awareness, Consideration, Decision: Three Visibility Metrics, Not One

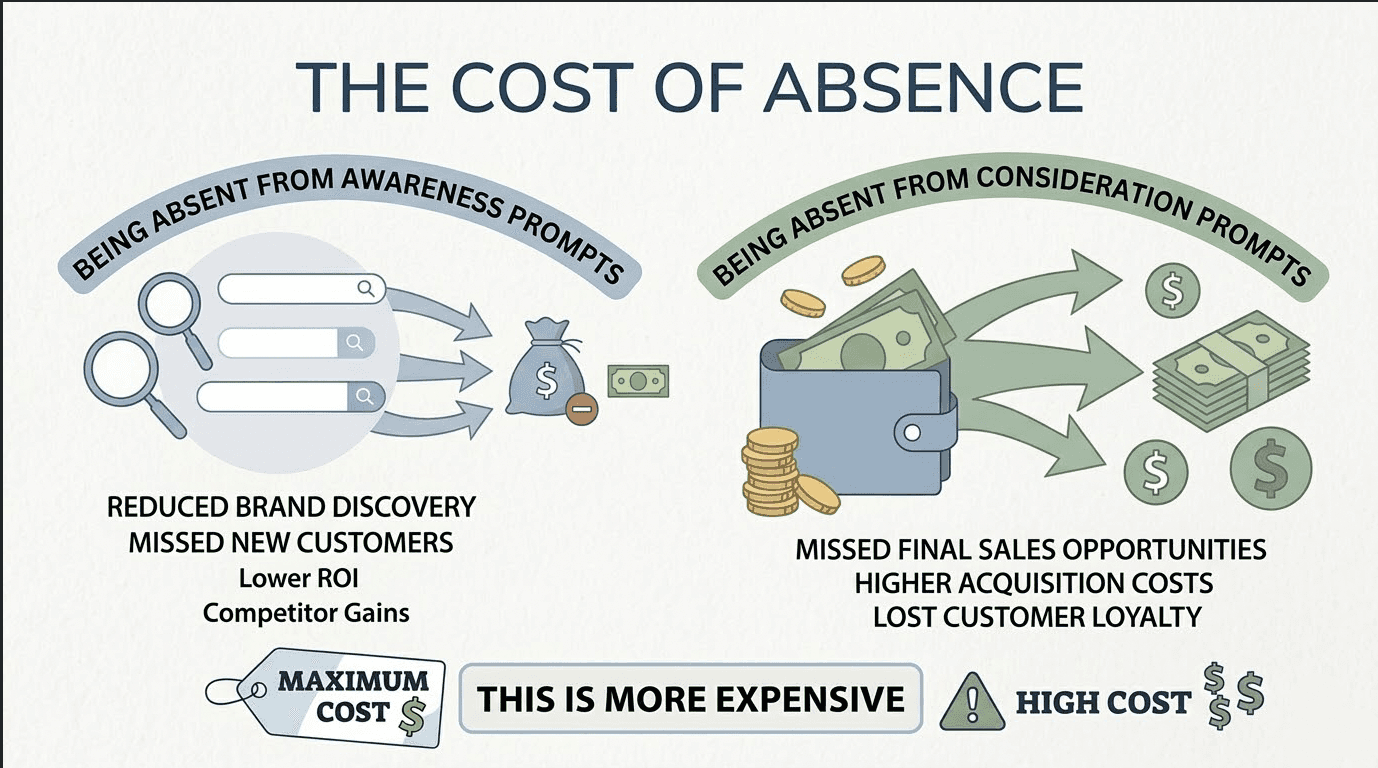

Counting total brand mentions is like reporting total website visits without segmenting by page or intent. The number looks healthy. The strategic picture it creates is misleading.

In AI search, the funnel stage of a prompt determines the commercial value of appearing in the answer. Informational queries ("what is the best way to improve SaaS discoverability") trigger 88% of all AI Overviews and reach the widest audience. They matter for brand awareness, but a mention there rarely produces a conversion signal. Consideration-stage prompts ("compare Topify vs. SE Ranking for AI search optimization") are where actual brand selection happens. Decision-stage prompts ("is Topify worth the $99/mo for an agency") are where revenue-intent visibility lives.

Being absent from consideration prompts is more expensive than being absent from awareness ones.

Perplexity's user demographic makes this point concrete. That platform's 65% high-income professional base isn't using it for general curiosity. They're using it to shortlist vendors, compare tools, and validate purchasing decisions. A brand that appears in ChatGPT's general answers but not in Perplexity's comparison responses is losing the evaluation stage to competitors, often without anyone on the marketing team noticing.

A GEO platform that only reports total mentions can't tell you which funnel stage you're winning or losing. Topify's AI search analytics segment visibility by intent, so teams can identify whether their content gap is at awareness, consideration, or the decision stage, and optimize accordingly.
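As an illustration of intent segmentation, the sketch below tags prompts with a funnel stage using simple keyword rules, then reports mention rate per stage. The keyword lists and sample results are assumptions made for the example; this is not how Topify or any other platform classifies intent, and a production classifier would need something more robust.

```python
from collections import defaultdict

# Hypothetical keyword rules, checked in order; anything unmatched counts as awareness.
STAGE_RULES = [
    ("decision", ("worth", "pricing", "price", "buy")),
    ("consideration", ("compare", " vs", "alternative")),
]


def classify_stage(prompt):
    """Assign a funnel stage to a prompt using the keyword rules above."""
    text = prompt.lower()
    for stage, keywords in STAGE_RULES:
        if any(keyword in text for keyword in keywords):
            return stage
    return "awareness"


def mention_rate_by_stage(records):
    """records: iterable of (prompt, brand_was_mentioned) pairs."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for prompt, mentioned in records:
        stage = classify_stage(prompt)
        totals[stage] += 1
        hits[stage] += int(mentioned)
    return {stage: hits[stage] / totals[stage] for stage in totals}


# Hypothetical tracking results for the three example prompts.
records = [
    ("what is the best way to improve SaaS discoverability", True),
    ("compare Topify vs. SE Ranking for AI search optimization", False),
    ("is Topify worth the $99/mo for an agency", True),
]

print(mention_rate_by_stage(records))
```

A healthy total can hide a zero at the consideration stage, which is the pattern this kind of breakdown is meant to expose.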

What AI Search Intelligence Actually Looks Like in Practice

The five tracking dimensions above aren't a checklist. They're a picture of what "AI search visibility" actually means when you decompose it.

Topify was built to track all five in a single platform. The coverage spans ChatGPT, Gemini, Perplexity, DeepSeek, Doubao, Qwen, and Google AI Overview. The analytics layer reports across seven metrics: visibility, sentiment, position, volume, mentions, intent, and CVR. Version-aware tracking logs which model generated which response. Regional and language segmentation surfaces the gaps that English-only monitoring hides.

The Source Analysis feature maps which third-party domains AI platforms are citing for your category, which is important given that 89% of AI citations come from earned media and 40% of all AIO citations come from Reddit. If your brand isn't showing up in the sources AI models trust, no amount of on-site optimization will fix that.

Topify's Basic plan starts at $99/month with a 30-day trial, covering ChatGPT, Perplexity, and AI Overviews with up to 100 tracked prompts. For teams managing multiple brands or needing deeper competitive coverage, the Pro plan at $199/month scales to 250 prompts and 10 seats.

Conclusion

The question isn't whether your brand is being mentioned in AI search. It's whether you're being mentioned on the right AI platforms, across the right model versions, in the languages your actual buyers are using, at the stage of their journey where decisions get made.

Most platforms answer the first question. The brands pulling ahead in AI search are the ones answering all of them.

Audit your current tool against those five dimensions. If it can't cover them cleanly, you're not tracking AI search optimization. You're watching a partial feed and assuming it's the full picture.

FAQ

Q1: What's the difference between AI search optimization and traditional SEO?

Traditional SEO focuses on ranking pages within a list of links on Google, prioritizing keywords and backlinks. AI search optimization (GEO) focuses on becoming a cited source within AI-generated summaries, which means prioritizing content extractability, third-party authority signals, and structured data that AI models can parse and reproduce accurately.

Q2: Do I need to track every AI platform, or just ChatGPT?

ChatGPT leads with roughly 80% of AI search visits, but Perplexity and Google Gemini have meaningfully different retrieval behaviors and user demographics. Perplexity skews heavily toward high-income decision-makers. Google AIO has the most direct impact on organic CTR. Tracking multiple engines is the only way to identify where competitors are being cited while you aren't.

Q3: How often do AI model versions change, and does it affect brand visibility?

Major model updates happen every few months, and each one incorporates a new training data snapshot. When a model's knowledge cut-off shifts, the citation weight of older content often decays. A brand that built strong third-party coverage in 2023 may find that signal fading in models trained on 2024-2025 data. Version-specific tracking lets you catch that decay early.

Q4: Can one platform track Google AI Overview and conversational AI search at the same time?

Yes. Advanced GEO platforms like Topify integrate tracking for both conversational AI engines and Google AI Overviews within a single dashboard. Given that AIO citation produces a 35% organic CTR lift and a 91% paid CTR lift compared to non-cited brands on the same page, tracking both in parallel is worth the setup.