Your technical white paper ranks #1 on Google for a high-intent query. A buyer types the exact same question into ChatGPT. The AI recommends three competitors. Your page doesn't appear.

That's not an SEO failure. That's an AI citation gap.

Search rankings and AI citations are now two separate systems. What gets you to the top of Google doesn't guarantee you'll be sourced by ChatGPT, Perplexity, or Gemini. And in a world where over 50% of queries are satisfied directly within the AI interface, the citation has become the new click.

AI citation tracking analytics is the discipline built to close that gap.

What AI Citation Tracking Measures (It's Not the Same as Brand Mentions)

Most brands track whether AI mentions their name. That's the wrong metric.

There's a meaningful difference between being "mentioned" and being "cited." A mention means your brand name appears somewhere in the AI's generated text. A citation means the AI used your content as an evidentiary source, typically with a clickable link or footnote pointing directly to your domain.

These two signals tell you completely different things:

| Signal | What It Means | Strategic Value |
|---|---|---|
| Brand Mention | Your name appeared in the AI's narrative | Awareness, consideration shortlist |
| AI Citation | Your URL was used as a source | Technical authority, referral traffic potential |

Here's the thing that catches most teams off guard: brands are three times more likely to be cited as a source than to be both cited and mentioned as a recommendation. You can power an AI's answer without ever getting credit for it.

Researchers have formalized this as the "Mention-Source Divide." The AI uses your data. It recommends your competitor. Organizations that achieve both signals simultaneously are 40% more likely to resurface in consecutive AI sessions, creating a compounding visibility advantage over time.
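To make the mention/citation split concrete, here's a minimal sketch of how a single AI answer could be bucketed. The `AIResponse` shape, the function names, and the example domains are all assumptions for illustration; real platforms return citations in their own formats, and a plain substring match on the brand name is a simplification.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass
class AIResponse:
    """One AI answer: generated text plus the URLs it cited (hypothetical shape)."""
    text: str
    cited_urls: list[str]


def _host_matches(url: str, domain: str) -> bool:
    """True if the URL's host is the domain itself or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    domain = domain.lower()
    return host == domain or host.endswith("." + domain)


def classify_signal(response: AIResponse, brand: str, domain: str) -> str:
    """Return 'both', 'citation_only', 'mention_only', or 'none'."""
    # Mention: the brand name appears in the narrative (naive substring check).
    mentioned = brand.lower() in response.text.lower()
    # Citation: at least one cited URL points at the brand's domain.
    cited = any(_host_matches(url, domain) for url in response.cited_urls)
    if mentioned and cited:
        return "both"
    if cited:
        return "citation_only"
    if mentioned:
        return "mention_only"
    return "none"
```

A response that recommends a competitor while linking to your report would come back as `citation_only`, which is exactly the "you powered the answer but didn't get the credit" case described above.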

How AI Platforms Decide Which Sources to Cite

AI citation selection isn't random. It's risk minimization at scale.

Most production-grade AI search systems use Retrieval-Augmented Generation (RAG): they query a live index, retrieve relevant passages, and ground their generated answer in those specific texts. In this environment, the primary ranking factor is token efficiency, which is the density of factual information per unit of text.

AI engines frequently skip the #1 Google result if the page is cluttered with introductory fluff or lacks clear structure. Instead, they cite a lower-ranking page that offers a direct definition, a concise table, or what researchers call an "atomic fact," meaning a self-contained sentence making a single, verifiable claim.

The data backs this up:

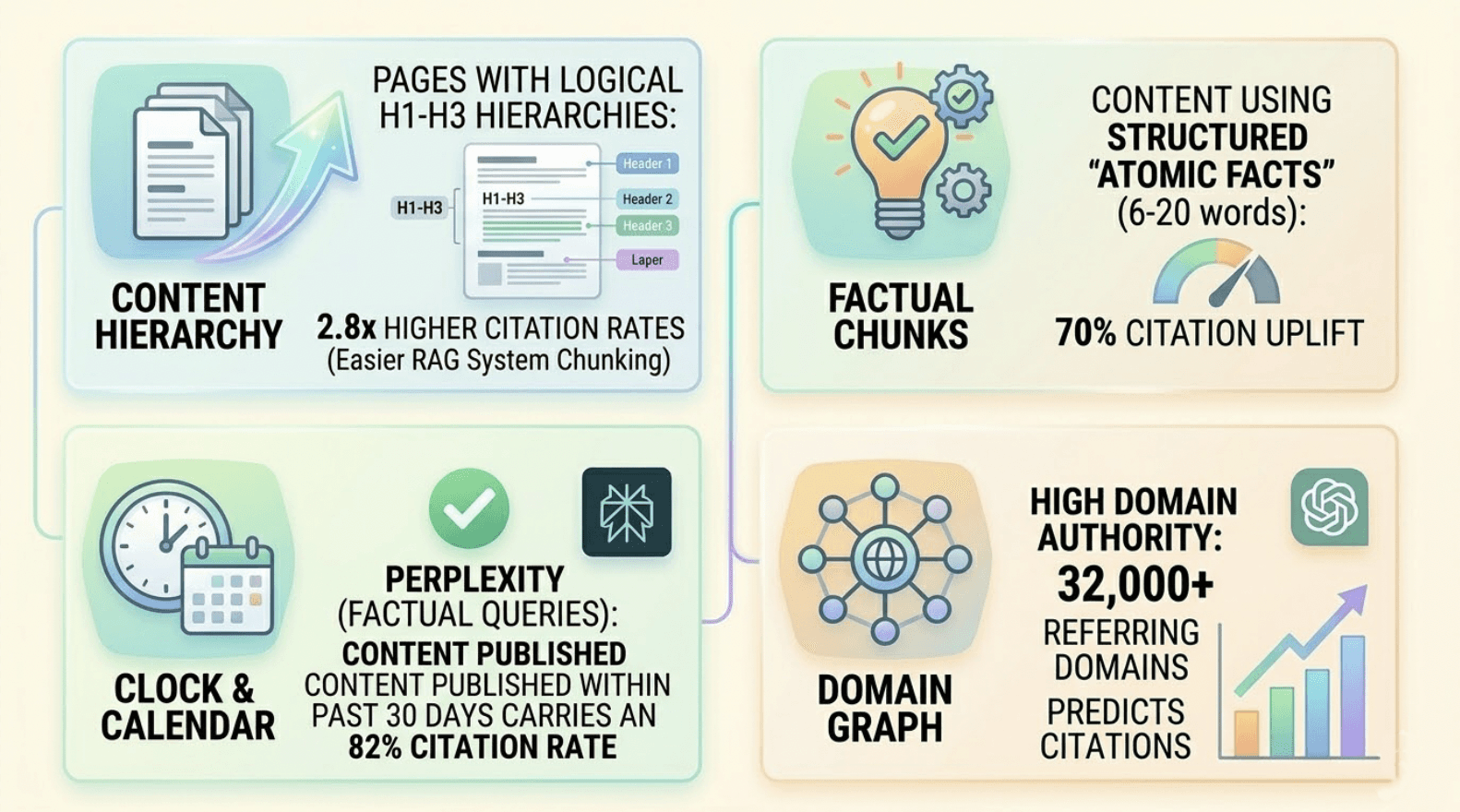

Pages with logical H1-H3 heading hierarchies see 2.8x higher citation rates due to easier chunking by RAG systems

Content using structured "atomic facts" (6-20 words) receives a 70% citation uplift

On Perplexity, content published within the past 30 days carries an 82% citation rate for factual queries

High domain authority (benchmark: 32,000+ referring domains) is a significant predictor of ChatGPT citations
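If you want a rough way to audit a page for "atomic fact" candidates, the sketch below flags sentences that fall in the 6-20 word range cited above. It's only a length heuristic under simple assumptions: the sentence splitter is naive, the function names are made up for this example, and word count alone can't tell you whether a sentence actually makes a single, verifiable claim.

```python
import re

# Range taken from the "6-20 words" figure quoted above.
ATOMIC_MIN_WORDS = 6
ATOMIC_MAX_WORDS = 20


def split_sentences(text: str) -> list[str]:
    """Naive splitter: break after '.', '!' or '?' followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def atomic_fact_candidates(text: str) -> list[str]:
    """Return sentences whose word count falls inside the atomic-fact range."""
    return [
        s for s in split_sentences(text)
        if ATOMIC_MIN_WORDS <= len(s.split()) <= ATOMIC_MAX_WORDS
    ]


def atomic_density(text: str) -> float:
    """Share of sentences (0.0 to 1.0) that qualify as candidates."""
    sentences = split_sentences(text)
    if not sentences:
        return 0.0
    return len(atomic_fact_candidates(text)) / len(sentences)
```

A low density on a key page is a prompt for a human edit pass, not a verdict: the flagged sentences still need to be checked for whether they state something specific and verifiable.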

Platform behavior also varies considerably. ChatGPT cites only 1.5 to 7.9 sources per response on average and heavily favors encyclopedic authorities (Wikipedia accounts for 47.9% of its top citations). Perplexity operates differently, often referencing 21+ sources per response with a strong bias toward recent and community-validated content. Google AI Overviews maintains a 93.6% overlap with traditional top-10 results but skews toward Google's own ecosystem properties.

One SEO strategy can't cover all three. That's why cross-platform citation tracking matters.

5 Signs Your Brand Has an AI Citation Gap

You don't always need a dashboard to know something is wrong. These patterns are often visible before any formal audit.

Competitive displacement in evaluative queries. When an AI is asked to "compare the top solutions in your category," it cites competitor domains even though your brand ranks higher in traditional search.

Ranking inconsistency across search layers. Your content sits in the top 1-3 positions on Google, but the AI Overview or ChatGPT Search result for the same keyword ignores your domain entirely.

Third-party attribution bias. The AI references data or a framework your brand originated, but credits a secondary publisher, such as a news outlet, a review site like G2, or a community like Reddit, because that publisher scores higher in the model's citability index.

The mention-only anomaly. Your brand name appears in a synthesized recommendation list, but there's no clickable link pointing back to your site. This usually suggests your brand is in the training data, but your domain isn't treated as an authoritative RAG target.

Recurring competitor citations for niche topics. A competitor is repeatedly cited for a specific subtopic where you have exhaustive coverage. The AI has mapped them as the topical authority, not you.

Any one of these signals warrants a structured audit. All five together indicate a systemic gap.

How to Measure AI Citation Tracking Analytics

Measuring citation performance requires a shift from tracking keywords to tracking prompts and their synthesized outputs.

The Core Metrics

Three KPIs form the foundation of any serious citation analytics program:

Citation Frequency: The percentage of target prompts where your domain or specific URL is cited. A citation frequency above 30% for core category prompts is generally considered a benchmark for market leadership.

Domain Citation Share of Voice (C-SOV): Your brand's total citations as a percentage of all citations granted across a defined competitor set for the same prompt library.

C-SOV = (Brand Citations / Total Citations in Category) × 100

Platform Coverage: The degree to which your brand maintains citation presence across ChatGPT, Perplexity, and Gemini simultaneously. Only 11% of domains appear across both ChatGPT and Perplexity for identical queries, making cross-platform consistency a rare and meaningful signal.
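As a rough illustration, the three KPIs can be computed from a simple table of audit results. Everything about the data shape here is an assumption: the nested `prompt -> platform -> cited domains` dictionary, the function names, and the choice to count each cited domain in each answer as one citation. Note that `competitor_set` should include your own domain, since C-SOV is your share of the whole set.

```python
from collections import Counter

# Hypothetical audit results: prompt -> platform -> list of cited domains.
Results = dict[str, dict[str, list[str]]]


def citation_frequency(results: Results, domain: str) -> float:
    """Percent of prompts where `domain` is cited on at least one platform."""
    if not results:
        return 0.0
    hits = sum(
        any(domain in cited for cited in by_platform.values())
        for by_platform in results.values()
    )
    return 100 * hits / len(results)


def citation_share_of_voice(
    results: Results, domain: str, competitor_set: set[str]
) -> float:
    """C-SOV = (brand citations / total citations in the competitor set) x 100."""
    counts = Counter(
        d
        for by_platform in results.values()
        for cited in by_platform.values()
        for d in cited
        if d in competitor_set  # ignore domains outside the defined set
    )
    total = sum(counts.values())
    return 100 * counts[domain] / total if total else 0.0


def platform_coverage(results: Results, domain: str) -> dict[str, float]:
    """Per-platform percent of prompts whose answer cites `domain`."""
    asked: Counter = Counter()
    cited_on: Counter = Counter()
    for by_platform in results.values():
        for platform, cited in by_platform.items():
            asked[platform] += 1
            cited_on[platform] += domain in cited
    return {p: 100 * cited_on[p] / asked[p] for p in asked}
```

Keeping the three functions separate is deliberate: citation frequency is prompt-level, C-SOV is citation-level, and platform coverage is per-platform, so they can move in different directions for the same brand.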

Manual Tracking vs. Automation

Manual audits, running 20-30 prompts across platforms, are useful for establishing a baseline. But they don't scale.

Manual tracking suffers from "temperature variance," where the same prompt produces different citations in different sessions. It also can't surface what researchers call "Dark Queries," the hidden intents that trigger AI answers for your category but that you haven't thought to test.

Automation enables "probabilistic synthetic probing": running hundreds of prompts across multiple models and regions to calculate a stable probability of citation. This is the difference between a one-off data point and a defensible trend line.
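One way to see why run count matters is to put a confidence interval around the observed citation rate. The sketch below uses the Wilson score interval, a standard statistical method chosen here for illustration; the article doesn't say which method any particular tool uses, and the function name and 95% default are assumptions.

```python
import math


def wilson_interval(
    cited_runs: int, total_runs: int, z: float = 1.96
) -> tuple[float, float]:
    """Wilson score interval for the probability that a prompt yields a citation.

    cited_runs: number of runs in which the domain was cited
    total_runs: number of times the prompt was run
    z: normal quantile (1.96 corresponds to roughly 95% confidence)
    """
    if total_runs <= 0:
        raise ValueError("total_runs must be positive")
    p = cited_runs / total_runs
    denom = 1 + z**2 / total_runs
    center = (p + z**2 / (2 * total_runs)) / denom
    half = (
        z
        * math.sqrt(p * (1 - p) / total_runs + z**2 / (4 * total_runs**2))
        / denom
    )
    # Clamp to [0, 1] to guard against floating-point overshoot at the edges.
    return max(0.0, center - half), min(1.0, center + half)
```

Comparing `wilson_interval(6, 20)` with `wilson_interval(150, 500)` shows the point: both observe a 30% citation rate, but the 20-run manual audit leaves a much wider band of plausible true rates than the 500-run automated probe.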

Topify was built specifically for this layer of measurement. Its Source Analysis feature identifies which URLs from your domain are being picked up by AI crawlers, maps competitor citation share against your prompt library, and automatically clusters queries where AI Overviews are prominent. The Basic plan ($99/mo) covers 100 prompts and 9,000 AI answer analyses across ChatGPT, Perplexity, and AI Overviews. The Pro plan ($199/mo) scales to 250 prompts and 22,500 analyses, with the Enterprise tier (from $499/mo) offering custom configurations for larger organizations.

The jump from manual to automated isn't just about convenience. It's about having data stable enough to build strategy on.

Common Mistakes in AI Citation Tracking Analytics

Even teams that understand the importance of citation tracking tend to fall into predictable traps.

Tracking mentions instead of citations. Only 28% of brands achieve both mentions and citations simultaneously. Focusing only on name-drops generates brand awareness data while missing the traffic-driving potential of actual citation links.

The single-platform trap. Optimizing exclusively for ChatGPT is a strategic error. Given that only 11% of cited domains overlap between ChatGPT and Perplexity for identical queries, visibility on one platform does not transfer to the other.

No baseline, no benchmark. Without a starting point, teams can't measure what's actually working. "Citation drift," the natural volatility of AI responses over time, is only identifiable if historical data exists to compare against.

Treating citation tracking as a one-time audit. 76% of content cited in ChatGPT was updated within the prior month. Freshness is a primary driver of citations in high-intent queries. Static snapshots decay fast.

Ignoring competitor citation trends. Your own citation share is only half the picture. If a competitor's share is growing for prompts in your category, that's an early warning signal worth catching before it compounds.
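The baseline and drift points above boil down to comparing two snapshots. Here's a minimal sketch, assuming you store citation frequency (in percent) per prompt cluster for each audit. The function name, the cluster labels, and the 10-point threshold are illustrative choices, not established benchmarks.

```python
def detect_drift(
    baseline: dict[str, float],
    current: dict[str, float],
    threshold: float = 10.0,
) -> dict[str, float]:
    """Return clusters whose citation frequency moved by at least `threshold` points.

    Values are percentage-point changes (current minus baseline);
    negative numbers mean citation share was lost.
    """
    drift: dict[str, float] = {}
    for cluster, base_rate in baseline.items():
        if cluster not in current:
            continue  # no comparable data for this cluster in the new snapshot
        delta = current[cluster] - base_rate
        if abs(delta) >= threshold:
            drift[cluster] = delta
    return drift
```

Running the same function on a competitor's snapshots gives you the early warning described in the last point: a cluster where their delta is positive while yours is flat or negative.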

A Working Strategy for AI Citation Tracking Analytics

A four-step cycle turns citation data into an actionable growth channel.

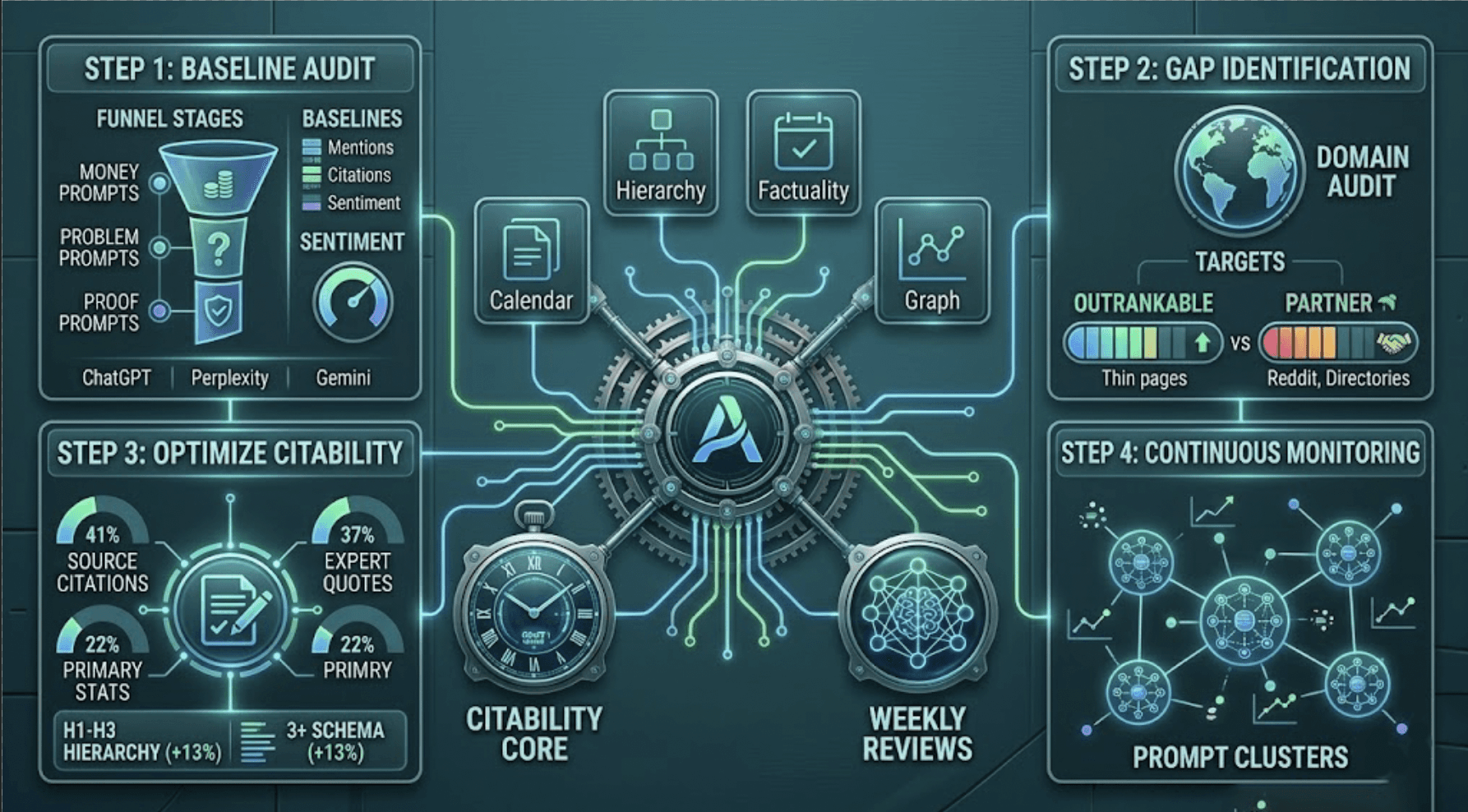

Step 1: Baseline audit. Build a prompt portfolio categorized by funnel stage: "money prompts" (best solutions in your category), "problem prompts" (how to solve the issue your product addresses), and "proof prompts" (compliance, security, use cases). Record baseline mention rates, citation rates, and sentiment distribution across ChatGPT, Perplexity, and Gemini.

Step 2: Citation gap identification. Analyze which domains are being cited for your target prompts. Split them into "outrankable" targets (thin competitor pages with weak structure) and "partner" targets (directories or communities like Reddit that are harder to displace but can be contributed to). The goal is understanding why the AI trusts those sources more.

Step 3: Optimize for citability. Research from Princeton's GEO study identified three content interventions that significantly boost citation probability: adding citations to other authoritative sources within your content (+41% citation uplift), incorporating specific expert quotes (+37%), and adding primary statistics (+22%). Technical improvements also matter: a strict H1-H3 hierarchy and 3+ types of schema markup increase citation likelihood by 13%.

Step 4: Continuous monitoring. Weekly reviews of prompt clusters allow teams to detect citation drift and respond to new competitor entries or platform sourcing changes. AI models update frequently; a citation position held today isn't guaranteed next month.
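Steps 1 and 2 can be sketched with a small data structure: tag each prompt with its funnel stage, record which domains were cited, then count who shows up on the prompts where you don't. The `PromptResult` class, the stage labels as strings, and the example domains are assumptions made for this illustration.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class PromptResult:
    """One audited prompt (hypothetical shape)."""
    prompt: str
    stage: str  # "money", "problem", or "proof"
    cited_domains: list[str] = field(default_factory=list)


def citation_gaps(
    results: list[PromptResult], own_domain: str
) -> dict[str, Counter]:
    """For prompts that do not cite `own_domain`, count who is cited instead.

    Returns a Counter of competing domains for each funnel stage.
    """
    gaps: dict[str, Counter] = {}
    for r in results:
        if own_domain in r.cited_domains:
            continue  # not a gap: we are already cited on this prompt
        # set() so a domain cited twice in one answer counts once per prompt
        gaps.setdefault(r.stage, Counter()).update(set(r.cited_domains))
    return gaps
```

Calling `gaps["money"].most_common(5)` then gives a shortlist of domains to review by hand and sort into "outrankable" and "partner" targets; that judgment call is not something the counts alone can make.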

Topify's one-click execution layer connects this strategy directly to action. Once Source Analysis identifies which content is underperforming, the platform's AI agent can propose and deploy targeted GEO updates without manual workflows.

Best Tools for AI Citation Tracking Analytics

The market for AI brand visibility software has matured enough that teams now have meaningful choices across budget and use case.

| Platform | Key Strength | Best For |
|---|---|---|
| Topify | Source Analysis, 250+ prompt tracking, GSC integration, competitor gap analysis, one-click execution | SaaS and e-commerce brands running structured GEO programs |
| Profound AI | 6.8M+ citation dataset, enterprise brand alignment | Fortune 500 companies needing large-scale compliance tracking |
| Otterly AI | Weekly insights, 400+ prompt monitoring, affordable entry point | SMBs and agencies starting out |
| SEMrush AIO Toolkit | Traditional SEO integration, mention-source divide reports | Existing SEMrush users expanding to AI visibility |
| SE Ranking | AIO tracker, Google AI Overview focus | SEO teams prioritizing AI Overview visibility |

Among the AI search visibility software options, Topify is differentiated by the combination of Source Analysis and competitor benchmarking in a single platform. Where most tools surface citation data, Topify maps the gap between where you are and where competitors are being cited, then connects that insight to execution.

Pricing scales from the Basic tier at $99/mo for teams beginning their AI citation tracking program, to Pro at $199/mo for more comprehensive prompt libraries, to Enterprise from $499/mo for dedicated account management and custom configurations.

For teams that need managed execution alongside measurement, Topify's service plans range from $3,999/mo (Standard) to $5,999/mo (Enterprise), covering prompt strategy, content production, and monthly reporting cycles.

Conclusion

The shift from search engines to answer engines hasn't just changed where buyers find information. It's changed what determines whether your brand is part of the answer at all.

AI citation tracking analytics is how you measure that. Citation frequency, domain citation share, and cross-platform coverage give you a data-driven picture of your brand's authority in the AI ecosystem, separate from and often divergent from your traditional search rankings.

The brands that will hold ground in the next wave of AI-referred traffic aren't necessarily the ones with the most content or the highest domain authority. They're the ones who know exactly where they're being cited, where they're being displaced, and what to do about it.

With AI-referred traffic converting at rates up to 4.4 times higher than traditional organic search, measurement is no longer optional. It's the starting point.

FAQ

What is AI citation tracking analytics? It's the systematic measurement of how often and where AI platforms (ChatGPT, Perplexity, Gemini) link to and reference your content as a source in their generated answers, distinct from simply tracking brand name mentions.

How does AI citation tracking analytics work? It involves running systematic sets of prompts across multiple AI models, extracting the cited URLs from each response, and analyzing them for citation frequency, share of voice, and competitive positioning. Automated platforms like Topify run hundreds of prompt variations to generate statistically stable visibility scores.

How do you improve your results in AI citation tracking analytics? Focus on content "citability": add references to authoritative sources within your content (+41% citation uplift), incorporate specific statistics (+22%) and expert quotes (+37%), maintain a clean H1-H3 heading hierarchy, and keep content fresh. 76% of content cited in ChatGPT was updated within the prior month.

What are some examples of AI citation tracking analytics? Typical examples include measuring your Citation Share of Voice (C-SOV) across a category such as CRM, tracking whether your brand achieves both mentions and citations on the same prompts, and identifying "Dark Queries," the untested high-intent prompts in your category where your domain has zero citation presence.

Checklist for AI citation tracking analytics:

Define a prompt portfolio of 100+ queries across awareness, consideration, and decision stages

Audit baseline citation and mention rates across ChatGPT, Perplexity, and Gemini

Calculate your Domain Citation Share of Voice against 3-5 direct competitors

Identify competitor citation gaps and "source target" opportunities

Optimize content structure (schema markup, H1-H3) and factual density

Implement automated monitoring to track weekly citation trends

What does AI citation tracking analytics cost? Basic prompt monitoring tools start around $29-$99/mo. Enterprise-grade platforms offering cross-platform audits and statistical modeling typically range from $499-$2,000+/mo. Topify's tiers run from $99/mo (Basic, 100 prompts) to $199/mo (Pro, 250 prompts) to $499+/mo (Enterprise), with managed service plans available from $3,999/mo for teams that want full execution alongside measurement.