Your domain authority is solid. Your keyword rankings haven't moved in months. But someone on your team just asked Perplexity for the best tool in your category, and your brand wasn't in the answer. Not mentioned once.

Traditional rank tracking can't flag that. It wasn't built to. And the gap between what your SEO dashboard shows and what AI systems are actually saying about you is widening every week.

What Is AI Answer Tracking (And Why It's Not the Same as SEO Monitoring)

AI answer tracking is the systematic process of monitoring how frequently, in what context, and with what sentiment a brand appears in the synthesized responses of generative AI systems like ChatGPT, Perplexity, and Gemini.

That's a meaningful distinction from traditional SEO monitoring. In a standard SERP, you occupy a position in a list, and users still decide whether to click. In an AI answer, the model speaks on behalf of your category: it selects sources, summarizes them, and delivers a recommendation. 68% of users now prefer a single AI-curated response over a list of links, and in that response the underlying ranking is invisible to them. What they see is the conclusion.

That's the strategic shift. AI answer tracking is less about position in a list, more about whether you're included in the conclusion at all.

There's also a brand safety dimension most teams overlook. When a traditional search engine ranks your page, your content speaks for itself. When an LLM references your brand, it paraphrases. It reconstructs your value proposition from dozens of sources across the web. If those sources are inconsistent or outdated, the model may describe your product inaccurately. AI answer tracking gives you the data to catch that before it reaches buyers.

How AI Answer Tracking Strategy Works: The 3-Layer Model

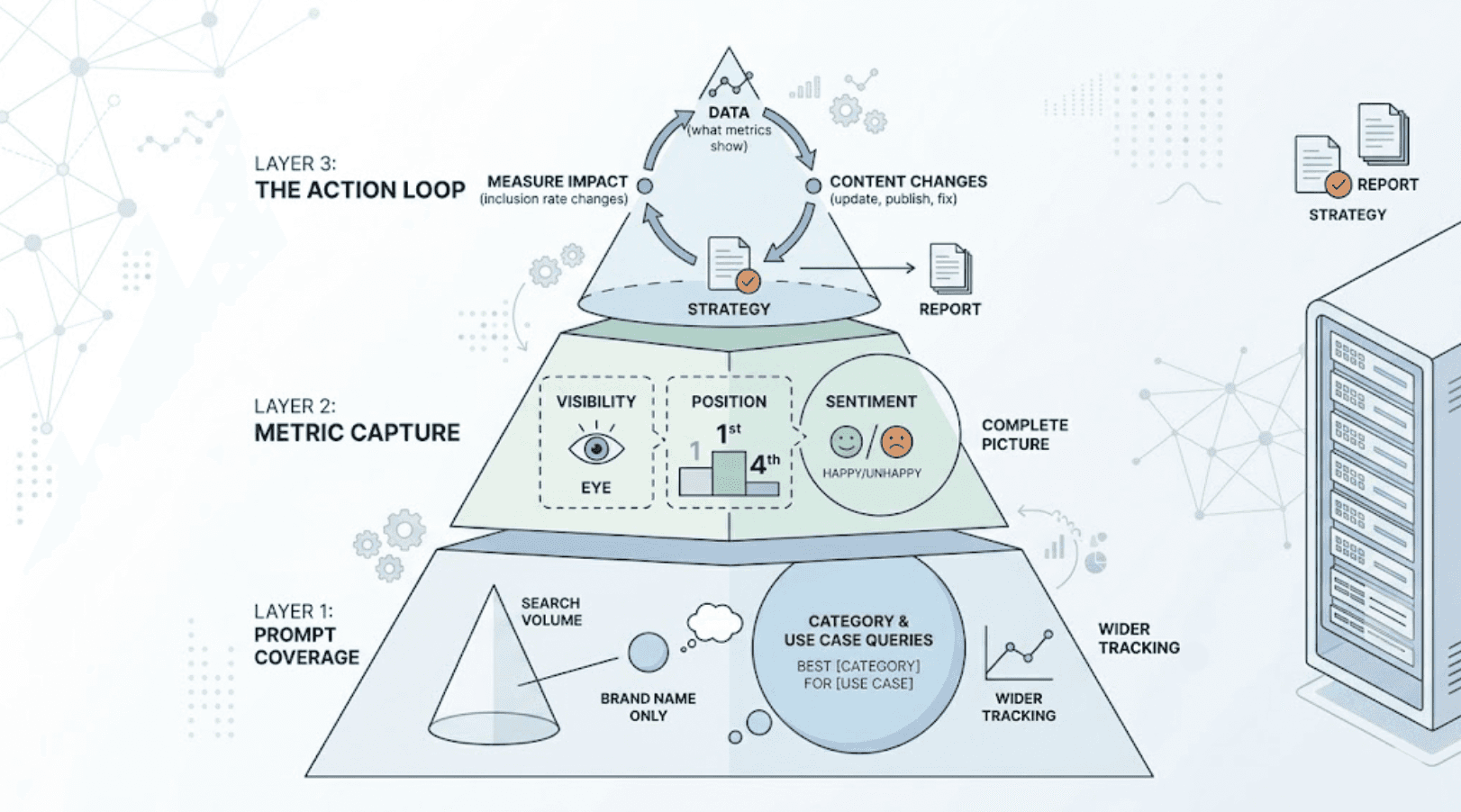

A functional AI answer tracking strategy runs on three layers. Skip any one of them and you're left with partial data that won't tell you what to do next.

Layer 1: Prompt Coverage. Which queries are you tracking? Most brands start by monitoring their own brand name. That's too narrow. The high-value queries are category-level: "what's the best [your category] tool for [use case]." These are the prompts where buyers form opinions before they've heard of you.

Layer 2: Metric Capture. Once you know which prompts to track, you need to measure across at least three dimensions: visibility (are you mentioned?), position (are you the first recommendation or the fourth?), and sentiment (is the AI characterizing your brand accurately and positively?). Tracking only one of these gives you an incomplete picture.

Layer 3: The Action Loop. Data without a feedback mechanism is a report, not a strategy. The action loop connects what the data shows to specific content changes: updating an existing page, publishing a new "answer-first" content block, or fixing inconsistent brand descriptions across third-party sources. The loop then measures whether those changes moved your inclusion rate.

This is how AI answer tracking moves from monitoring to optimization.
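To make Layer 2 concrete, here is a minimal Python sketch of capturing visibility and position from a single AI answer. It assumes you already have the answer text as a string; the brand names (Acme, Globex, Initech) and the `capture_mention` helper are hypothetical, and "position" is approximated as the order of first mention among the brands you track. Sentiment is left out on purpose, since it needs a classifier or human review rather than string matching.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class MentionResult:
    mentioned: bool          # visibility: does the brand appear at all?
    position: Optional[int]  # 1-based rank by first mention among tracked brands


def capture_mention(answer: str, brand: str, tracked_brands: list[str]) -> MentionResult:
    """Find each tracked brand's first mention and rank the target brand among them.

    Assumption: order of first mention is a reasonable proxy for "position".
    """
    first_seen = {}
    for name in tracked_brands:
        match = re.search(r"\b" + re.escape(name) + r"\b", answer, flags=re.IGNORECASE)
        if match:
            first_seen[name] = match.start()

    if brand not in first_seen:
        return MentionResult(mentioned=False, position=None)

    ordered = sorted(first_seen, key=first_seen.get)
    return MentionResult(mentioned=True, position=ordered.index(brand) + 1)


# Hypothetical answer text and brand names, for illustration only.
sample_answer = (
    "For small teams, Acme is a common pick. "
    "Globex and Initech are also worth a look."
)
result = capture_mention(sample_answer, "Globex", ["Acme", "Globex", "Initech"])
print(result)
```

Running the same function over every prompt in your set, on a fixed schedule, produces the raw data the action loop needs.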

Perplexity AI Rankings Aren't Optional Anymore: Here's Why They Need Their Own Tracking

Most teams, when they start tracking AI visibility, focus on ChatGPT. That's understandable. It's the most recognized platform. But it's also the wrong place to concentrate all your attention.

Perplexity reached over 780 million monthly queries by mid-2025, and its user base skews toward high-intent, technically sophisticated users who are often further along in their decision process. When those users ask Perplexity for a recommendation, they're often days or hours from making a purchase.

Perplexity's citation mechanism also works differently from ChatGPT's. It crawls the web in real time and evaluates approximately 59 ranking signals to determine which sources to cite. Domain authority matters: sources with 32,000+ referring domains see citation rates nearly double those of lower-authority domains. Freshness matters: content updated for 2026 or showing recent timestamps earns preferential treatment. And Perplexity shows a strong preference for "Top 10" style lists that rank well on Google, using them as a proxy for trusted comparative information.

To monitor Perplexity AI rankings effectively, you need a platform that tracks citations specifically, not just brand mentions. Perplexity answers typically compress citations to 2 to 7 sources per response. Appearing in that set consistently is a different challenge from appearing in a ChatGPT summary. To track Perplexity AI rankings alongside ChatGPT and Gemini from a single dashboard, Topify covers all three platforms plus DeepSeek and others, with position tracking that shows whether you're the first citation or the last.
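If you want to sanity-check citation tracking by hand, the logic is simple enough to sketch in Python. The example below assumes you have copied the source links from one Perplexity answer into a list; the URLs and the `citation_position` and `citation_share` helpers are hypothetical, and subdomains are counted as part of your domain, which is a choice you may want to change.

```python
from typing import Optional
from urllib.parse import urlparse


def _host(url: str) -> str:
    """Lowercased hostname with a leading 'www.' stripped."""
    return (urlparse(url).hostname or "").lower().removeprefix("www.")


def _matches(host: str, domain: str) -> bool:
    # Assumption: subdomains (docs.example.com) count toward example.com.
    return host == domain or host.endswith("." + domain)


def citation_position(cited_urls: list[str], domain: str) -> Optional[int]:
    """1-based position of the first citation pointing at `domain`, or None."""
    target = domain.lower().removeprefix("www.")
    for rank, url in enumerate(cited_urls, start=1):
        if _matches(_host(url), target):
            return rank
    return None


def citation_share(cited_urls: list[str], domain: str) -> float:
    """Percentage of cited links in one answer that point at `domain`."""
    if not cited_urls:
        return 0.0
    target = domain.lower().removeprefix("www.")
    hits = sum(1 for url in cited_urls if _matches(_host(url), target))
    return 100.0 * hits / len(cited_urls)


# Hypothetical citation list from a single answer.
cited = [
    "https://www.g2.com/categories/some-category",
    "https://example.com/blog/top-10-tools",
    "https://reddit.com/r/some_subreddit",
    "https://docs.example.com/guide",
]
print(citation_position(cited, "example.com"))
print(citation_share(cited, "example.com"))
```

A position of `None` across most of your prompt set is the signal that you are being mentioned, if at all, without being linked.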

5 Metrics That Actually Tell You If Your AI Answer Tracking Strategy Is Working

Not all metrics are equally actionable. Here's the set that experienced practitioners use to evaluate whether an AI answer tracking strategy is producing results.

Metric | What It Measures | Why It Matters |

|---|---|---|

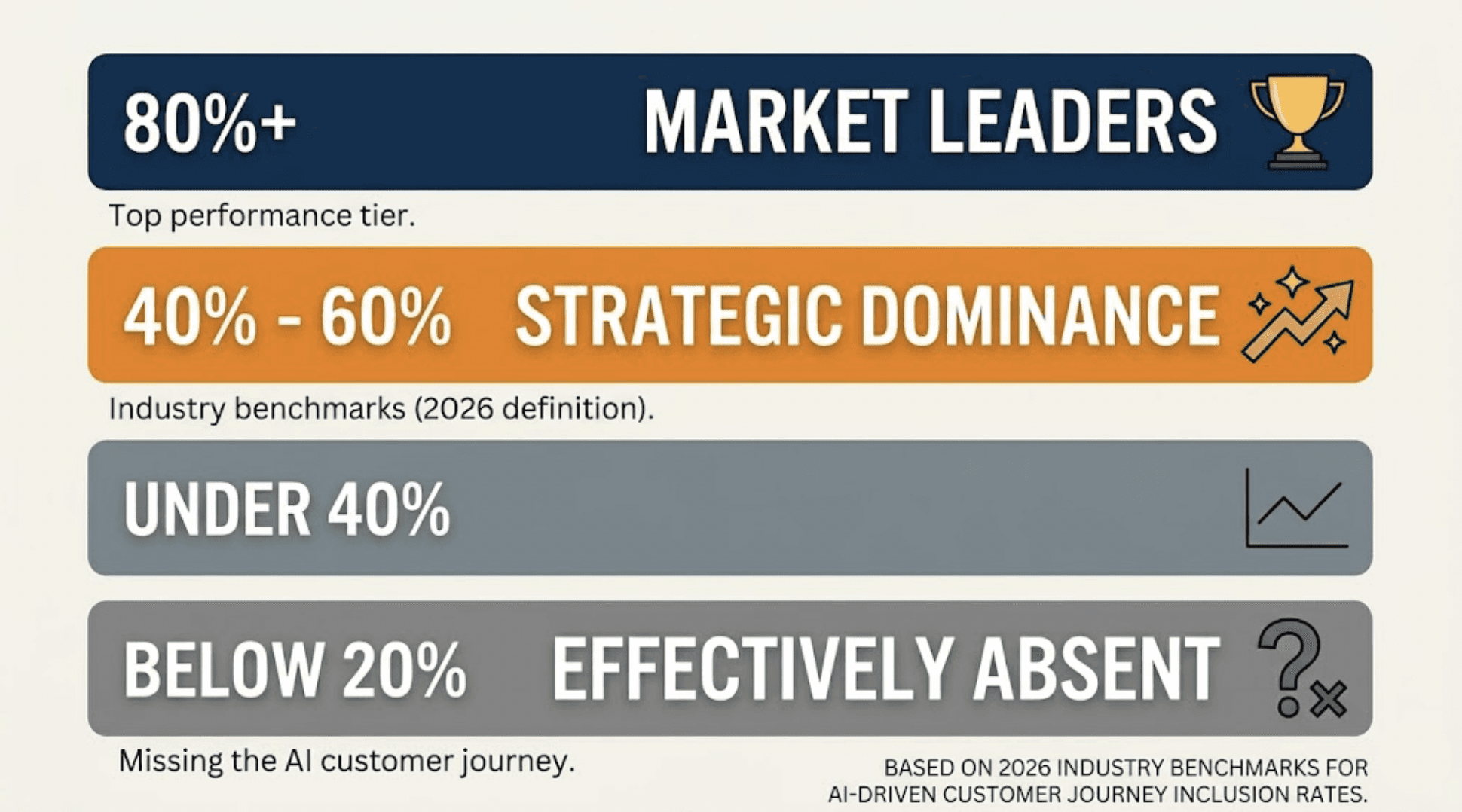

Answer Inclusion Rate | % of relevant prompts where your brand appears | Below 20% signals functional invisibility in AI-driven discovery |

Position | Where you rank within the AI answer (first mention vs. later) | First-mentioned brands receive disproportionately more consideration |

Sentiment Score | Whether the AI characterizes your brand positively, neutrally, or negatively | Detects narrative drift and inherited negative bias from training data |

Citation Share | % of clickable source links pointing to your domain | Measures authoritative presence, not just passing mention |

Model Consensus Score | Whether your visibility is consistent across ChatGPT, Perplexity, Gemini | Fragmented signals indicate inconsistent authority across the web |

Industry benchmarks from 2026 define strategic dominance as an inclusion rate between 40% and 60%, with market leaders targeting 80%. If you're below 20%, you're effectively absent from the AI-driven customer journey.
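The arithmetic behind inclusion rate is worth seeing once. This Python sketch assumes you have one true/false result per tracked prompt (did the brand appear or not) and maps the rate onto the thresholds quoted above. The function names are hypothetical, and the label for the 20% to 40% range is an assumption, since the benchmarks above don't name that band.

```python
def inclusion_rate(appearances: list[bool]) -> float:
    """Percentage of tracked prompts in which the brand appeared."""
    if not appearances:
        raise ValueError("need at least one tracked prompt")
    return 100.0 * sum(appearances) / len(appearances)


def benchmark_band(rate_pct: float) -> str:
    """Map an inclusion rate onto the benchmark ranges quoted in the article."""
    if rate_pct < 20:
        return "effectively absent"
    if rate_pct < 40:
        # Assumed label: the article does not name the 20-40% range.
        return "present, below the dominance range"
    if rate_pct <= 60:
        return "strategic dominance range"
    return "above the dominance range (leaders target 80%)"


# Hypothetical results for five category-level prompts.
results = [True, False, False, True, False]
rate = inclusion_rate(results)
print(rate, benchmark_band(rate))
```

With a real prompt set of 30 to 100 queries, the same calculation gives you a baseline you can compare week over week.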

On the conversion side, the ROI case for prioritizing these metrics is concrete. Users referred from AI platforms visit an average of 13 pages per session versus 11.8 for Google, and show a 4.4x higher conversion rate than traditional organic traffic. That's not a marginal gain.

The metric most teams underinvest in is model consensus. A brand can appear frequently in ChatGPT and almost never in Perplexity, which points to a specific technical or content gap rather than a general visibility problem. Tracking consensus across platforms is how you find out which gap to close first.
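One rough way to put a number on consensus is to compare inclusion rates per platform and look at the gap between the strongest and weakest. The Python sketch below does that. It is an assumed proxy, not a standard formula: this article doesn't define how a Model Consensus Score is calculated, and the `consensus_report` helper and the sample rates are hypothetical.

```python
def consensus_report(rates_by_platform: dict[str, float]) -> dict:
    """Summarize how evenly a brand's inclusion rate is spread across platforms.

    Assumption: the spread (max minus min, in percentage points) is used as a
    simple stand-in for a consensus score. Smaller spread means more consensus.
    """
    if not rates_by_platform:
        raise ValueError("need at least one platform")
    weakest = min(rates_by_platform, key=rates_by_platform.get)
    strongest = max(rates_by_platform, key=rates_by_platform.get)
    return {
        "strongest": strongest,
        "weakest": weakest,
        "spread_points": rates_by_platform[strongest] - rates_by_platform[weakest],
    }


# Hypothetical per-platform inclusion rates, in percent.
rates = {"ChatGPT": 60.0, "Perplexity": 5.0, "Gemini": 35.0}
report = consensus_report(rates)
print(report)
```

In this example the weakest platform is the one to investigate first, because a large spread suggests a platform-specific gap rather than a general visibility problem.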

4 Common Mistakes That Break Your AI Answer Tracking Strategy Before It Starts

Understanding the mistakes is often faster than deriving the strategy from scratch. These four errors account for most of the underperformance brands see in their early AI tracking efforts.

Mistake 1: Tracking only your brand name, not category prompts. The queries that convert buyers aren't usually searches for your brand. They're searches like "what should I use for X" or "best tool for Y." If your tracking prompt set doesn't include these, you're measuring awareness, not discovery.

Mistake 2: Single-platform coverage. Only 16% of brands currently track AI search performance at all, and most of those track only one platform. Given that Perplexity, ChatGPT, and Gemini each have distinct citation mechanisms, a brand can have 60% inclusion on ChatGPT and near-zero on Perplexity, and miss the problem entirely by looking at only one source.

Mistake 3: Inconsistent entity identity across platforms. AI systems infer what a brand is by reading descriptions from across the web, including LinkedIn, G2, Reddit, press releases, and your own site. If those descriptions are inconsistent, for instance "AI marketing platform" in one place and "social media tool" in another, the model's confidence in the brand drops and it defaults to omitting you. This is one of the most common and least obvious causes of low inclusion rates.

Mistake 4: Technical extraction barriers. AI crawlers like PerplexityBot and GPTBot have specific requirements. If your robots.txt blocks these bots, or if your content is rendered entirely through JavaScript, the crawler can't read it. The model won't cite a source it can't parse, regardless of how good the content is.

That last point is worth dwelling on. A brand can have excellent content strategy and still be invisible to AI platforms because of a single line in a config file. AI answer tracking surfaces this problem. Without it, you'd spend months optimizing content that AI systems can't access in the first place.
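Checking for that one line is quick. The Python sketch below uses the standard library's `urllib.robotparser` to test whether a robots.txt file blocks PerplexityBot or GPTBot from a given URL. The sample robots.txt content and the `blocked_crawlers` helper are hypothetical, and the check covers robots.txt rules only. It says nothing about JavaScript rendering, which needs a separate test.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens named in the article.
AI_CRAWLERS = ("PerplexityBot", "GPTBot")


def blocked_crawlers(robots_txt: str, url: str, agents=AI_CRAWLERS) -> list[str]:
    """Return the crawlers that the given robots.txt text disallows for `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt: one crawler is blocked, everything else is allowed.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

blocked = blocked_crawlers(SAMPLE_ROBOTS, "https://example.com/pricing")
print(blocked)
```

To run this against a live site, you would fetch the site's own robots.txt and pass its text in place of `SAMPLE_ROBOTS`.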

The Right Platform to Track Perplexity AI Rankings and AI Answers at Scale

Once you've decided to run a systematic AI answer tracking strategy, the operational question is tooling. Running this manually, even for a focused prompt set of 50 to 100 queries across three platforms, isn't sustainable at a weekly cadence.

Topify covers the full tracking stack: visibility, position, sentiment, competitor benchmarking, and source analysis across ChatGPT, Gemini, Perplexity, DeepSeek, Doubao, and Qwen. For teams specifically looking to track Perplexity AI rankings, it tracks citation share at the domain level, which tells you not just whether you're mentioned but whether you're one of the 2 to 7 sources Perplexity actually links to.

In practice, a marketing team setting up AI answer tracking for the first time on Topify would typically start with 30 to 50 category-level prompts, establish a baseline across platforms within the first week, and use the competitor benchmarking view to identify which rivals are being cited in their place. That gap analysis directly informs the content and source strategy for the next 60 days.

The Source Analysis feature is worth highlighting separately. It traces which specific domains AI platforms are citing when they discuss your category, including competitor domains. That's how you find out whether a G2 profile, a Wired review, or a Reddit thread is what's pushing a competitor into first position. You can't close a citation gap you can't see.

On pricing, the Basic plan starts at $99/month (with a 17% saving on annual billing) and covers 100 prompts across 4 projects, with tracking for ChatGPT, Perplexity, and AI Overviews. The Pro plan at $199/month extends to 250 prompts and 10 seats, which suits teams managing multiple brands or verticals. Enterprise starts at $499/month with a dedicated account manager and custom configuration. Full details are on the Topify pricing page.

For teams managing AI answer tracking at agency scale, the multi-project structure matters as much as the metric coverage.

Conclusion

Only 16% of brands currently track AI search performance systematically. That number will look very different in 18 months. By 2028, an estimated $750 billion in consumer spend is projected to move through AI-powered search engines, and brands that haven't built a tracking infrastructure will be working backward from a significant visibility deficit.

The strategic window for establishing an AI answer tracking baseline is open right now. Start with category-level prompts, measure across inclusion rate, position, and sentiment, and use source analysis to find the specific gaps driving competitor visibility. Getting that foundation in place is what separates brands that adapt from those that spend next year wondering why their organic performance numbers are flat.

Get started with Topify to set up your first AI answer tracking dashboard.

FAQ

Q: What is an AI answer tracking strategy? A: An AI answer tracking strategy is a structured approach to monitoring and improving how your brand appears in the synthesized responses of AI platforms like ChatGPT, Perplexity, and Gemini. It covers which prompts you're tracking, which metrics you're measuring (inclusion rate, position, sentiment, citation share), and how you use that data to improve your AI visibility over time.

Q: How often should I monitor Perplexity AI rankings? A: Weekly is the practical minimum. Perplexity crawls the web in real time and its citation patterns shift as new content is published and indexed. Monthly tracking will often miss meaningful changes and make it harder to attribute any movement to specific content actions.

Q: How is AI answer tracking different from traditional rank tracking? A: Traditional rank tracking measures where your URL appears in a list. AI answer tracking measures whether your brand is included in a synthesized, opinionated response, and how it's characterized. The mechanisms are different: traditional SEO is retrieval-based, while AI answers are generated through contextual synthesis. A brand can rank on page one of Google and be nearly invisible to Perplexity if its content isn't structured for extraction.

Q: What's the best tool for AI answer tracking strategy? A: For teams that need multi-platform coverage across ChatGPT, Perplexity, Gemini, and others in a single dashboard, Topify covers visibility, position, sentiment, competitor benchmarking, and source analysis together. The Basic plan starts at $99/month for 100 prompts and 4 projects, which is sufficient for most single-brand tracking setups.