You've done the work. Domain authority is solid. Keyword rankings are climbing. Google Search Console looks healthy. Then someone on the leadership team asks, "Are we showing up when people ask ChatGPT for a recommendation in our category?" and the room goes quiet.

The problem isn't your SEO strategy. It's that the metrics your team tracks every Monday morning were built for a different era of search, and they can't answer that question.

AI Search Optimization Is Not a Better Version of SEO

Most marketers initially assume GEO is just SEO with a new name. That assumption is expensive.

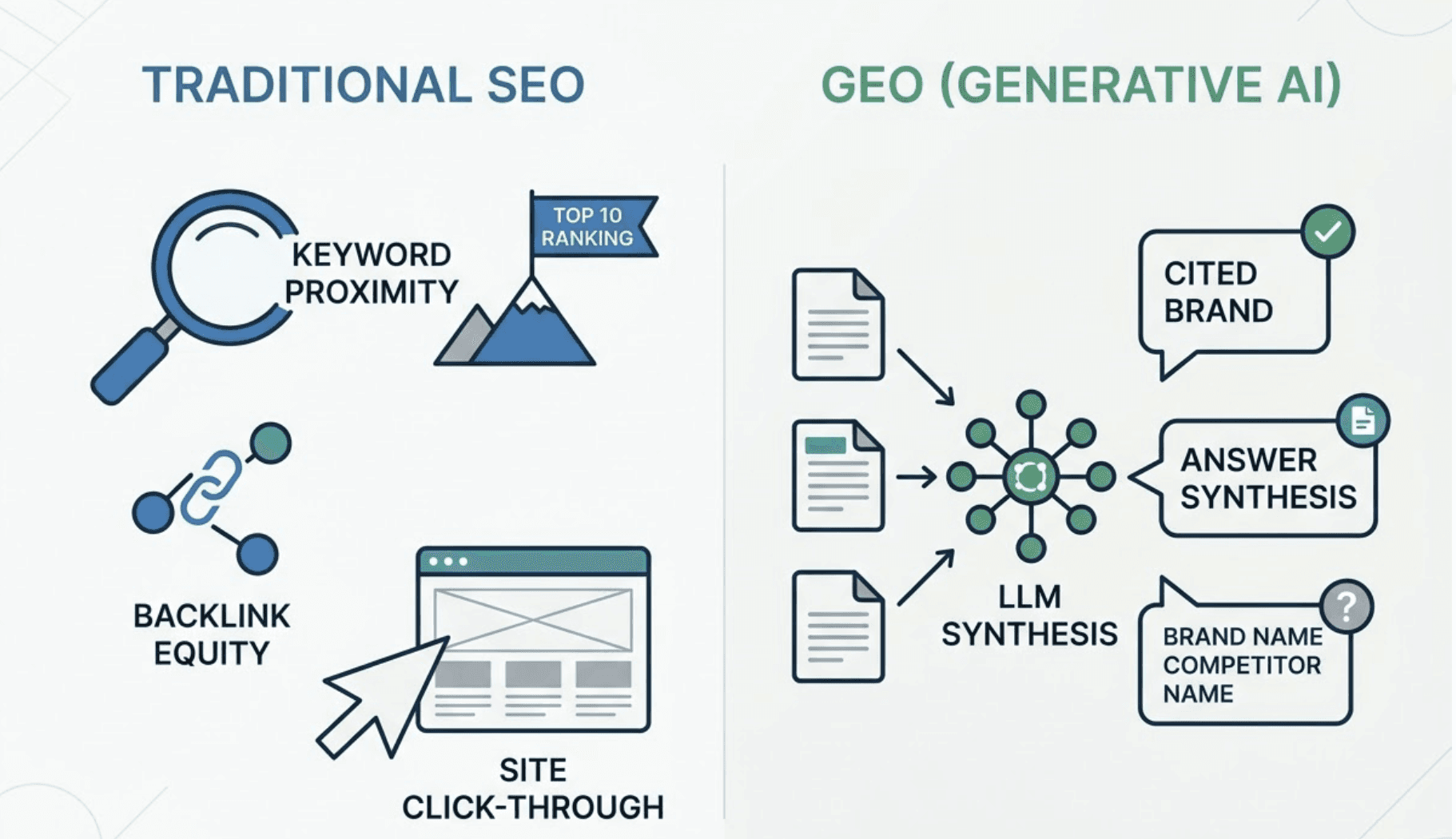

Traditional SEO optimizes for crawlers: clean indexing, backlink equity, keyword proximity. Success looks like a top-10 ranking and a click-through to your site. AI search optimization, also called Generative Engine Optimization (GEO), operates on entirely different logic. LLMs don't "rank" pages. They synthesize answers from the sources they deem most factually credible, then decide whether to cite your brand, name a competitor, or skip your category entirely.

The data is stark. In July 2025, 76% of AI-cited sources appeared in Google's top-10 results. By February 2026, that overlap had collapsed to just 17% to 38%, depending on the platform. Put differently: between 62% and 83% of what AI engines are recommending right now exists in a complete blind spot for traditional SEO tools. Around 31% of AI-cited pages don't even rank in the top 100 organic results.

That's not a ranking problem. That's a measurement gap.

The underlying mechanism is called "query fan-out." When someone types a prompt into Perplexity or ChatGPT, the model doesn't just search for that string. It decomposes the query into a cluster of related sub-queries, then retrieves answers from across the indexed web. Pages that rank for those hidden sub-queries are 161% more likely to be cited than those ranking only for the primary head term. Your domain authority doesn't predict this. Your backlink count doesn't either.
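One way to make fan-out concrete is to audit how many of the likely sub-queries your site already ranks for. The sketch below is illustrative only. The real decomposition happens inside the model and isn't exposed, so `sub_queries` is a hand-written guess, and `rankings` is a hypothetical mapping from query to organic position that you would export from your own rank tracker.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class FanOutCoverage:
    covered: List[str]  # sub-queries where the site ranks within the cutoff
    missing: List[str]  # sub-queries with no ranking, or a ranking past the cutoff

    @property
    def ratio(self) -> float:
        total = len(self.covered) + len(self.missing)
        return len(self.covered) / total if total else 0.0


def fan_out_coverage(
    sub_queries: List[str],
    rankings: Dict[str, int],
    max_position: int = 10,
) -> FanOutCoverage:
    """Split guessed sub-queries into those the site ranks for and those it doesn't."""
    covered, missing = [], []
    for query in sub_queries:
        position = rankings.get(query)
        if position is not None and position <= max_position:
            covered.append(query)
        else:
            missing.append(query)
    return FanOutCoverage(covered, missing)


# Hypothetical data: guessed sub-queries for the prompt "best CRM for startups".
sub_queries = [
    "best crm for startups",
    "crm pricing comparison",
    "crm with email automation",
    "crm data migration checklist",
]
rankings = {
    "best crm for startups": 4,
    "crm pricing comparison": 27,
    "crm with email automation": 8,
}

coverage = fan_out_coverage(sub_queries, rankings)
print(f"{coverage.ratio:.0%} of sub-queries covered; gaps: {coverage.missing}")
```

The `missing` list is the useful output: it names the sub-topics where new or improved content is most likely to earn a citation.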

What a Real AI Search Optimization Platform Actually Tracks

Search "best GEO platform" and you'll find tools claiming to track AI visibility. Many of them monitor a single engine, usually ChatGPT, and call it done. That's not AI search optimization. That's a partial snapshot of one model's behavior on one day.

Here's why that matters: only 11% of sites are cited by both ChatGPT and Perplexity for the same query. Each model has its own "trust profile," its own citation patterns, and its own way of characterizing brands. A platform that only monitors ChatGPT is missing the majority of the story.
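To check this overlap for your own category, you can compare the domains each engine cites for the same prompt. This is a minimal sketch, assuming you have already collected cited domains per engine. The function name and sample domains are hypothetical, and it measures overlap as shared domains divided by all cited domains, which may not be how the 11% figure was calculated.

```python
from typing import Set


def shared_citation_share(citations_a: Set[str], citations_b: Set[str]) -> float:
    """Fraction of all cited domains that both engines cite (Jaccard overlap)."""
    all_cited = citations_a | citations_b
    if not all_cited:
        return 0.0
    return len(citations_a & citations_b) / len(all_cited)


# Hypothetical cited domains for one prompt on two engines.
chatgpt_citations = {"vendor-a.com", "review-site.com", "vendor-b.com"}
perplexity_citations = {"vendor-b.com", "analyst-blog.com", "forum.example.com"}

overlap = shared_citation_share(chatgpt_citations, perplexity_citations)
print(f"Shared citation share: {overlap:.0%}")
```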

An enterprise-grade AI search optimization platform needs to track at least four or five major engines: ChatGPT, Google Gemini, Perplexity, DeepSeek, and increasingly Grok. Each captures a different audience segment. Perplexity's user base skews heavily toward senior leadership: 30% are in C-suite or VP-level roles, and 65% work in high-income white-collar professions. For B2B brands, a citation on Perplexity may be worth more than a broader presence elsewhere.

Topify is built around a seven-dimension monitoring framework that measures what actually drives model citations: Visibility (how often the brand appears), Sentiment (how the model characterizes it), Position (whether it's the first recommendation or the fifth), Source Attribution (cited with a link vs. just mentioned), Feature Coverage (accuracy of how the AI describes capabilities), Security Credibility (perception of data protection), and Citation Growth (velocity of new citations over time). That last one is a leading indicator. If citation growth is flat, your GEO strategy isn't compounding.
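Citation growth is simple to approximate yourself as a period-over-period percentage change. The sketch below is not Topify's formula, which the article doesn't specify. The function name and the weekly counts are assumptions for illustration.

```python
from typing import List, Optional


def citation_growth(previous: int, current: int) -> Optional[float]:
    """Percentage change in citation count between two periods.

    Returns None when the previous period had no citations,
    because the percentage change is undefined in that case.
    """
    if previous <= 0:
        return None
    return (current - previous) * 100 / previous


# Hypothetical weekly citation counts for one brand across all tracked engines.
weekly_citations: List[int] = [40, 50, 50, 55]

growth = [
    citation_growth(prev, curr)
    for prev, curr in zip(weekly_citations, weekly_citations[1:])
]
print(growth)
```

A run of values near zero is the "flat" signal described above: citations are holding steady but not compounding.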

The 3 Evaluation Criteria Most Teams Skip When Choosing a Platform

Most platform evaluations focus on dashboards and pricing tiers. The criteria that actually determine whether a platform delivers ROI are usually buried in the technical documentation.

1. Integration Stability: Can It Keep Up With AI's Volatility?

AI engines are not stable infrastructure. Google's AI Overviews fluctuated from appearing in 25% of queries down to 16% within a single quarter in 2025. A platform built to track AI visibility needs to handle that volatility without generating false signals or requiring manual recalibration every month.

The real test of integration stability isn't whether the API documentation is clean. It's whether the platform can connect to your CRM and attribution stack to identify what the research calls "Answer-Assisted Leads": prospects who encountered your brand in an AI response and went directly to your pricing page, bypassing your standard funnel. If your GEO platform can't surface that behavioral data, it's a dashboard, not a strategic asset.

That's the gap most teams discover six months after onboarding.
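As a rough illustration of what surfacing "Answer-Assisted Leads" could look like, the sketch below flags sessions that arrive from an AI engine and land directly on a pricing page. Everything here is an assumption: the session fields (`referrer`, `landing_page`), the list of referrer hostnames, and the `/pricing` path are all hypothetical and would need to match your own analytics export. Many AI-influenced visits carry no referrer at all, so treat the result as a lower bound.

```python
from typing import Dict, List
from urllib.parse import urlparse

# Assumed referrer hostnames for AI engines; adjust to what your analytics actually records.
AI_REFERRER_HOSTS = {
    "chatgpt.com",
    "perplexity.ai",
    "www.perplexity.ai",
    "gemini.google.com",
}


def is_answer_assisted(session: Dict[str, str], pricing_path: str = "/pricing") -> bool:
    """True if the session came from an AI engine and landed directly on the pricing page."""
    referrer_host = urlparse(session.get("referrer", "")).hostname or ""
    landing_path = urlparse(session.get("landing_page", "")).path.rstrip("/")
    return referrer_host in AI_REFERRER_HOSTS and landing_path == pricing_path


# Hypothetical sessions exported from a web analytics tool.
sessions: List[Dict[str, str]] = [
    {"referrer": "https://chatgpt.com/", "landing_page": "https://example.com/pricing"},
    {"referrer": "https://www.google.com/search?q=x", "landing_page": "https://example.com/pricing"},
    {"referrer": "https://www.perplexity.ai/search/abc", "landing_page": "https://example.com/blog/geo"},
    {"referrer": "", "landing_page": "https://example.com/pricing/"},
]

leads = [s for s in sessions if is_answer_assisted(s)]
print(f"{len(leads)} answer-assisted session(s) out of {len(sessions)}")
```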

2. Security Measures: Your Prompt Library Is Strategic Intelligence

This is the evaluation criterion that enterprise buyers most consistently underestimate. When your marketing team inputs core brand prompts, competitor benchmarks, and positioning language into a third-party AI platform, you're handing over your strategic roadmap.

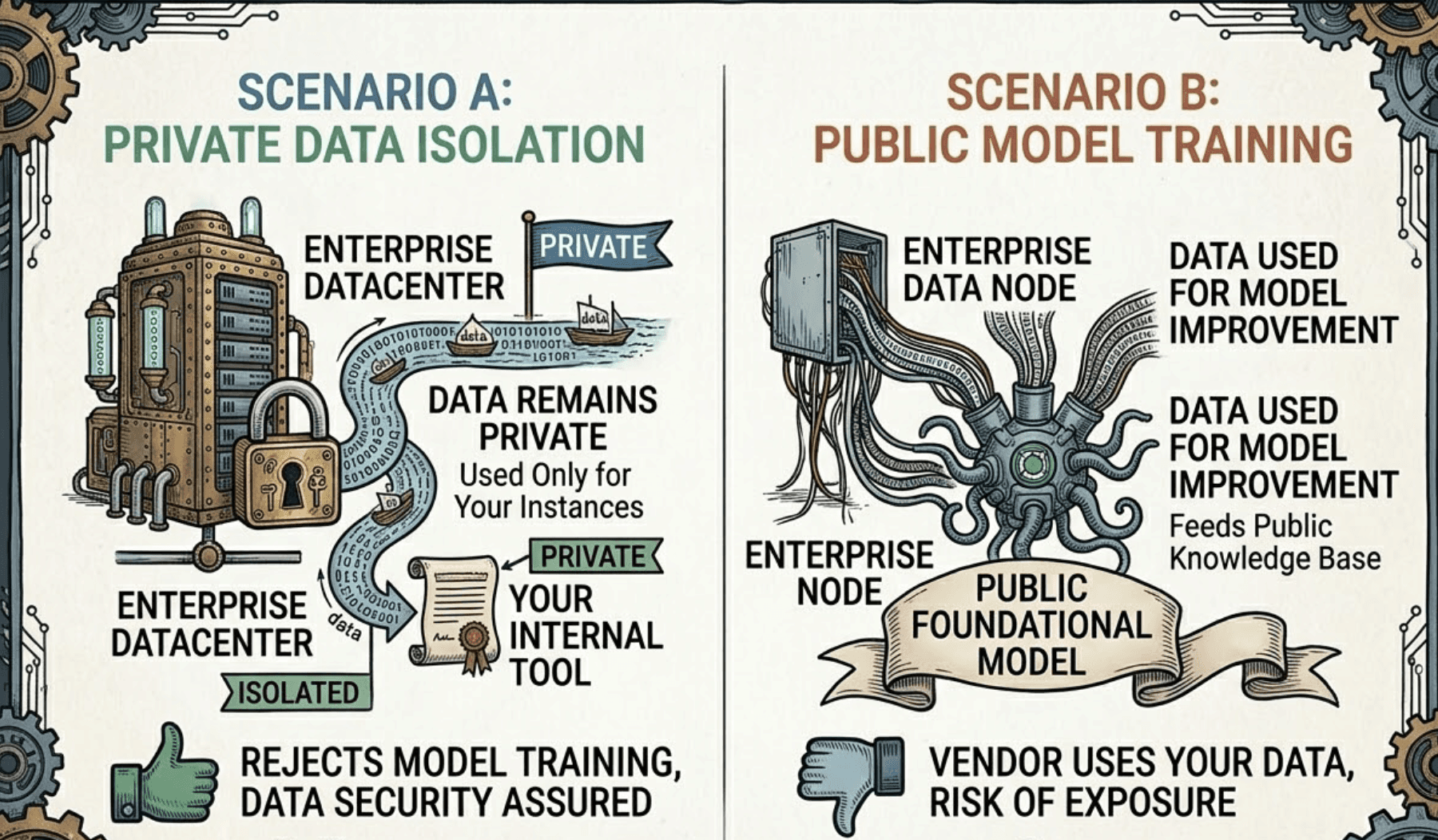

84% of professionals say they would reject a tool that uses their data for model training. The question to ask every vendor isn't "is my data secure?" It's "is my data isolated, and is it being used to improve your public models?"

Three specific risks to evaluate on any AI search visibility platform:

Data Training Risk: Is your brand's proprietary prompt library being used to refine the vendor's LLMs? A responsible platform offers explicit data isolation protocols, not just a checkbox in the terms of service.

Shadow AI Vulnerability: Are individual team members using "point solutions" (random AI tools) that might leak competitive intelligence? Centralized AI governance and role-based access control (RBAC) matter here.

Compliance Alignment: For regulated industries, ISO 42001 and SOC 2 alignment isn't optional. Ask for audit readiness documentation upfront, not after the procurement process has started.

Topify's Enterprise plan addresses these concerns through dedicated account manager oversight and data isolation infrastructure, ensuring that competitive intelligence entered into the platform stays within the organization's data perimeter.

3. Ease of Execution: How Long From Data to Action?

The "most straightforward AI search optimization platform" isn't the one with the cleanest UI. It's the one that closes the gap between insight and execution as fast as possible.

Traditional GEO workflows follow a four-step cycle: collect data, analyze manually, formulate strategy, update content manually. That cycle takes weeks. AI models update and re-index content daily. By the time a manual workflow completes one optimization pass, the model's citation patterns may have already shifted.

Research shows that moving from manual to automated workflows eliminates 87% of the time required to build and maintain GEO tests. Topify's One-Click Execution model replaces the manual cycle: you define your goals in plain language, the AI agent generates the optimized content adjustments and technical markup, and you deploy with a single click. By day 80 on an automated platform, clients typically see a 97% increase in citation growth, a result that's nearly impossible to replicate through manual effort.

AI Search Optimization GEO Platform vs. Traditional SEO: Run Both, But Don't Confuse Them

GEO doesn't replace SEO. The two channels operate in parallel, and you need both. What you can't do is use one to measure the other.

SEO logic: keywords + backlinks = click-through to site. GEO logic: entity clarity + factual density = citation in AI narrative. These are different incentive structures optimized by different signals. If your team tries to infer AI visibility from organic rankings, they'll be blind to the 62% to 83% of AI citations that, as of February 2026, come from outside Google's top-10 results.

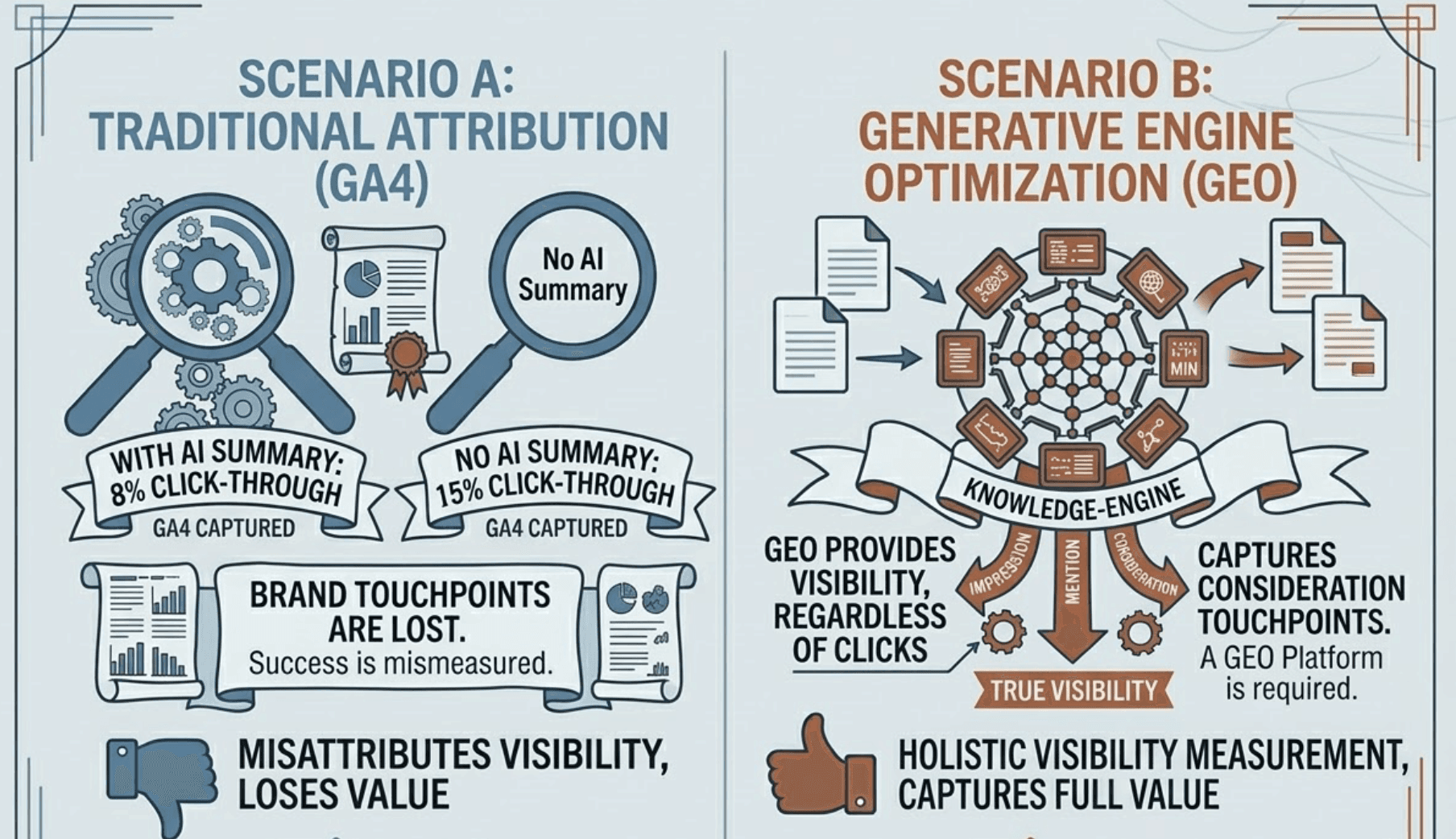

There's also the zero-click reality to account for. Studies of 68,000 searches found that when an AI Overview is present, only 8% of users click a link, versus 15% when no AI summary is shown. This means your brand can win a genuine consideration touchpoint in the buyer's journey without a single session appearing in GA4. Traditional attribution models won't capture it. A GEO platform will.
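The click-through figures above translate into traffic terms with a few lines of arithmetic. In this sketch the 8% and 15% rates come from the study cited above, while the 1,000-search volume and the function name are assumptions for illustration.

```python
def expected_clicks(searches: int, ctr_pct: float) -> float:
    """Expected number of link clicks for a given search volume and click-through rate."""
    return searches * ctr_pct / 100


searches = 1000  # hypothetical monthly search volume for one query

clicks_with_overview = expected_clicks(searches, 8)      # AI Overview shown
clicks_without_overview = expected_clicks(searches, 15)  # no AI summary

# Relative drop in clicks when an AI Overview is present.
relative_drop_pct = (
    (clicks_without_overview - clicks_with_overview) * 100 / clicks_without_overview
)
print(
    f"{clicks_with_overview:.0f} vs {clicks_without_overview:.0f} clicks, "
    f"a relative drop of about {relative_drop_pct:.0f}%"
)
```

At those rates, roughly 47% of the clicks disappear even though the brand may still have been seen in the AI summary.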

The "dual-track" framework for marketing leads looks like this: use your traditional SEO stack to manage organic rankings and drive traffic. Use a dedicated AI search optimization platform like Topify to manage what the research calls "Machine-Readable Trust": the signals that determine whether an AI engine cites your brand, characterizes it positively, and places it first in a recommendation list. These are parallel disciplines, not competing ones.

Content format matters too. AI models are hallucination-averse: they preferentially cite content that provides quantifiable data they can verify. Adding statistics to a page yields a 41% improvement in AI visibility. Adding direct quotations improves it by 37%. Technical source attribution yields a 115% improvement for content ranking in position five. GEO rewards data density, while traditional SEO often rewards descriptive prose. The optimization targets are different.

How to Choose an AI Search Optimization Platform: A Practical Framework

The selection process matters as much as the platform itself. Here's how to evaluate options without getting distracted by dashboard aesthetics or feature lists.

Step 1: Map your AI engine coverage needs. Start with your audience. If you're B2B and enterprise-focused, Perplexity's leadership-heavy demographics make it a priority. If you're consumer-facing, ChatGPT's 800 million weekly active users can't be ignored. Don't let a vendor sell you a platform that covers one engine and calls it multi-platform.

Step 2: Verify data isolation before the demo ends. Ask the specific question: "Is my brand's prompt data used in any form to train or fine-tune your public or shared models?" Get the answer in writing. If the vendor can't answer clearly, that's the answer.

Step 3: Test the execution path, not just the reporting. Most platforms can show you what's happening. Fewer can help you act on it within the same session. Ask for a live walkthrough of how a GEO strategy goes from insight to deployed content. If the answer involves a multi-week manual workflow, you're buying a reporting tool, not an optimization platform.

Step 4: Evaluate real-time competitor tracking. AI recommendation patterns shift when competitors publish new content, earn citations from new domains, or shift their positioning. A platform needs to surface these changes as they happen, not in a weekly digest. Topify's Dynamic Competitor Benchmarking tracks who AI engines recommend in real time, so you can identify when a rival gains ground and respond before the gap compounds.

Topify covers ChatGPT, Gemini, Perplexity, DeepSeek, Doubao, Qwen, and other major AI platforms across global markets. It's trusted by 50+ enterprises and startups, and its Basic plan starts at $99/month, covering 100 prompts and 9,000 AI answer analyses across core platforms. The Enterprise plan, starting at $499/month, adds dedicated account management and the data isolation infrastructure that regulated industries require. Start with a 30-day trial to benchmark current AI visibility before committing to a full deployment.

Conclusion

The divergence between organic rankings and AI citations isn't a temporary anomaly. It's the new baseline. As of February 2026, between 62% and 83% of what AI engines are recommending falls outside the visibility of traditional SEO stacks. That gap will widen, not narrow, as LLMs become the default interface for product discovery.

The practical starting point isn't overhauling your content strategy. It's establishing a baseline: which AI engines are citing your brand, what they're saying about it, and where competitors are already winning the AI recommendation. You can't optimize what you're not measuring.

Traditional SEO built your discoverability on Google. An AI search optimization platform builds your discoverability in the conversations that increasingly happen before a buyer ever runs a search.

FAQ

Q: What is AI search optimization and how is it different from SEO?
A: AI search optimization (GEO) focuses on influencing how LLMs like ChatGPT and Gemini cite and characterize your brand within generated responses. Traditional SEO optimizes for crawler-based ranking signals like backlinks and keyword proximity. By February 2026, only 17-38% of AI-cited content overlaps with Google's top-10 results, meaning the two disciplines now measure and optimize fundamentally different things.

Q: What security measures should an AI search optimization platform have?
A: Look for explicit data isolation protocols (your prompt library and competitive intelligence should never be used to train or refine public models), role-based access control (RBAC) to prevent shadow AI leakage, and SOC 2 or ISO 42001 alignment documentation. 84% of professionals say they'd reject a tool that uses their data for model training, so get the vendor's data policy in writing before onboarding.

Q: How do I evaluate integration stability for a GEO platform?
A: True integration stability goes beyond API documentation. Test whether the platform can connect to your CRM and web analytics to identify "Answer-Assisted Leads," prospects who discovered your brand through an AI response and skipped standard top-of-funnel content. If the platform can't surface that behavioral data, it can't close the attribution gap between AI visibility and pipeline impact.

Q: Is there a straightforward AI search optimization platform for non-technical marketing teams?
A: Yes. The key differentiator is whether the platform bridges the gap between data and action. Topify's One-Click Execution lets non-technical users define goals in plain language and deploy optimized content strategies without manual workflows. Clients using automated execution typically see a 97% increase in citation growth by day 80, compared to manual GEO efforts that take months to produce measurable results.