Your domain authority is solid. Your rankings held through the last core update. But when a potential customer asks ChatGPT for a tool recommendation in your category, your brand doesn't come up once. Traditional SEO metrics can't explain that gap, because they weren't designed to measure it.

That's the core tension facing most marketing teams right now. The dashboards look fine. The AI doesn't agree.

When Your Traffic Dashboard Stops Telling the Truth

The unreliability isn't a bug in your reporting stack. It's structural.

Approximately 60% to 69% of Google searches now end without a user clicking through to any website. AI Overviews synthesize answers directly on the search page — your content may be used as source material, but the user never arrives. Impressions go up. Traffic doesn't follow.

This is what researchers call the "Crocodile Effect": a brand's presence widens as its content gets consumed by AI summaries, while actual clicks narrow or disappear. A citation in an AI response still shapes brand consideration and purchase intent. It just doesn't register in your click-through rate.

For brands still optimizing around CTR as their primary success metric, this means a significant portion of pre-purchase influence is now happening entirely off-dashboard.

| Feature | Traditional Search Visibility | AI-First Search Visibility |
|---|---|---|
| Primary Output | Ranked list of links | Synthesized narrative answer |
| Success Metric | Click-Through Rate | Mention Rate and Citation Share |
| User Interaction | Scanning and selecting links | Trusting a direct recommendation |
| Discovery Mechanism | Keyword matching and backlinks | Semantic patterns and entity clarity |
| Buyer Journey | Multi-click research | Compressed, conversational evaluation |

The buyer journey is also getting shorter. Instead of searching, clicking, reading, and comparing across five tabs, users now engage in conversational evaluation with AI acting as an expert filter. Industry data suggests 25% of B2B buyers already use generative AI over traditional search for vendor research. That number is climbing. If your brand is excluded from the AI-generated shortlist, you're invisible at the most critical stage of the decision.

What AI Brand Visibility Actually Measures

AI brand visibility isn't a single number. It's a multi-dimensional construct that quantifies how frequently, accurately, and favorably a brand appears within generative AI environments.

Traditional SEO focuses on positions #1 through #10. AI visibility spans six distinct dimensions that together determine your brand's "Share of Model."

Mention Rate is the raw signal — the percentage of sampled AI responses that reference your brand. Low mention rate means the AI doesn't recognize you as a relevant player in your category. It's the foundational metric. If it's near zero, nothing else matters yet.

Average Position in Narrative matters because not all mentions are equal. Being cited first as the primary recommendation carries significantly more weight than appearing as "another option worth considering" near the end of a response. Position is the AI equivalent of #1 vs. #7 on a SERP.

Sentiment and Contextual Framing goes beyond positive vs. negative. AI models describe brands with specific adjectives and contextual nuances. A high mention rate can still damage a brand if the AI consistently frames it as "outdated" or "expensive." Aspect-based sentiment tracking catches these framings before they compound.

Citation Source and Attribution Accuracy measures how often an AI engine credits your website as the authoritative source for a fact or recommendation. It also checks attribution accuracy — whether the AI is correctly representing your pricing, features, and positioning, or quietly misquoting you.

Prompt Coverage and Intent Width evaluates the breadth of your visibility across different types of user questions. High coverage means your content is robust enough to satisfy various user intents. Gaps in coverage reveal the specific prompt categories where a competitor is dominating the conversation.

Competitor Gap and Share of Voice aggregates all the above into a relative benchmark. AI Share of Voice uses a weighted formula where the position and frequency of mentions combine into a composite dominance score, giving marketing teams a single number to track over time.
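To make the first, second, and last of these dimensions concrete, here is a minimal Python sketch that computes mention rate, average position, and a position-weighted Share of Voice from a set of sampled responses. The article does not specify the weighting formula, so the `1 / rank` weight is an assumption chosen for illustration, as are the brand names and the input shape (one ordered list of brand mentions per sampled answer, each brand listed at most once).

```python
from collections import defaultdict
from typing import Optional


def mention_rate(responses: list[list[str]], brand: str) -> float:
    """Fraction of sampled responses that mention the brand at all."""
    if not responses:
        return 0.0
    return sum(brand in brands for brands in responses) / len(responses)


def average_position(responses: list[list[str]], brand: str) -> Optional[float]:
    """Mean 1-based position of the brand, over responses that mention it."""
    positions = [brands.index(brand) + 1 for brands in responses if brand in brands]
    return sum(positions) / len(positions) if positions else None


def share_of_voice(responses: list[list[str]]) -> dict[str, float]:
    """Position-weighted share per brand; the shares sum to 1.

    Assumed weighting (not from the article): a mention at rank r scores 1 / r.
    """
    scores: dict[str, float] = defaultdict(float)
    for brands in responses:
        for rank, brand in enumerate(brands, start=1):
            scores[brand] += 1.0 / rank
    total = sum(scores.values())
    return {brand: score / total for brand, score in scores.items()} if total else {}


# Hypothetical sample: brands in the order each AI answer mentioned them.
sampled = [
    ["Acme", "Bolt", "Core"],
    ["Bolt", "Acme"],
    ["Bolt"],
    ["Core", "Bolt"],
]

if __name__ == "__main__":
    print("Acme mention rate:", mention_rate(sampled, "Acme"))
    print("Acme average position:", average_position(sampled, "Acme"))
    for brand, share in sorted(share_of_voice(sampled).items()):
        print(f"{brand}: {share:.1%}")
```

A different weighting (for example, counting only the first-named brand) would produce a different ranking, so the weight function is worth fixing before comparing numbers over time.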

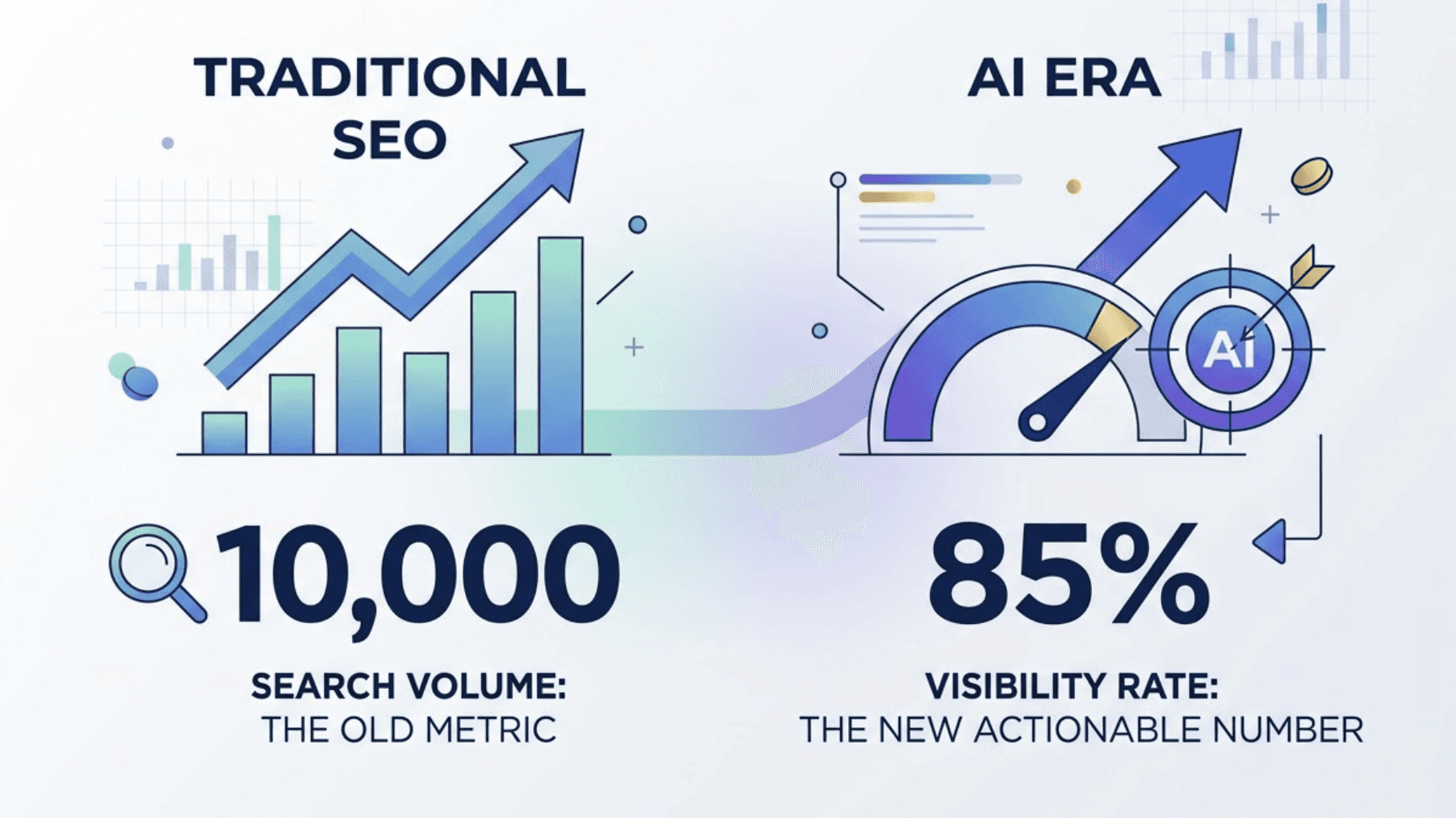

Visibility Rate vs. Search Volume: Two Different Numbers

In the traditional SEO era, search volume was the planning metric. It told you how many people were searching for a keyword. In the AI era, visibility rate is the more actionable number.

The difference is significant. A keyword might have 10,000 monthly searches, but if AI tools mention your brand in only 2% of the responses to that query, the volume does little for your brand. Conversely, a 90% visibility rate on a lower-volume, long-tail query with high purchase intent is often more valuable. AI responses tend to convert at 4 to 6 times the rate of traditional search results, so a high-intent, high-visibility combination compounds quickly.
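The arithmetic behind that comparison can be sketched in a few lines of Python. The 10,000 searches, 2%, and 90% figures come from the paragraph above; the 1,500 monthly volume for the long-tail query is a hypothetical number added for illustration, and the sketch assumes each search produces one AI answer in which the brand either appears or does not.

```python
def expected_brand_exposures(monthly_queries: int, visibility_rate: float) -> float:
    """Expected number of AI answers per month that include the brand."""
    if not 0.0 <= visibility_rate <= 1.0:
        raise ValueError("visibility_rate must be between 0 and 1")
    return monthly_queries * visibility_rate


# Head term from the text: 10,000 searches, 2% visibility.
head_term = expected_brand_exposures(10_000, 0.02)

# Long-tail query: 90% visibility; the 1,500 volume is an assumed figure.
long_tail = expected_brand_exposures(1_500, 0.90)

if __name__ == "__main__":
    print(f"Head term exposures: {head_term:.0f}")
    print(f"Long-tail exposures: {long_tail:.0f}")
```

Under these assumptions the lower-volume query yields several times more brand exposures than the head term, before any difference in purchase intent is taken into account.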

| Metric | Context | Strategic Goal |
|---|---|---|
| Search Volume | How many people ask? | Market size and reach |
| Visibility Rate | Do you exist in the answer? | Inclusion in the consideration set |
| Sentiment Score | How are you described? | Reputation and trust management |
| Citation Share | Are you the source of truth? | Establishing topical authority |

Why Sentiment Matters as Much as Frequency

Most brands start measuring AI visibility by checking whether they appear at all. That's a reasonable starting point. But brands that stop there often miss a more damaging problem.

AI models don't just name brands — they characterize them. If ChatGPT consistently describes your product as "a solid budget option" while your positioning is enterprise-grade, you have a sentiment problem that mention rate alone won't reveal. The goal isn't just to appear in AI answers. It's to appear accurately, in the right context, with the framing that matches your market position.
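A rough first pass at spotting a framing mismatch is to tally how often specific descriptors show up in sampled responses. The sketch below is a plain keyword count, not aspect-based sentiment analysis: it does not check which brand a descriptor is attached to, and the sample responses, brand names, and descriptor list are all hypothetical.

```python
import re
from collections import Counter


def framing_counts(responses: list[str], descriptors: list[str]) -> Counter:
    """Count how many responses contain each descriptor as a whole word or phrase."""
    counts: Counter = Counter()
    for text in responses:
        lowered = text.lower()
        for descriptor in descriptors:
            pattern = r"\b" + re.escape(descriptor.lower()) + r"\b"
            if re.search(pattern, lowered):
                counts[descriptor] += 1
    return counts


# Hypothetical AI responses about a brand positioned as enterprise-grade.
sample_responses = [
    "Acme is a solid budget option for small teams.",
    "For enterprise-grade needs, consider Bolt; Acme is the budget pick.",
    "Acme is affordable and easy to set up.",
]

if __name__ == "__main__":
    counts = framing_counts(sample_responses, ["budget", "enterprise-grade", "outdated"])
    for descriptor in ("budget", "enterprise-grade", "outdated"):
        print(descriptor, counts[descriptor])
```

In the second sample response, "enterprise-grade" refers to the competitor, which is exactly the kind of case a keyword tally cannot distinguish. A tally like this is useful for flagging responses to read manually, not as a final sentiment score.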

How Search Marketing Visibility Evolves in an AI-First Search Landscape

The shift from traditional search to AI-first search is best understood as a transition from Search Engine Optimization to Generative Engine Optimization (GEO). The two disciplines share some overlap, but their core logic is different enough that an SEO-only strategy will leave measurable gaps.

Three specific differences define the transition.

Retrieval vs. Synthesis. Traditional SEO optimizes for an algorithm to find a page and place it in a ranked list. GEO optimizes for a model to read the page, extract facts, and re-synthesize them into a new answer. What gets extracted depends on how cleanly the information is structured, not just how authoritative the domain is.

Backlink Authority vs. Consensus Authority. In traditional SEO, a backlink is a vote for a page. In GEO, authority is derived from web-wide consistency. If your brand is described similarly across Wikipedia, industry publications, Reddit, and your own site, the AI model gains confidence in the information. A strong domain with isolated coverage performs worse than a brand with consistent, distributed mentions.

Page Coverage vs. Fact Extractability. Traditional SEO rewards comprehensive, long-form content that covers every angle of a topic. GEO rewards "extractable blocks" — short, factual sections that directly answer a specific question, often structured in a "Bottom Line Up Front" format that retrieval-augmented generation (RAG) systems can parse efficiently.
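One way to package an extractable block is as question-and-answer structured data. The sketch below builds schema.org `FAQPage` JSON-LD from short answer-first pairs. The article does not name a specific schema type, so `FAQPage` is an assumption, as are the sample question and the helper name `faq_jsonld`; whether a given AI engine actually reads this markup is not something the sketch can confirm.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as schema.org FAQPage JSON-LD."""
    document = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(document, indent=2)


# Hypothetical answer-first block: the direct answer leads, detail follows.
example_pairs = [
    (
        "What is AI brand visibility?",
        "AI brand visibility measures how frequently, accurately, and favorably "
        "a brand appears in AI-generated responses.",
    ),
]

if __name__ == "__main__":
    print(faq_jsonld(example_pairs))
```

The output would typically be embedded in the page inside a `<script type="application/ld+json">` element, alongside the same question and answer in the visible content.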

| Discipline | Core Optimization Unit | Primary Authority Signal | Primary Content Format |
|---|---|---|---|
| SEO | Individual Web Page | Backlinks and Domain Rating | Comprehensive Guides |
| GEO | Factual Entity or Statement | Web-wide Consensus | Extractable Factual Snippets |
| AEO | Specific Direct Answer | Structured Data | Q&A Blocks and Tables |

None of this makes traditional SEO obsolete. It creates a two-layer strategy: SEO handles technical health and link-based authority; AI search optimization handles the semantic and citation layer that shapes how AI models perceive and recommend your brand.

Long-tail keywords are also changing roles. In traditional SEO, they captured niche traffic with lower competition. In AI search, they map to conversational prompts. The average AI prompt is approximately 23 words, compared to a 4-word traditional search query. That length requires content that is not just keyword-rich but conceptually dense enough to satisfy complex, multi-part user questions in a single response.

The 3 Blind Spots Most Brands Have in AI Search Right Now

Despite the clear shift in search behavior, many brands remain invisible to AI engines because of specific, correctable patterns in their digital strategy. These aren't random gaps — they tend to cluster around three predictable blind spots.

Blind Spot 1: Vague Positioning. AI models are pattern-matching engines that rely on clarity to categorize brands. If your company describes itself using generic terms like "innovative," "leading," or "solution-oriented," the model cannot semantically distinguish you from thousands of competitors. When asked for a recommendation, AI prioritizes brands with compressible positioning — those that can be described in a single, distinct sentence. The more specific the positioning, the more consistently the AI can identify when a user's query matches your brand.

Blind Spot 2: The Owned-Media Authority Silo. Traditional SEO focuses heavily on a brand's own website. But AI engines derive knowledge from a diverse array of sources: Reddit threads, industry forums, news sites, analyst reports, and third-party reviews. A brand can rank #1 on Google and still be ignored by ChatGPT if it lacks external validation. Research indicates that brands appearing in third-party mentions are 6.5 times more likely to be cited in AI answers than those relying primarily on their own domains.

Blind Spot 3: Content Freshness Decay. AI models with live-web retrieval, such as Perplexity and Google Gemini, place a premium on recency. Content that hasn't been updated in over 12 months is often treated as decayed, regardless of its backlink profile. Brands frequently disappear from AI Overviews not because their content is wrong, but because it lacks updated statistics, current dates, or references to recent developments. Freshness signals carry more weight in AI retrieval than they typically did in traditional SERP ranking.

That's the gap most brands still can't see.
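The third blind spot is the easiest to audit mechanically. Here is a minimal Python sketch that flags pages whose last update is more than 12 months old. The 365-day cutoff mirrors the threshold mentioned above; the URLs, dates, and the idea that you already have a last-updated date per page (for example, from a sitemap or CMS export) are assumptions.

```python
from datetime import date

STALE_AFTER_DAYS = 365  # mirrors the 12-month threshold described in the text


def stale_pages(last_updated: dict[str, date], today: date) -> list[str]:
    """Return URLs whose last update is more than STALE_AFTER_DAYS before `today`."""
    return sorted(
        url
        for url, updated in last_updated.items()
        if (today - updated).days > STALE_AFTER_DAYS
    )


# Hypothetical inventory of pages and their last-updated dates.
inventory = {
    "/pricing": date(2024, 9, 1),
    "/guides/getting-started": date(2023, 1, 10),
    "/blog/annual-benchmarks": date(2023, 11, 20),
}

if __name__ == "__main__":
    for url in stale_pages(inventory, today=date(2024, 12, 1)):
        print("Needs refresh:", url)
```

Passing `today` explicitly keeps the check reproducible; in a scheduled job you would pass `date.today()` instead.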

What an AI Visibility Platform Does That Your SEO Suite Doesn't

The tools most teams use for SEO — keyword rank trackers, backlink auditors, traffic dashboards — weren't built to measure model behavior. They track what happens on web pages. An AI visibility platform tracks what AI engines say about your brand and why.

Tracking Across Platforms Is Not Optional

AI search is not a single channel. ChatGPT, Gemini, Perplexity, DeepSeek, and others each rely on different training data and retrieval logic. Your brand might appear consistently in ChatGPT responses but rarely in Perplexity. The reasons for that gap (different citation sources, different semantic associations, different update frequencies) won't be visible in a single-platform report.

Topify tracks brand performance across seven key metrics (visibility, sentiment, position, volume, mentions, intent, and CVR) simultaneously across major AI platforms. In practice, this means a marketing team can see a drop in Gemini mentions, trace it to a specific content category that lost freshness signals, and address it directly — rather than running separate manual tests on each platform.

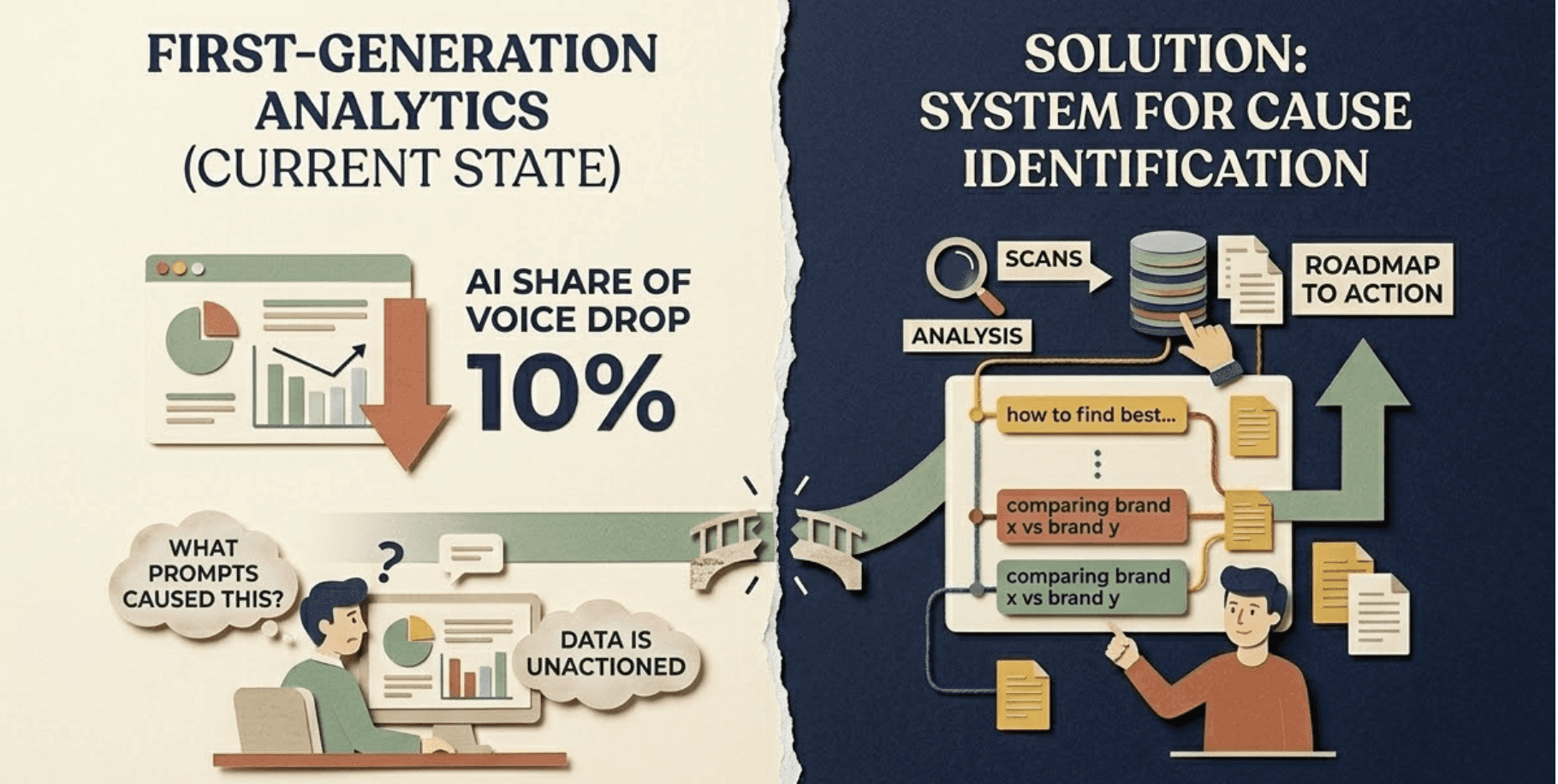

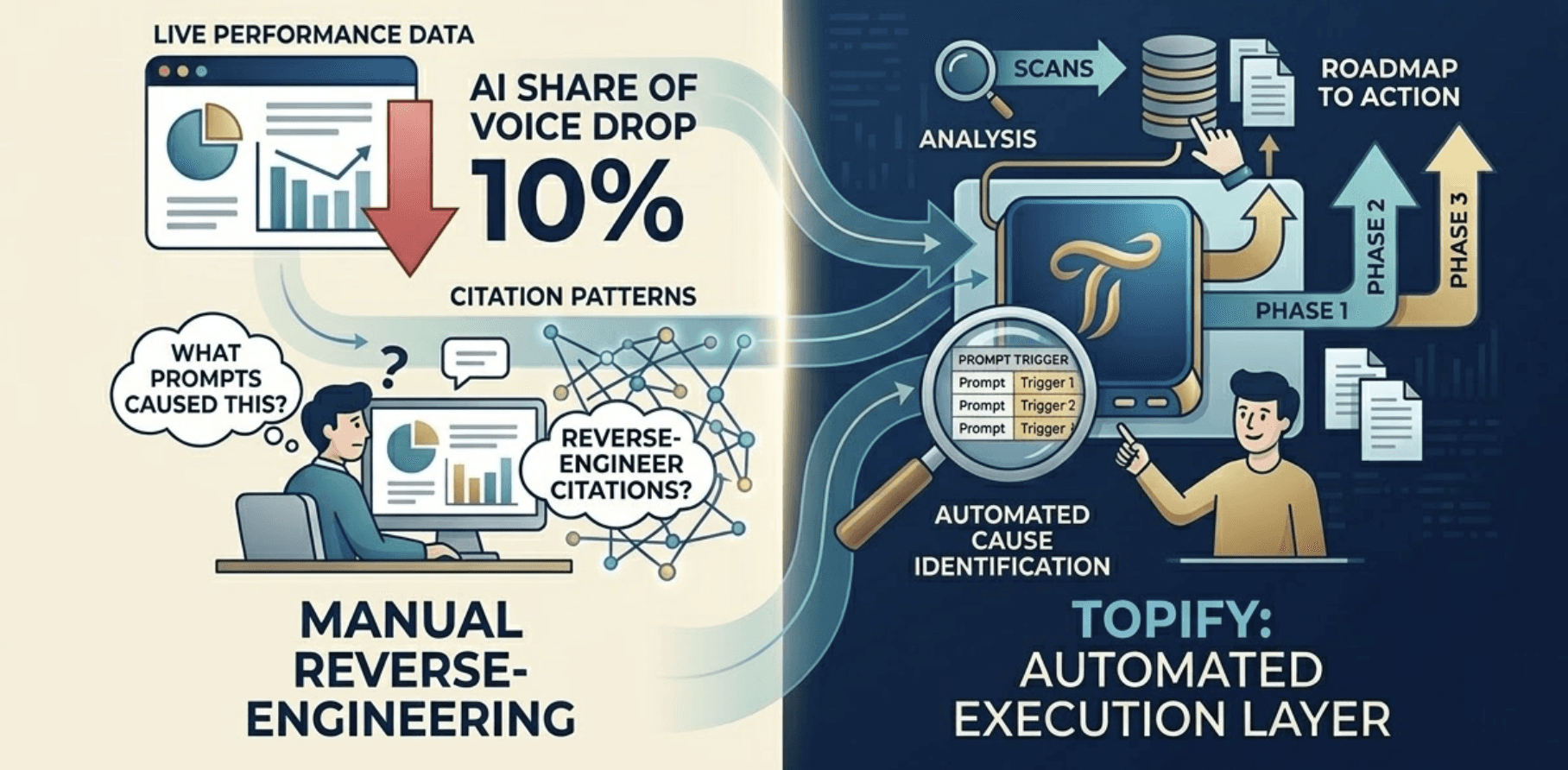

From Data to Action: The Gap Most Tools Don't Close

The primary limitation of first-generation AI search analytics tools is that they provide monitoring without a roadmap. A brand sees its AI Share of Voice drop by 10%, but without a system that identifies which specific prompts triggered the drop, the data sits unactioned.

The more useful approach is an execution layer that connects AI search intelligence to content and strategy decisions. Topify's platform bridges this by identifying "explicit opportunities" — specific prompts where a competitor is cited for a high-value query — and surfacing the content and structural changes most likely to shift that outcome. The one-click execution model means teams can define their optimization goals in plain language and deploy the strategy without building manual workflows from scratch.

Building a Measurement Framework for AI Brand Visibility

Ad-hoc prompting — manually checking whether your brand appears in a few ChatGPT queries — is not a measurement framework. It's a spot check. Brands that want to manage AI search analytics systematically need four core indicators and a structured implementation cycle.

AI Inclusion Rate (AAIR): The percentage of tracked industry prompts where your brand appears in the synthesized answer. This is the North Star metric. Industry benchmarks suggest 20% to 40% for non-branded terms in competitive categories.

Citation Attribution Rate (CAR): How often the AI explicitly links to your domain as a source. This drives pre-qualified referral traffic and establishes topical authority. Category leaders typically achieve 40% to 70% citation share for their core topics.

Sentiment Polarization Ratio: The distribution of positive vs. neutral/negative framing in brand mentions. A positive-to-neutral ratio of at least 70/30 is a reasonable baseline goal for brands actively managing their AI presence.

Prompt Intent Coverage: Whether your brand appears across all five search stages (informational, navigational, transactional, comparison, and implementation). Coverage below 50% across the buyer journey suggests significant blind spots in your content architecture.
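The four indicators above can be computed from one table of sampled prompts. The Python sketch below is one possible reading of the definitions, not a standard: it assumes citation rate is measured against all sampled responses, that the sentiment figure is the positive share of responses that mention the brand, and that a stage counts as covered if the brand appears in at least one response for it. The `Sample` record and its field names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# The five search stages named in the text.
STAGES = ("informational", "navigational", "transactional", "comparison", "implementation")


@dataclass
class Sample:
    intent: str                # one of STAGES
    mentioned: bool            # brand appears in the synthesized answer
    cited: bool                # answer links to the brand's domain as a source
    sentiment: Optional[str]   # "positive", "neutral", "negative", or None if not mentioned


def framework_report(samples: list[Sample]) -> dict[str, float]:
    """Compute the four indicators from a batch of sampled prompt results."""
    if not samples:
        raise ValueError("at least one sample is required")
    total = len(samples)
    mentions = [s for s in samples if s.mentioned]
    positive = sum(s.sentiment == "positive" for s in mentions)
    covered_stages = {s.intent for s in mentions} & set(STAGES)
    return {
        "inclusion_rate": len(mentions) / total,
        "citation_rate": sum(s.cited for s in samples) / total,
        "positive_share": positive / len(mentions) if mentions else 0.0,
        "intent_coverage": len(covered_stages) / len(STAGES),
    }


# Hypothetical results for five tracked prompts, one per stage.
tracked = [
    Sample("informational", True, True, "positive"),
    Sample("comparison", True, False, "neutral"),
    Sample("transactional", False, False, None),
    Sample("implementation", True, True, "positive"),
    Sample("navigational", False, False, None),
]

if __name__ == "__main__":
    for name, value in framework_report(tracked).items():
        print(f"{name}: {value:.0%}")
```

Keeping all four numbers in one report makes it easier to compare them against the benchmark ranges quoted above on the same sample of prompts.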

For teams starting from zero, a 90-day implementation cycle is a practical starting point. The first 30 days focus on establishing a baseline: testing your top 20 business-critical queries across ChatGPT, Perplexity, and Gemini to calculate your current AI Share of Voice and identify the competitors the AI currently favors. Days 31 to 60 shift to content restructuring: rewriting key pages into Bottom Line Up Front (BLUF) formats, adding structured schema markup, and using AI search analytics to cluster queries into topical authority hubs. The final 30 days build external consensus through digital PR, community engagement, and coverage on the third-party sources AI engines cite most.

Topify functions as the execution layer across all three phases — connecting live performance data to specific optimization actions, without requiring teams to manually reverse-engineer AI citation patterns on their own.

Conclusion

AI brand visibility and traditional SEO visibility are not the same metric, and treating them as interchangeable is increasingly costly. A brand can hold strong Google rankings while being absent from every AI-generated recommendation in its category. The inverse is also possible and growing more common.

The brands closing this gap fastest aren't the ones with the highest domain authority. They're the ones that started measuring AI-specific signals early, built content structured for extractability rather than just comprehensiveness, and established the external consensus that gives AI models confidence to recommend them. That's a different optimization discipline. It starts with knowing where you stand, and what the AI is actually saying about you right now.

FAQ

Q: What is AI brand visibility, and how is it different from SEO rankings?

A: AI brand visibility measures how frequently, accurately, and favorably a brand appears in AI-generated responses from platforms like ChatGPT, Gemini, and Perplexity. Unlike SEO rankings, which track a URL's position in a list of links, AI visibility tracks whether a brand is mentioned in synthesized answers, how it's described, and whether it's recommended over competitors. The two metrics can move in opposite directions — a brand can rank well in Google while being largely absent from AI answers.

Q: How does search marketing visibility evolve in an AI-first search landscape?

A: The core shift is from competing for a ranked link to competing for inclusion in a synthesized response. Traditional search visibility was measured through impressions and clicks. AI-first search visibility is measured through mention rate, citation share, sentiment framing, and prompt coverage. The optimization logic also changes: backlink authority matters less than web-wide consensus, and long-form content matters less than clearly extractable, factual content blocks. AI search optimization (GEO) doesn't replace SEO — it adds a second measurement and strategy layer.

Q: What metrics should I track to measure AI search visibility?

A: Four core indicators cover most use cases: AI Inclusion Rate (AAIR), which measures how often your brand appears in relevant AI responses; Citation Attribution Rate, which tracks how often AI engines cite your domain as a source; Sentiment Polarization Ratio, which monitors how the AI frames your brand; and Prompt Intent Coverage, which checks whether your brand is visible across the full buyer journey, not just top-of-funnel queries.

Q: Why is my brand not showing up in ChatGPT even though my SEO is strong?

A: There are three common reasons. First, vague positioning makes it hard for AI models to associate your brand with specific user needs. Second, AI engines rely on external validation from third-party sources like industry publications, forums, and reviews — a strong website alone isn't sufficient. Third, content freshness matters significantly: pages not updated in 12+ months are often treated as lower-quality sources by AI retrieval systems, regardless of their backlink profile.